
Causal versus causal

Today I want to talk about the different ways the word “causal” is thrown around by statisticians versus finance quants, because it’s both confusing and really interesting.

But before I do, can I just take a moment to be amazed at how pervasive Gangnam Style has become? When I first posted the video on August 1st, I had no idea how much of a sensation it was destined to become. Here’s the Google trend graph for “Gangnam” versus “Obama”:

It really hit home last night as I was reading a serious Bloomberg article on the economic implications of Gangnam Style whilst the song was playing in the background at the playoff game between the Cardinals and the Giants.

Back to our regularly scheduled program. I’m first going to talk about how finance quants think about “causal models” and second how statisticians do. This has come out of conversations with Suresh Naidu and Rachel Schutt.

Causal modeling in finance

When I learned how to model causally, it basically meant something very simple: I never used “future information” to make a prediction about the future. I was strictly using information from the past, or that was available and I had access to, to make predictions about the future. In other words, as I trained a model, I always had in mind a timestamp explaining what the “present time” is, and all data I had access to at that moment had timestamps of availability for before that present time so that I could use this information to make a statement about what I think would happen after that present time. If I did this carefully, then my model was termed “causal.” It respected time, and in particular it didn’t have great-looking predictive power just because it was peeking ahead.
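Here’s a minimal sketch of that discipline in Python. The `Observation` class, the `available_at` field, and the `visible_at` helper are names I made up for illustration; the only point is that every data point carries a timestamp of availability, and the model only ever sees what was available before the “present time”.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Observation:
    name: str
    value: float
    available_at: datetime  # when this number could actually have been known


def visible_at(observations, present_time):
    """Keep only observations that were available strictly before present_time."""
    return [o for o in observations if o.available_at < present_time]


# Made-up data points, purely for illustration.
observations = [
    Observation("close_price", 101.2, datetime(2012, 10, 1, 16, 0)),
    Observation("earnings", 1.35, datetime(2012, 10, 3, 8, 30)),
    Observation("close_price", 99.8, datetime(2012, 10, 3, 16, 0)),
]

present_time = datetime(2012, 10, 3, 9, 0)
usable = visible_at(observations, present_time)
print([o.name for o in usable])  # the 16:00 close on Oct 3 is "the future" here
```

Anything that fails the timestamp check never reaches the training step, which is what keeps the backtest from peeking ahead.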

Causal modeling in statistics

By contrast, when statisticians talk about a causal model, they mean something very different. Namely, they mean whether the model shows that something caused something else to happen. For example, if we saw certain plants in a certain soil all died but those in a different soil lived, then they’d want to know if the soil caused the death of the plants. Usually to answer this kind of question, in an ideal situation, statisticians set up randomized experiments where the only difference between the treatments is that one condition (i.e. the type of soil, but not how often you water it or the type of sunlight it gets). When they can’t set it up perfectly (say because it involves people dying instead of plants) they do the best they can.

The differences and commonalities

On the one hand both concepts refer to and depend on time. There’s no way X caused Y to happen if X happened after Y. But whereas in finance we only care about time, in statistics there’s more to it.

So for example, if there’s a third underlying thing that causes both X and Y, but X happens before Y, then the finance people are psyched because they have a way of betting on the direction of Y: just keep an eye on X! But the statisticians are not amused, since there’s no way to prove causality in this case unless you get your hands on that third thing.
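A toy simulation makes the distinction concrete. This is a sketch with made-up numbers: a hidden variable `z` drives both `x` (seen first) and `y` (seen later), and `y` never reads `x` at all. The noise levels and sample size are arbitrary assumptions.

```python
import random

random.seed(0)


def correlation(xs, ys):
    """Plain Pearson correlation, written out to avoid extra dependencies."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


n = 5000
z = [random.gauss(0, 1) for _ in range(n)]       # the hidden third thing
x = [zi + random.gauss(0, 0.5) for zi in z]      # observed first
y = [zi + random.gauss(0, 0.5) for zi in z]      # observed later, never uses x

corr_xy = correlation(x, y)

# "Experiment": set x ourselves, independently of z. y does not respond.
x_forced = [random.gauss(0, 1) for _ in range(n)]
corr_forced = correlation(x_forced, y)

print(f"observed x vs y: {corr_xy:.2f}")
print(f"forced x vs y:   {corr_forced:.2f}")
```

The finance quant is happy with the first number, since watching `x` really does help bet on `y`. The statistician cares about the second one, which shows that changing `x` does nothing to `y`.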

Although I understand wanting to know the underlying reasons things happen, I have a personal preference for the finance definition, which is just plain easier to understand and test, and usually the best we can do with real world data. In my experience the most interesting questions relate to things that you can’t set up experiments for. So, for example, it’s hard to know whether blue-collar presidents would impose less elitist policy than millionaires, because we only have millionaires.

Moreover, it usually is interesting to know what you can predict for the future knowing what you know now, even if there’s no proof of causation, and not only because you can maybe make money betting on something (but that’s part of it).

Columbia Data Science course, week 6: Kaggle, crowd-sourcing, decision trees, random forests, social networks, and experimental design

Yesterday we had two guest lecturers, who took up approximately half the time each. First we welcomed William Cukierski from Kaggle, a data science competition platform.

Will went to Cornell for a B.A. in physics and to Rutgers to get his Ph.D. in biomedical engineering. He focused on cancer research, studying pathology images. While working on writing his dissertation, he got more and more involved in Kaggle competitions, finishing very near the top in multiple competitions, and now works for Kaggle. Here’s what Will had to say.

Crowd-sourcing in Kaggle

What is a data scientist? Some say it’s someone who is better at stats than an engineer and better at engineering than a statistician. But one could argue it’s actually someone who is worse at stats than a statistician. Being a data scientist is when you learn more and more about more and more until you know nothing about everything.

Kaggle uses prizes to induce the public to do stuff. This is not a new idea:

- The Royal Navy in 1714 couldn’t measure longitude, and put out a prize worth $6 million in today’s dollars to get help. John Harrison, an unknown cabinetmaker, figured out how to make a clock to solve the problem.

- In the U.S. in 2002 FOX issued a prize for the next pop solo artist, which resulted in American Idol.

- There’s also the X-prize company; $10 million was offered for the Ansari X-prize and $100 million was lost in trying to solve it. So it doesn’t always mean it’s an efficient process (but it’s efficient for the people offering the prize if it gets solved!)

There are two kinds of crowdsourcing models. First, we have the distributive crowdsourcing model, like Wikipedia, which asks for relatively easy but large numbers of contributions. Then, there are the singular, focused, difficult problems that Kaggle, DARPA, InnoCentive and other companies specialize in.

Some of the problems with some crowdsourcing projects include:

- they don’t always evaluate your submission objectively. Instead they have a subjective measure, so they might just decide your design is bad or something. This leads to high barrier to entry, since people don’t trust the evaluation criterion.

- Also, one doesn’t get recognition until after they’ve won or ranked highly. This leads to high sunk costs for the participants.

- Also, bad competitions often conflate participants with mechanical turks: in other words, they assume you’re stupid. This doesn’t lead anywhere good.

- Also, the competitions sometimes don’t chunk the work into bite size pieces, which means it’s too big to do or too small to be interesting.

A good competition has a do-able, interesting question, with an evaluation metric which is transparent and entirely objective. The problem is given, the data set is given, and the metric of success is given. Moreover, prizes are established up front.

The participants are encouraged to submit their models up to twice a day during the competitions, which last on the order of a few days. This encourages a “leapfrogging” between competitors, where one ekes out a 5% advantage, giving others incentive to work harder. It also establishes a band of accuracy around a problem which you generally don’t have: in other words, given no other information, you don’t know if your 75% accurate model is the best possible.

The test set y’s are hidden, but the x’s are given, so you just use your model to get your predicted y’s for the test set and upload them into the Kaggle machine to see your evaluation score. This way you don’t share your actual code with Kaggle unless you win the prize (and Kaggle doesn’t have to worry about which version of python you’re running).
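In code, the setup looks roughly like this. It’s a sketch only: accuracy is a stand-in metric (each competition names its own), and `my_model`, the toy data, and the variable names are made up.

```python
def accuracy(predicted, actual):
    """Fraction of test rows where the submitted label matches the hidden one."""
    if len(predicted) != len(actual):
        raise ValueError("a submission needs exactly one prediction per test row")
    hits = sum(p == a for p, a in zip(predicted, actual))
    return hits / len(actual)


# Kept on the platform's side; competitors never see these.
hidden_test_y = [1, 0, 1, 1, 0, 0, 1, 0]

# Handed out to every competitor.
test_x = [[0.9], [0.2], [0.7], [0.4], [0.1], [0.3], [0.8], [0.6]]


def my_model(row):
    """A competitor's model; only its outputs get uploaded, never this code."""
    return 1 if row[0] > 0.5 else 0


submission = [my_model(row) for row in test_x]
score = accuracy(submission, hidden_test_y)
print(score)
```

The only thing that crosses the wire is `submission`, a list of predicted y’s, which is why the platform doesn’t need to run anybody’s code.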

Note this leapfrogging effect is good and bad. It encourages people to squeeze out better performing models but it also tends to make models much more complicated as they get better. One reason you don’t want competitions lasting too long is that, after a while, the only way to inch up performance is to make things ridiculously complicated. For example, the original Netflix Prize lasted two years and the final winning model was too complicated for them to actually put into production.

The hole that Kaggle is filling is the following: there’s a mismatch between those who need analysis and those with skills. Even though companies desperately need analysis, they tend to hoard data; this is the biggest obstacle for success.

They have had good results so far. Allstate, with a good actuarial team, challenged their data science competitors to improve their actuarial model, which, given attributes of drivers, approximates the probability of a car crash. The 202 competitors improved Allstate’s internal model by 271%.

There were other examples, including one where the prize was $1,000 and it benefited the company $100,000.

A student then asked, is that fair? There are actually two questions embedded in that one. First, is it fair to the data scientists working at the companies that engage with Kaggle? Some of them might lose their job, for example. Second, is it fair to get people to basically work for free and ultimately benefit a for-profit company? Does it result in data scientists losing their fair market price?

Of course Kaggle charges a fee for hosting competitions, but is it enough?

[Mathbabe interjects her view: personally, I suspect this is a model which seems like an arbitrage opportunity for companies but only while the data scientists of the world haven’t realized their value and have extra time on their hands. As soon as they price their skills better they’ll stop working for free, unless it’s for a cause they actually believe in.]

Facebook is hiring data scientists, and they hosted a Kaggle competition where the prize was an interview. There were 422 competitors.

[Mathbabe can’t help but insert her view: it’s a bit too convenient for Facebook to have interviewees for data science positions in such a posture of gratitude for the mere interview. This distracts them from asking hard questions about what the data policies are and the underlying ethics of the company.]

There’s a final project for the class, namely an essay grading contest. The students will need to build it, train it, and test it, just like any other Kaggle competition. Group work is encouraged.

Thought Experiment: What are the ethical implications of a robo-grader?

Some of the students’ thoughts:

- It depends on how much you care about your grade.

- Actual human graders aren’t fair anyway.

- Is this the wrong question? The goal of a test is not to write a good essay but rather to do well in a standardized test. The real profit center for standardized testing is, after all, to sell books to tell you how to take the tests. It’s a screening, you follow the instructions, and you get a grade depending on how well you follow instructions.

- There are really two questions: 1) Is it wise to move from the human to the machine version of the same thing for any given thing? and 2) Are machines making things more structured, and is this inhibiting creativity? One thing is for sure, robo-grading prevents me from being compared to someone more creative.

- People want things to be standardized. It gives us a consistency that we like. People don’t want artistic cars, for example.

- Will: We used machine learning to research cancer, where the stakes are much higher. In fact this whole field of data science has to be thinking about these ethical considerations sooner or later, and I think it’s sooner. In the case of doctors, you could give the same doctor the same slide two months apart and get different diagnoses. We aren’t consistent ourselves, but we think we are. Let’s keep that in mind when we talk about the “fairness” of using machine learning algorithms in tricky situations.

Introduction to Feature Selection

“Feature extraction and selection are the most important but underrated step of machine learning. Better features are better than better algorithms.” – Will

“We don’t have better algorithms, we just have more data” –Peter Norvig

Will claims that Norvig really wanted to say we have better features.

We are getting bigger and bigger data sets, but that’s not always helpful. The danger is if the number of features is larger than the number of samples or if we have a sparsity problem.

We improve our feature selection process to try to improve performance of predictions. A criticism of feature selection is that it’s no better than data dredging. If we just take whatever answer we get that correlates with our target, that’s not good.

There’s a well known bias-variance tradeoff: a model is “high bias” if it’s too simple (the features aren’t encoding enough information). In this case lots more data doesn’t improve your model. On the other hand, if your model is too complicated, then “high variance” leads to overfitting. In this case you want to reduce the number of features you are using.

We will take some material from a famous paper by Isabelle Guyon published in 2003 entitled “An Introduction to Variable and Feature Selection”.

There are three categories of feature selection methods: filters, wrappers, and embedded methods. Filters order variables (i.e. possible features) with respect to some ranking (e.g. correlation with target). This is sometimes good on a first pass over the space of features. Filters take account of the predictive power of individual features, and estimate mutual information or what have you. However, the problem with filters is that you get correlated features. In other words, the filter doesn’t care about redundancy.
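Here’s a small sketch of a filter, using absolute Pearson correlation with the target as the ranking. The feature names and numbers are made up, and correlation is just one possible ranking.

```python
def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def rank_features(columns, target):
    """Order feature names by |correlation with target|, strongest first."""
    scores = {name: abs(pearson(values, target)) for name, values in columns.items()}
    return sorted(scores, key=scores.get, reverse=True)


# Toy data: the second feature is the first one in different units.
columns = {
    "spend": [1.0, 2.0, 3.0, 4.0, 5.0, 6.0],
    "spend_in_cents": [100.0, 200.0, 300.0, 400.0, 500.0, 600.0],
    "noise": [3.0, 1.0, 4.0, 1.0, 5.0, 2.0],
}
target = [1.1, 1.9, 3.2, 3.9, 5.1, 5.8]

ranking = rank_features(columns, target)
print(ranking)
```

The filter happily puts both `spend` and `spend_in_cents` at the top even though the second adds nothing once you have the first. That’s the redundancy problem in miniature.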

This isn’t always bad and it isn’t always good. On the one hand, two redundant features can be more powerful when they are both used, and on the other hand something that appears useless alone could actually help when combined with another possibly useless-looking feature.

Wrapper feature selection tries to find subsets of features that will do the trick. However, as anyone who has studied the binomial coefficients knows, the number of possible size-$k$ subsets of $n$ things, called $\binom{n}{k}$, grows exponentially. So there’s a nasty opportunity for overfitting by doing this. Most subset methods are capturing some flavor of minimum-redundancy-maximum-relevance. So, for example, we could have a greedy algorithm which starts with the best feature, takes a few more highly ranked, removes the worst, and so on. This is a hybrid approach with a filter method.
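To get a feel for how fast the number of candidate subsets blows up, here’s a quick count using Python’s `math.comb`. The choice of 100 features is just an illustrative assumption.

```python
from math import comb

n_features = 100  # illustrative size, not from any particular data set

# Number of distinct feature subsets of each size k.
subset_counts = {k: comb(n_features, k) for k in (1, 2, 5, 10, 50)}
for k, count in subset_counts.items():
    print(f"size {k:>2}: {count:,} subsets")

# Summed over every size, the count is 2**n: one yes/no choice per feature.
total_subsets = sum(comb(n_features, k) for k in range(n_features + 1))
print(total_subsets == 2 ** n_features)
```

Even at size 5 there are already tens of millions of subsets to try, which is why wrappers lean on greedy shortcuts instead of exhaustive search.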

We don’t have to retrain models at each step of such an approach, because there are fancy ways to see how the objective function changes as we change the subset of features we are trying out. These are called “finite differences” and rely essentially on Taylor Series expansions of the objective function.

One last word: if you have domain expertise on hand, don’t go into the machine learning rabbit hole of feature selection unless you’ve tapped into your expert completely!

Decision Trees

We’ve all used decision trees. They’re easy to understand and easy to use. How do we construct one? Choosing a feature to pick at each step is like playing 20 questions. We take whatever the most informative thing is first. For the sake of this discussion, assume we break compound questions into multiple binary questions, so the answer is “+” or “-”.

To quantify “what is the most informative feature”, we first define entropy for a random variable $X$ to mean:

$$H(X) = -p(x_+) \log_2 p(x_+) - p(x_-) \log_2 p(x_-)$$

Note when $p(x_*) = 0$ we define the term to vanish. This is consistent with the fact that

$$\lim_{t \to 0} t \log t = 0.$$

In particular, if either option has probability zero, the entropy is 0. It is maximized at $p(x_+) = 0.5$ for binary variables, which we can easily compute using the fact that $p(x_+) = 1 - p(x_-)$ in the binary case, and a bit of calculus.
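As a sanity check, here’s the standard two-outcome entropy, $-p \log_2 p - (1-p) \log_2 (1-p)$, written as a small Python function. The function name is mine; the convention that a zero-probability term contributes nothing is handled explicitly.

```python
from math import log2


def binary_entropy(p_plus):
    """Entropy in bits of a +/- variable where '+' has probability p_plus."""
    if not 0.0 <= p_plus <= 1.0:
        raise ValueError("p_plus must be a probability")
    total = 0.0
    for p in (p_plus, 1.0 - p_plus):
        if p > 0.0:  # a term with p == 0 is defined to vanish
            total -= p * log2(p)
    return total


for p in (0.0, 0.1, 0.5, 0.9, 1.0):
    print(p, round(binary_entropy(p), 3))
```

The values are symmetric around 0.5, hit zero at both ends, and peak at exactly one bit in the middle.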

Using this definition, we define the information gain for a given feature as the entropy we lose if we know the value of that feature.

To make a decision tree, then, we want to maximize information gain, and make a split on that. We keep going until all the points at the end are in the same class or we end up with no features left. In this case we take the majority vote. Optionally we prune the tree to avoid overfitting.
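Here’s a sketch of the split-choosing step for binary features. The feature names and the four toy rows are made up, and this only picks the first split; a real tree would recurse on each branch.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Entropy in bits of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())


def information_gain(rows, labels, feature):
    """Entropy of the labels minus the weighted entropy after splitting on feature."""
    n = len(labels)
    remainder = 0.0
    for value in {row[feature] for row in rows}:
        subset = [lab for row, lab in zip(rows, labels) if row[feature] == value]
        remainder += len(subset) / n * entropy(subset)
    return entropy(labels) - remainder


# Toy data: each row answers two binary questions with "+" or "-".
rows = [
    {"clicked_ad": "+", "weekend": "+"},
    {"clicked_ad": "+", "weekend": "-"},
    {"clicked_ad": "-", "weekend": "+"},
    {"clicked_ad": "-", "weekend": "-"},
]
labels = ["+", "+", "-", "-"]

# Split on whichever feature gives the largest information gain.
best = max(rows[0], key=lambda feature: information_gain(rows, labels, feature))
print(best)
```

Here `clicked_ad` separates the classes perfectly, so it wins the first split, while `weekend` tells us nothing and has zero gain.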

This is an example of an embedded feature selection algorithm. We don’t need to use a filter here because the “information gain” method is doing our feature selection for us.

How do you handle continuous variables?

In the case of continuous variables, you need to ask for the correct threshold of value so that it can be thought of as a binary variable. So you could partition a user’s spend into “less than $5” and “at least $5” and you’d be getting back to the binary variable case. In this case it takes some extra work to decide on the information gain because it depends on the threshold as well as the feature.
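One common way to do that extra work is to try each midpoint between consecutive observed values and keep the one with the largest information gain. The sketch below does that; the spend numbers and labels are made up, and midpoints are just one reasonable choice of candidate thresholds.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Entropy in bits of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())


def best_threshold(values, labels):
    """Return (threshold, gain) for the best 'value < threshold' split."""
    base = entropy(labels)
    distinct = sorted(set(values))
    best = (None, 0.0)
    for low, high in zip(distinct, distinct[1:]):
        threshold = (low + high) / 2
        below = [lab for v, lab in zip(values, labels) if v < threshold]
        above = [lab for v, lab in zip(values, labels) if v >= threshold]
        remainder = (len(below) * entropy(below) + len(above) * entropy(above)) / len(labels)
        gain = base - remainder
        if gain > best[1]:
            best = (threshold, gain)
    return best


# Toy data: user spend in dollars and a +/- label.
spend = [1.0, 2.0, 4.0, 6.0, 8.0, 9.0]
label = ["+", "+", "+", "-", "-", "-"]

threshold, gain = best_threshold(spend, label)
print(threshold, gain)
```

On this toy data the best cut lands at $5, splitting spend into “less than $5” and “at least $5”.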

Random Forests

Random forests are cool. They incorporate “bagging” (bootstrap aggregating) and trees to make stuff better. Plus they’re easy to use: you just need to specify the number of trees you want in your forest, as well as the number of features to randomly select at each node.

A bootstrap sample is a sample with replacement, which we usually take to be 80% of the actual data, but of course can be adjusted depending on how much data we have.

To construct a random forest, we construct a bunch of decision trees (we decide how many). For each tree, we take a bootstrap sample of our data, and for each node we randomly select (a second point of bootstrapping actually) a few features, say 5 out of the 100 total features. Then we use our entropy-information-gain engine to decide which among those features we will split our tree on, and we keep doing this, choosing a different set of five features for each node of our tree.
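The two sources of randomness are easy to sketch on their own. The helper names below are mine, and the 80% fraction and 5-of-100 features simply mirror the numbers above. Growing each tree would reuse the usual information-gain split, restricted to the features drawn at that node.

```python
import random


def bootstrap_sample(rows, fraction=0.8, rng=random):
    """Draw a sample with replacement, sized as a fraction of the data."""
    size = int(len(rows) * fraction)
    return [rng.choice(rows) for _ in range(size)]


def candidate_features(all_features, how_many=5, rng=random):
    """Pick the few features a single node is allowed to split on."""
    return rng.sample(all_features, how_many)


def majority_vote(votes):
    """Combine the per-tree predictions into the forest's prediction."""
    return max(set(votes), key=votes.count)


rng = random.Random(0)
rows = list(range(1000))                     # stand-ins for data rows
features = [f"f{i}" for i in range(100)]     # 100 hypothetical feature names

sample = bootstrap_sample(rows, rng=rng)            # one of these per tree
node_features = candidate_features(features, rng=rng)  # one of these per node

print(len(sample), len(set(sample)), node_features)
print(majority_vote(["+", "-", "+"]))
```

Because the sample is drawn with replacement, some rows show up more than once and others not at all, so each tree sees a slightly different data set.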

Note we could decide beforehand how deep the tree should get, but we typically don’t prune the trees, since a great feature of random forests is that it incorporates idiosyncratic noise.

Here’s what a decision tree looks like for surviving on the Titanic.

David Huffaker, Google: Hybrid Approach to Social Research

David is one of Rachel’s collaborators at Google. They had a successful collaboration: starting with complementary skill sets, an explosion of goodness ensued when they were put together to work on Google+ with a bunch of other people, especially engineers. David brings a social scientist perspective to the analysis of social networks. He’s strong in quantitative methods for understanding and analyzing online social behavior. He got a Ph.D. in Media, Technology, and Society from Northwestern.

Google does a good job of putting people together. They blur the lines between research and development. The researchers are embedded on product teams. The work is iterative, and the engineers on the team strive to have near-production code from day 1 of a project. They leverage cloud infrastructure to deploy experiments to their mass user base and to rapidly deploy a prototype at scale.

Note that, considering the scale of Google’s user base, redesigning as they scale up is not a viable option. They instead do experiments with smaller groups of users.

David suggested that we, as data scientists, consider how to move into an experimental design so as to move to a causal claim between variables rather than a descriptive relationship. In other words, to move from the descriptive to the predictive.

As an example, he talked about the genesis of the “circle of friends” feature of Google+. They know people want to selectively share; they’ll send pictures to their family, whereas they’d probably be more likely to send inside jokes to their friends. They came up with the idea of circles, but it wasn’t clear if people would use them. How do they answer the question: will they use circles to organize their social network? It’s important to know what motivates them when they decide to share.

They took a mixed-method approach, so they used multiple methods to triangulate on findings and insights. Given a random sample of 100,000 users, they set out to determine the popular names and categories of names given to circles. They identified 168 active users who filled out surveys and they had longer interviews with 12.

They found that the majority were engaging in selective sharing, that most people used circles, that the circle names were most often work-related or school-related, and that they had elements of a strong-link (“epic bros”) or a weak-link (“acquaintances from PTA”).

They asked the survey participants why they share content. The answers primarily came in three categories: first, the desire to share about oneself – personal experiences, opinions, etc. Second, discourse: people wanna participate in a conversation. Third, evangelism: people wanna spread information.

Next they asked participants why they choose their audiences. Again, three categories: first, privacy – many people were public or private by default. Second, relevance – they wanted to share only with those who may be interested, and they don’t wanna pollute other people’s data stream. Third, distribution – some people just want to maximize their potential audience.

The takeaway from this study was this: people do enjoy selectively sharing content, depending on context, and the audience. So we have to think about designing features for the product around content, context, and audience.

Network Analysis

We can use large data and look at connections between actors like a graph. For Google+, the users are the nodes and the edges (directed) are “in the same circle”.

Other examples of networks:

- nodes are users in Second Life, interactions between users are possible in three different ways, corresponding to three different kinds of edges

- nodes are websites, edges are links

- nodes are theorems, directed edges are dependencies

After you define and draw a network, you can hopefully learn stuff by looking at it or analyzing it.
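As a tiny sketch of “define a network, then analyze it”, here is a directed graph stored as an adjacency dictionary. The circle data is invented, and I’m assuming an edge points from the circle’s owner to each person they put in a circle, which is only one way to read “in the same circle”.

```python
from collections import defaultdict

# Hypothetical data: owner -> circle name -> members of that circle.
circles = {
    "alice": {"family": ["bob"], "work": ["carol", "dave"]},
    "bob": {"friends": ["alice", "carol"]},
}

# Directed edge owner -> member whenever the owner put the member in any circle.
edges = defaultdict(set)
for owner, owned_circles in circles.items():
    for members in owned_circles.values():
        edges[owner].update(members)

# A first thing to learn from the graph: who gets added to circles the most?
in_degree = defaultdict(int)
for owner, targets in edges.items():
    for target in targets:
        in_degree[target] += 1

print(dict(in_degree))
```

From here you can ask the usual graph questions (degree, reciprocity, clusters) without touching the raw logs again.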

Social at Google

As you may have noticed, “social” is a layer across all of Google. Search now incorporates this layer: if you search for something you might see that your friend “+1”’ed it. This is called a social annotation. It turns out that people care more about annotation when it comes from someone with domain expertise rather than someone you’re very close to. So you might care more about the opinion of a wine expert at work than the opinion of your mom when it comes to purchasing wine.

Note that sounds obvious but if you started the other way around, asking who you’d trust, you might start with your mom. In other words, “close ties,” even if you can determine those, are not the best feature to rank annotations. But that begs the question, what is? Typically in a situation like this we use click-through rate, or how long it takes to click.

In general we need to always keep in mind a quantitative metric of success. This defines success for us, so we have to be careful.

Privacy

Human facing technology has thorny issues of privacy which makes stuff hard. We took a survey of how people felt uneasy about content. We asked, how does it affect your engagement? What is the nature of your privacy concerns?

Turns out there’s a strong correlation between privacy concern and low engagement, which isn’t surprising. It’s also related to how well you understand what information is being shared, and the question of when you post something, where does it go and how much control do you have over it. When you are confronted with a huge pile of complicated settings, you tend to start feeling passive.

Again, we took a survey and found broad categories of concern as follows:

identity theft

- financial loss

digital world

- access to personal data

- really private stuff I searched on

- unwanted spam

- provocative photo (oh shit my boss saw that)

- unwanted solicitation

- unwanted ad targeting

physical world

- offline threats

- harm to my family

- stalkers

- employment risks

- hassle

What is the best way to decrease concern and increase understanding and control?

Possibilities:

- Write and post a manifesto of your data policy (tried that, nobody likes to read manifestos)

- Educate users on our policies a la the Netflix feature “because you liked this, we think you might like this”

- Get rid of all stored data after a year

Rephrase: how do we design settings to make it easier for people? How do you make it transparent?

- make a picture or graph of where data is going.

- give people a privacy switchboard

- give people access to quick settings

- make the settings you show them categorized by things you don’t have a choice about vs. things you do

- make reasonable default settings so people don’t have to worry about it.

David left us with these words of wisdom: as you move forward and have access to big data, you really should complement them with qualitative approaches. Use mixed methods to come to a better understanding of what’s going on. Qualitative surveys can really help.

Suresh Naidu: analyzing the language of political partisanship

I was lucky enough to attend Suresh Naidu’s lecture last night on his recent work analyzing congressional speeches with co-authors Jacob Jensen, Ethan Kaplan, and Laurence Wilse-Samson.

Namely, along with his co-authors, he found popular three-word phrases, measured and ranked their partisanship (by how often a democrat uttered the phrase versus a republican), and measured the extent to which those phrases were being used in the public discussion before congress started using them or after congress started using them.

Note this means that phrases that were uttered often by both parties were ignored. Only phrases that were uttered more by one party than the other, like “free market system”, were counted. Also, the words were reduced to their stems and small common words were ignored, so the phrase “united states of america” was reduced to “unite.state.america”. So if parties were talking about the same issue but insisted on using certain phrases (“death tax” for example), then it would show up. This certainly jibes with my sense of how partisanship is established by politicians, and for the sake of the paper it can be taken to be the definition.
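To make the pipeline concrete, here’s a rough sketch in Python. It is not the paper’s method: the stopword list is a tiny made-up one, stemming is skipped entirely (so you get “united.states.america” rather than “unite.state.america”), and the score `(d - r) / (d + r)` is my own stand-in for whatever partisanship measure the authors actually used.

```python
from collections import Counter

# A tiny, made-up stopword list; a real one would be much longer.
STOPWORDS = {"of", "the", "a", "an", "and", "to", "in"}


def trigrams(speech):
    """Drop small common words, then join each run of three words with dots."""
    words = [w for w in speech.lower().split() if w not in STOPWORDS]
    return [".".join(words[i:i + 3]) for i in range(len(words) - 2)]


def partisanship(dem_speeches, rep_speeches):
    """Score each phrase from -1 (only Republicans say it) to +1 (only Democrats)."""
    dem = Counter(p for s in dem_speeches for p in trigrams(s))
    rep = Counter(p for s in rep_speeches for p in trigrams(s))
    scores = {}
    for phrase in set(dem) | set(rep):
        d, r = dem[phrase], rep[phrase]
        scores[phrase] = (d - r) / (d + r)
    return scores


# Invented one-line "speeches", purely for illustration.
dem_speeches = ["the united states of america", "a living wage law"]
rep_speeches = ["the united states of america", "repeal the death tax now"]

scores = partisanship(dem_speeches, rep_speeches)
for phrase, score in sorted(scores.items(), key=lambda kv: kv[1]):
    print(f"{score:+.1f}  {phrase}")
```

A phrase used equally by both sides scores zero and drops out of any ranking by absolute score, which matches the idea that commonly shared phrases are ignored.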

The first data set he used was a digitized version of all of the speeches from the House since the end of the Civil War, which was also the beginning of the “two-party” system as we know it. Third party politicians were ignored. The proxy for “the public discussion” was taken from Google Book N-grams. It consists of books that were published in English in a given year.

Some of the conclusions that I can remember are as follows:

- The three-word phrases themselves are a super interesting data set; their prevalence, how they move from one side of the aisle to the other over time, and what they discuss (so for example, they don’t discuss international issues that much – which doesn’t mean the politicians don’t discuss international issues, but that it’s not a particularly partisan issue or at least their language around this issue is similar).

- When the issue is economic and highly partisan, it tends to show up “in the public” via Google Books before it shows up in Congress. Which is to say, there’s been a new book written by some economist, presumably, who introduces language into the public discussion that later gets picked up by Congress.

- When the issue is non-economic or only somewhat partisan, it tends to show up in Congress before or at the same time as in the public domain. Members of Congress seem to feel comfortable making up their own phrases and repeating them in such circumstances.

So the cult of the economic expert has been around for a while now.

Suresh and his crew also made an overall measurement of the partisanship of a given 2-year session of congress. It was interesting to discuss how this changed over time, and how having large partisanship, in terms of language, did not necessarily correlate with having stalemate congresses. Indeed if I remember correctly, a moment of particularly high partisanship, as defined above via language, was during the time the New Deal was passed.

Also, as we discussed (it was a lively audience), language may be a marker of partisan identity without necessarily pointing to underlying ideological differences. For example, the phrase “Martin Luther King” has been ranked high as a partisan democratic phrase since the civil rights movement but then again it’s customary (I’ve been told) for democrats to commemorate MLK’s birthday, but not for republicans to do so.

Given their speech, this analysis did a good job identifying which party a politician belonged to, but the analysis was not causal in the sense of time: we needed to know the top partisan phrases of that session of Congress to be able to predict the party of a given politician. Indeed the “top phrases” changed so quickly that the predictive power may be mostly lost between sessions.

Not that this is a big deal, since of course we know what party a politician is from, but it would be interesting to use this as a measure of how radical or centered a given politician is or will be.

Even if you aren’t interested in the above results and discussion, the methodology is very cool. Suresh and his co-authors view text as its own data set and analyze it as such.

And after all, the words historical politicians spoke are what we have on record; we can’t look into their brains and see what they were thinking. It’s of course interesting and important to have historians (domain experts) inform the process as well, e.g. for the “Martin Luther King” phrase above, but barring expert knowledge this is lots better than nothing. One thing it tells us, just in case we didn’t study political history, is that we’ve seen way worse partisanship in the past than we see now, although things have consistently been getting worse since the 1980’s.

Here’s a wordcloud from the 2007 session; blue and red are what you think, and bigger means more partisan:

Columbia Data Science course, week 5: GetGlue, time series, financial modeling, advanced regression, and ethics

I was happy to be giving Rachel Schutt’s Columbia Data Science course this week, where I discussed time series, financial modeling, and ethics. I blogged previous classes here.

The first few minutes of class were for a case study with GetGlue, a New York-based start-up that won the mashable breakthrough start-up of the year in 2011 and is backed by some of the VCs that also fund big names like Tumblr, etsy, foursquare, etc. GetGlue is part of the social TV space. Lead Scientist, Kyle Teague, came to tell the class a little bit about GetGlue, and some of what he worked on there. He also came to announce that GetGlue was giving the class access to a fairly large data set of user check-ins to tv shows and movies. Kyle’s background is in electrical engineering, he placed in the 2011 KDD cup (which we learned about last week from Brian), and he started programming when he was a kid.

GetGlue’s goal is to address the problem of content discovery within the movie and tv space, primarily. The usual model for finding out what’s on TV is the 1950’s TV Guide schedule, and that’s still how we’re supposed to find things to watch. There are thousands of channels and it’s getting increasingly difficult to find out what’s good on. GetGlue wants to change this model, by giving people personalized TV recommendations and personalized guides. There are other ways GetGlue uses Data Science but for the most part we focused on how the recommendation system works. Users “check-in” to tv shows, which means they can tell people they’re watching a show. This creates a time-stamped data point. They can also do other actions such as like, or comment on the show. So this is a 3-tuple: {user, action, object} where the object is a tv show or movie. This induces a bi-partite graph. A bi-partite graph or network contains two types of nodes: users and tv shows. Edges exist between users and tv shows, but not between users and users or tv shows and tv shows. So Bob and Mad Men are connected because Bob likes Mad Men, and Sarah is connected to both Mad Men and Lost because Sarah liked Mad Men and Lost. But Bob and Sarah aren’t connected, nor are Mad Men and Lost. A lot can be learned from this graph alone.
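Here’s the Bob and Sarah example as a sketch in Python. The tuples are the toy ones from above, and `co_watched` is a made-up helper showing one thing you can read off the bi-partite graph; it is not GetGlue’s actual recommender.

```python
from collections import defaultdict

# (user, action, object) tuples for the toy example.
events = [
    ("Bob", "like", "Mad Men"),
    ("Sarah", "like", "Mad Men"),
    ("Sarah", "like", "Lost"),
]

# The bi-partite graph: edges only ever join a user to a show.
shows_by_user = defaultdict(set)
users_by_show = defaultdict(set)
for user, action, show in events:
    shows_by_user[user].add(show)
    users_by_show[show].add(user)


def co_watched(show):
    """Shows two hops away: liked by someone who also liked the given show."""
    related = set()
    for user in users_by_show[show]:
        related |= shows_by_user[user]
    related.discard(show)
    return related


print(co_watched("Mad Men"))
```

Bob never touched Lost, but it is two hops away from him through Mad Men and Sarah, which is the kind of thing “a lot can be learned from this graph alone” is getting at.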

But GetGlue finds ways to create edges between users and between objects (tv shows, or movies.) Users can follow each other or be friends on GetGlue, and also GetGlue can learn that two people are similar[do they do this?]. GetGlue also hires human evaluators to make connections or directional edges between objects. So True Blood and Buffy the Vampire Slayer might be similar for some reason and so the humans create an edge in the graph between them. There were nuances around the edge being directional. They may draw an arrow pointing from Buffy to True Blood but not vice versa, for example, so their notion of “similar” or “close” captures both content and popularity. (That’s a made-up example.) Pandora does something like this too.

Another important aspect is time. The user checked-in or liked a show at a specific time, so the tuple extends to a 4-tuple with a time-stamp: {user,action,object,timestamp}. This is essentially the data set the class has access to, although it’s slightly more complicated and messy than that. Their first assignment with this data will be to explore it, try to characterize it and understand it, gain intuition around it and visualize what they find.
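To make the graph structure concrete, here is a minimal sketch of turning {user, action, object, timestamp} records into a bi-partite graph. The records, field order and function name are made up for illustration; they are not GetGlue's actual schema.

```python
from collections import defaultdict

# Hypothetical records in the {user, action, object, timestamp} shape described above.
events = [
    ("bob", "like", "Mad Men", "2012-10-01T20:00:00"),
    ("sarah", "like", "Mad Men", "2012-10-01T21:00:00"),
    ("sarah", "checkin", "Lost", "2012-10-02T19:30:00"),
]

def build_bipartite(events):
    """Return (user -> set of shows, show -> set of users)."""
    shows_by_user = defaultdict(set)
    users_by_show = defaultdict(set)
    for user, action, obj, timestamp in events:
        shows_by_user[user].add(obj)
        users_by_show[obj].add(user)
    return shows_by_user, users_by_show

shows_by_user, users_by_show = build_bipartite(events)

# Users who share at least one show with Bob (a two-step walk on the graph).
bob_neighbors = set().union(*(users_by_show[s] for s in shows_by_user["bob"])) - {"bob"}
```

There are no user-user or show-show edges stored here; any such relationship has to be derived by walking through the other type of node, which is what the last line does.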

Students in the class asked him questions around topics of the value of formal education in becoming a data scientist (do you need one? Kyle’s time spent doing signal processing in research labs was valuable, but so was his time spent coding for fun as a kid), what would be messy about a data set, why would the data set be messy (often bugs in the code), how would they know? (their QA and values that don’t make sense), what language does he use to prototype algorithms (python), how does he know his algorithm is good.

Then it was my turn. I started out with my data scientist profile:

As you can see, I feel like I have the most weakness in CS. Although I can use python pretty proficiently, and in particular I can scrape and parse data, prototype models, and use matplotlib to draw pretty pictures, I am no java map-reducer and I bow down to those people who are. I am also completely untrained in data visualization but I know enough to get by and give presentations that people understand.

Thought Experiment

I asked the students the following question:

What do you lose when you think of your training set as a big pile of data and ignore the timestamps?

They had some pretty insightful comments. One thing they mentioned off the bat is that you won’t know cause and effect if you don’t have any sense of time. Of course that’s true but it’s not quite what I meant, so I amended the question to allow you to collect relative time differentials, so “time since user last logged in” or “time since last click” or “time since last insulin injection”, but not absolute timestamps.

What I was getting at, and what they came up with, was that when you ignore the passage of time through your data, you ignore trends altogether, as well as seasonality. So for the insulin example, you might note that 15 minutes after your insulin injection your blood sugar goes down consistently, but you might not notice an overall trend of your rising blood sugar over the past few months if your dataset for the past few months has no absolute timestamp on it.

This idea, of keeping track of trends and seasonalities, is very important in financial data, and essential to keep track of if you want to make money, considering how small the signals are.

How to avoid overfitting when you model with time series

After discussing seasonality and trends in the various financial markets, we started talking about how to avoid overfitting your model.

Specifically, I started out with having a strict concept of in-sample (IS) and out-of-sample (OOS) data. Note the OOS data is not meant as testing data- that all happens inside IS data. It’s meant to be the data you use after finalizing your model so that you have some idea how the model will perform in production.

Next, I discussed the concept of causal modeling. Namely, we should never use information in the future to predict something now. Similarly, when we have a set of training data, we don’t know the “best fit coefficients” for that training data until after the last timestamp on all the data. As we move forward in time from the first timestamp to the last, we expect to get different sets of coefficients as more events happen.

One consequence of this is that, instead of getting one set of coefficients, we actually get an evolution of each coefficient. This is helpful because it gives us a sense of how stable those coefficients are. In particular, if one coefficient has changed sign 10 times over the training set, then we expect a good estimate for it is zero, not the so-called “best fit” at the end of the data.
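Here is a rough sketch of what "an evolution of each coefficient" could look like in code, assuming a univariate regression refit on an expanding window. The data is simulated and the function name and `min_obs` parameter are illustrative choices, not a prescribed method.

```python
import numpy as np

def coefficient_path(x, y, min_obs=20):
    """Refit y ~ a + b*x on the first t points for each t, so each fit uses only the past."""
    path = []
    for t in range(min_obs, len(x) + 1):
        X = np.column_stack([np.ones(t), x[:t]])
        coef, *_ = np.linalg.lstsq(X, y[:t], rcond=None)
        path.append(coef[1])          # keep the slope estimated as of time t
    return np.array(path)

rng = np.random.default_rng(0)
x = rng.normal(size=300)
y = 0.03 * x + rng.normal(size=300)   # weak signal, lots of noise (made-up data)

slopes = coefficient_path(x, y)
# How many times the running slope estimate flipped sign along the way.
sign_changes = int(np.sum(np.diff(np.sign(slopes)) != 0))
```

Only the last entry of `slopes` is the usual "best fit" on the full training set; everything before it is what you would have believed at the time.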

One last word on causal modeling and IS/OOS. It is consistent with production code. Namely, you are always acting, in the training and in the OOS simulation, as if you’re running your model in production and you’re seeing how it performs. Of course you fit your model in sample, so you expect it to perform better there than in production.

Another way to say this is that, once you have a model in production, you will have to make decisions about the future based only on what you know now (so it’s causal) and you will want to update your model whenever you gather new data. So your coefficients of your model are living organisms that continuously evolve.

Submodels of Models

We often “prepare” the data before putting it into a model. Typically the way we prepare it has to do with the mean or the variance of the data, or sometimes the log (and then the mean or the variance of that transformed data).

But to be consistent with the causal nature of our modeling, we need to make sure our running estimates of mean and variance are also causal. Once we have causal estimates of our mean $\overline{y}$ and variance $\sigma_y^2$, we can normalize the next data point with these estimates just like we do to get from a gaussian distribution to the normal gaussian distribution:

$y \mapsto \frac{y - \overline{y}}{\sigma_y}$

Of course we may have other things to keep track of as well to prepare our data, and we might run other submodels of our model. For example we may choose to consider only the “new” part of something, which is equivalent to trying to predict something like $y_t - y_{t-1}$ instead of $y_t$.

Or we may train a submodel to figure out what part of $y_{t-1}$ predicts $y_t$, so a submodel which is a univariate regression or something.

There are lots of choices here, but the point is it’s all causal, so you have to be careful when you train your overall model how to introduce your next data point and make sure the steps are all in order of time, and that you’re never ever cheating and looking ahead in time at data that hasn’t happened yet.
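As a minimal sketch of causal preparation, the function below normalizes each data point using a mean and standard deviation computed only from strictly earlier points. The name `causal_zscores` and the `warmup` parameter are assumptions for illustration; an exponentially downweighted estimate would work the same way.

```python
import numpy as np

def causal_zscores(y, warmup=10):
    """Normalize each point using the mean/std of strictly earlier points only."""
    y = np.asarray(y, dtype=float)
    z = np.full(len(y), np.nan)          # no estimate is available during warmup
    for t in range(warmup, len(y)):
        past = y[:t]                     # excludes y[t] itself
        sd = past.std(ddof=1)
        if sd > 0:
            z[t] = (y[t] - past.mean()) / sd
    return z

y = np.arange(20.0)
z = causal_zscores(y)

# Changing the future must not change anything we computed earlier.
y_changed = y.copy()
y_changed[15:] = 100.0
z_changed = causal_zscores(y_changed)
```

The check at the end is a cheap way to catch look-ahead bugs: if rewriting data after time t changes your output at or before time t, something is peeking.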

Financial time series

In finance we consider returns, say daily. And it’s not percent returns, actually it’s log returns: if $F_t$ denotes a close on day $t$, then the return that day is defined as $\log(F_t / F_{t-1})$.

See more about this here.
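A quick sketch of the difference between percent returns and log returns, using made-up closing prices. The log return for a day is the log of the ratio of that day's close to the previous day's close.

```python
import numpy as np

closes = np.array([100.0, 102.0, 99.0, 99.0])   # made-up closing prices

log_returns = np.log(closes[1:] / closes[:-1])
pct_returns = closes[1:] / closes[:-1] - 1.0

# Log returns add across days: their sum is the log of the total price ratio.
total = log_returns.sum()
```

For small moves the two kinds of return are nearly equal, but only the log returns sum up cleanly over time.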

So if you start with S&P closing levels:

Then you get the following log returns:

What’s that mess? It’s crazy volatility caused by the financial crisis. We sometimes (not always) want to account for that volatility by normalizing with respect to it (described above). Once we do that we get something like this:

Which is clearly better behaved. Note this process is discussed in this post.

We could also normalize with respect to the mean, but we typically assume the mean of daily returns is 0, so as to not bias our models on short term trends.

Financial Modeling

One thing we need to understand about financial modeling is that there’s a feedback loop. If you find a way to make money, it eventually goes away- sometimes people refer to this as the fact that the “market learns over time”.

One way to see this is that, in the end, your model comes down to knowing some price is going to go up in the future, so you buy it before it goes up, you wait, and then you sell it at a profit. But if you think about it, your buying it has actually changed the process, and decreased the signal you were anticipating. That’s how the market learns – it’s a combination of a bunch of algorithms anticipating things and making them go away.

The consequence of this learning over time is that the existing signals are very weak. We are happy with a 3% correlation for models that have a horizon of 1 day (a “horizon” for your model is how long you expect your prediction to be good). This means not much signal, and lots of noise! In particular, lots of the machine learning “metrics of success” for models, such as measurements of precision or accuracy, are not very relevant in this context.

So instead of measuring accuracy, we generally draw a picture to assess models, namely of the (cumulative) PnL of the model. This generalizes to any model as well- you plot the cumulative sum of the product of demeaned forecast and demeaned realized. In other words, you see if your model consistently does better than the “stupidest” model of assuming everything is average.

If you plot this and you drift up and to the right, you’re good. If it’s too jaggedy, that means your model is taking big bets and isn’t stable.
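The cumulative PnL picture can be sketched in a few lines. This version demeans with the full-sample mean for simplicity, which is an assumption of the sketch (a strictly causal version would use running means); the data is simulated.

```python
import numpy as np

def cumulative_pnl(forecast, realized):
    """Cumulative sum of (demeaned forecast) * (demeaned realized)."""
    f = np.asarray(forecast, dtype=float)
    r = np.asarray(realized, dtype=float)
    return np.cumsum((f - f.mean()) * (r - r.mean()))

rng = np.random.default_rng(0)
forecast = rng.normal(size=1000)
realized = 0.03 * forecast + rng.normal(size=1000)   # made-up weak signal
pnl = cumulative_pnl(forecast, realized)
```

Passing `pnl` to something like matplotlib's `plot` gives the curve you want to see drifting up and to the right.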

Why regression?

From above we know the signal is weak. If you imagine there’s some complicated underlying relationship between your information and the thing you’re trying to predict, get over knowing what that is – there’s too much noise to find it. Instead, think of the function as possibly complicated, but continuous, and imagine you’ve written it out as a Taylor Series. Then you can’t possibly expect to get your hands on anything but the linear terms.

Don’t think about using logistic regression, either, because you’d need to be ignoring size, which matters in finance- it matters if a stock went up 2% instead of 0.01%. But logistic regression forces you to have an on/off switch, which would be possible but would lose a lot of information. Considering the fact that we are always in a low-information environment, this is a bad idea.

Note that although I’m claiming you probably want to use linear regression in a noisy environment, the actual terms themselves don’t have to be linear in the information you have. You can always take products of various terms as x’s in your regression, but you’re still fitting a linear model in non-linear terms.

Advanced regression

The first thing I need to explain is the exponential downweighting of old data, which I already used in a graph above, where I normalized returns by volatility with a decay of 0.97. How do I do this?

Working from this post again, the formula is given by essentially a weighted version of the normal one, where I weight recent data more than older data, and where the weight of older data is a power of some parameter $s$ which is called the decay. The exponent is the number of time intervals since that data was new. Putting that together, and writing $r_i$ for the return from $i$ periods ago, the formula we get is:

$V = (1-s) \cdot \sum_{i \geq 0} r_i^2 s^i$

We are actually dividing by the sum of the weights, but the weights are powers of some number $s$, so it’s a geometric sum and the sum is given by $\sum_{i \geq 0} s^i = \frac{1}{1-s}$.

One cool consequence of this formula is that it’s easy to update: if we have a new return $r_{new}$ to add to the series, then it’s not hard to show we just want

$V_{new} = s \cdot V_{old} + (1-s) \cdot r_{new}^2$

In fact this is the general rule for updating exponential downweighted estimates, and it’s one reason we like them so much- you only need to keep in memory your last estimate and the number $s$.

How do you choose your decay length? This is an art instead of a science, and depends on the domain you’re in. Think about how many days (or time periods) it takes to weight a data point at half of a new data point, and compare that to how fast the market forgets stuff.
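Here is a small sketch of the exponentially downweighted variance estimate, its one-step update, and the half-life implied by a decay. It assumes the mean return is zero and that weights are powers of the decay `s`; the function names are illustrative.

```python
import math

def ew_variance_update(v_old, new_return, s):
    """One update step: keep a fraction s of the old estimate, add the new squared return."""
    return s * v_old + (1.0 - s) * new_return ** 2

def ew_variance_direct(returns_newest_first, s):
    """Weighted sum (1 - s) * sum_i s**i * r_i**2, with i = 0 the newest return."""
    return (1.0 - s) * sum(s ** i * r ** 2 for i, r in enumerate(returns_newest_first))

def half_life(s):
    """Number of periods until a data point's weight falls to one half."""
    return math.log(0.5) / math.log(s)

s = 0.97
returns = [0.01, -0.02, 0.005]            # made-up returns, newest first
v_direct = ew_variance_direct(returns, s)
v_updated = ew_variance_update(ew_variance_direct(returns[1:], s), returns[0], s)
```

With a decay of 0.97 the half-life comes out to a bit under 23 periods, which is the number to compare against how fast you think the market forgets.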

This downweighting of old data is an example of inserting a prior into your model, where here the prior is “new data is more important than old data”. What are other kinds of priors you can have?

Priors

Priors can be thought of as opinions like the above. Besides “new data is more important than old data,” we may decide our prior is “coefficients vary smoothly.” This is relevant when we decide, say, to use a bunch of old values of some time series to help predict the next one, giving us a model like:

$F_t = \alpha_0 + \alpha_1 F_{t-1} + \alpha_2 F_{t-2} + \epsilon$

which is just the example where we take the last two values of the time series $F$ to predict the next one. But we could use more than two values, of course.

[Aside: in order to decide how many values to use, you might want to draw an autocorrelation plot for your data.]

The way you’d place the prior about the relationship between coefficients (in this case consecutive lagged data points) is by adding a matrix to your covariance matrix when you perform linear regression. See more about this here.
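One way this could look in code, as a sketch only: penalize differences between adjacent lag coefficients, which amounts to adding a matrix to $X^T X$ before solving. The specific penalty matrix (`D.T @ D` built from first differences), the strength `lam`, and the omission of an intercept are all assumptions made for this example, not necessarily the construction in the linked post.

```python
import numpy as np

def smooth_prior_regression(X, y, lam):
    """Solve (X'X + lam * D'D) b = X'y, where D takes differences of adjacent coefficients."""
    k = X.shape[1]
    D = np.diff(np.eye(k), axis=0)        # (k-1) x k first-difference matrix
    return np.linalg.solve(X.T @ X + lam * (D.T @ D), X.T @ y)

rng = np.random.default_rng(1)
F = rng.normal(size=500)                  # made-up time series
lags = 5
# Row for time t holds F[t-1], ..., F[t-lags]; the target is F[t].
X = np.column_stack([F[lags - j - 1 : len(F) - j - 1] for j in range(lags)])
y = F[lags:]

b_ols = smooth_prior_regression(X, y, 0.0)       # no prior: ordinary least squares
b_smooth = smooth_prior_regression(X, y, 1e6)    # strong prior: nearly equal coefficients
```

Turning `lam` up pulls neighboring coefficients toward each other; setting it to zero recovers the plain regression.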

Ethics

I then talked about modeling and ethics. My goal is to get this next-gen group of data scientists sensitized to the fact that they are not just nerds sitting in the corner but have increasingly important ethical questions to consider while they work.

People tend to overfit their models. It’s human nature to want your baby to be awesome. They also underestimate the bad news and blame other people for bad news, because nothing their baby has done or is capable of is bad, unless someone else made them do it. Keep these things in mind.

I then described what I call the deathspiral of modeling, a term I coined in this post on creepy model watching.

I counseled the students to

- try to maintain skepticism about their models and how their models might get used,

- shoot holes in their own ideas,

- accept challenges and devise tests as scientists rather than defending their models using words – if someone thinks they can do better, then let them try, and agree on an evaluation method beforehand,

- in general, try to consider the consequences of their models.

I then showed them Emanuel Derman’s Hippocratic Oath of Modeling, which was made for financial modeling but fits perfectly into this framework. I discussed the politics of working in industry, namely that even if they are skeptical of their model there’s always the chance that it will be used the wrong way in spite of the modeler’s warnings. So the Hippocratic Oath is, unfortunately, insufficient in reality (but it’s a good start!).

Finally, there are ways to do good: I mentioned stuff like DataKind. There are also ways to be transparent: I mentioned Open Models, which is so far just an idea, but Victoria Stodden is working on RunMyCode, which is similar and very awesome.

What is a model?

I’ve been thinking a lot recently about mathematical models and how to explain them to people who aren’t mathematicians or statisticians. I consider this increasingly important as more and more models are controlling our lives, such as:

- employment models, which help large employers screen through applications,

- political ad models, which allow political groups to personalize their ads,

- credit scoring models, which allow consumer product companies and loan companies to screen applicants, and,

- if you’re a teacher, the Value-Added Model.

- See more models here and here.

It’s a big job, to explain these, because the truth is they are complicated – sometimes overly so, sometimes by construction.

The truth is, though, you don’t really need to be a mathematician to know what a model is, because everyone uses internal models all the time to make decisions.

For example, you intuitively model everyone’s appetite when you cook a meal for your family. You know that one person loves chicken (but hates hamburgers), while someone else will only eat the pasta (with extra cheese). You even take into account that people’s appetites vary from day to day, so you can’t be totally precise in preparing something – there’s a standard error involved.

To explain modeling at this level, then, you just need to imagine that you’ve built a machine that knows all the facts that you do and knows how to assemble them together to make a meal that will approximately feed your family. If you think about it, you’ll realize that you know a shit ton of information about the likes and dislikes of all of your family members, because you have so many memories of them grabbing seconds of the asparagus or avoiding the string beans.

In other words, it would be actually incredibly hard to give a machine enough information about all the food preferences for all your family members, and yourself, along with the constraints of having not too much junky food, but making sure everyone had something they liked, etc. etc.

So what would you do instead? You’d probably give the machine broad categories of likes and dislikes: this one likes meat, this one likes bread and pasta, this one always drinks lots of milk and puts nutella on everything in sight. You’d dumb it down for the sake of time, in other words. The end product, the meal, may not be perfect but it’s better than no guidance at all.

That’s getting closer to what real-world modeling for people is like. And the conclusion is right too- you aren’t expecting your model to do a perfect job, because you only have a broad outline of the true underlying facts of the situation.

Plus, when you’re modeling people, you have to a priori choose the questions to ask, which will probably come in the form of “does he/she like meat?” instead of “does he/she put nutella on everything in sight?”; in other words, the important but idiosyncratic rules won’t even be seen by a generic one-size-fits-everything model.

Finally, those generic models are hugely scaled- sometimes there’s really only one out there, being used everywhere, and its flaws are compounded that many times over because of its reach.

So, say you’ve got a CV with a spelling error. You’re trying to get a job, but the software that screens for applicants automatically rejects you because of this spelling error. Moreover, the same screening model is used everywhere, and you therefore don’t get any interviews because of this one spelling error, in spite of the fact that you’re otherwise qualified.

I’m not saying this would happen – I don’t know how those models actually work, although I do expect points against you for spelling errors. My point is there’s some real danger in using such models on a very large scale that we know are simplified versions of reality.

One last thing. The model fails in the example above, because the qualified person doesn’t get a job. But it fails invisibly; nobody knows exactly how it failed or even that it failed. Moreover, it only really fails for the applicant who doesn’t get any interviews. For the employer, as long as some qualified applicants survive the model, they don’t see failure at all.

Columbia Data Science course, week 4: K-means, Classifiers, Logistic Regression, Evaluation

This week our guest lecturer for the Columbia Data Science class was Brian Dalessandro. Brian works at Media6Degrees as a VP of Data Science, and he’s super active in the research community. He’s also served as co-chair of the KDD competition.

Before Brian started, Rachel threw us a couple of delicious data science tidbits.

The Process of Data Science

First we have the Real World. Inside the Real World we have:

- Users using Google+

- People competing in the Olympics

- Spammers sending email

From this we draw raw data, e.g. logs, all the olympics records, or Enron employee emails. We want to process this to make it clean for analysis. We use pipelines of data munging, joining, scraping, wrangling or whatever you want to call it and we use tools such as:

- python

- shell scripts

- R

- SQL

We eventually get the data down to a nice format, say something with columns:

name | event | year | gender | event time

Note: this is where you typically start in a standard statistics class. But it’s not where we typically start in the real world.

Once you have this clean data set, you should be doing some kind of exploratory data analysis (EDA); if you don’t really know what I’m talking about then look at Rachel’s recent blog post on the subject. You may realize that it isn’t actually clean.

Next, you decide to apply some algorithm you learned somewhere:

- k-nearest neighbor

- regression

- Naive Bayes

- (something else),

depending on the type of problem you’re trying to solve:

- classification

- prediction

- description

You then:

- interpret

- visualize

- report

- communicate

At the end you have a “data product”, e.g. a spam classifier.

K-means

So far we’ve only seen supervised learning. K-means is the first unsupervised learning technique we’ll look into. Say you have data at the user level:

- G+ data

- survey data

- medical data

- SAT scores

Assume each row of your data set corresponds to a person, say each row corresponds to information about the user as follows:

age | gender | income | Geo=state | household size

Your goal is to segment them, otherwise known as stratify, or group, or cluster. Why? For example:

- you might want to give different users different experiences. Marketing often does this.

- you might have a model that works better for specific groups

- hierarchical modeling in statistics does something like this.

One possibility is to choose the groups yourself. Bucket users using homemade thresholds. Like by age, 20-24, 25-30, etc. or by income. In fact, say you did this, by age, gender, state, income, marital status. You may have 10 age buckets, 2 gender buckets, and so on, which would result in 10x2x50x10x3 = 30,000 possible bins, which is big.

You can picture a five dimensional space with buckets along each axis, and each user would then live in one of those 30,000 five-dimensional cells. You wouldn’t want 30,000 marketing campaigns so you’d have to bin the bins somewhat.

Wait, what if you want to use an algorithm instead where you could decide on the number of bins? K-means is a “clustering algorithm”, and k is the number of groups. You pick k, a hyper parameter.

2-d version

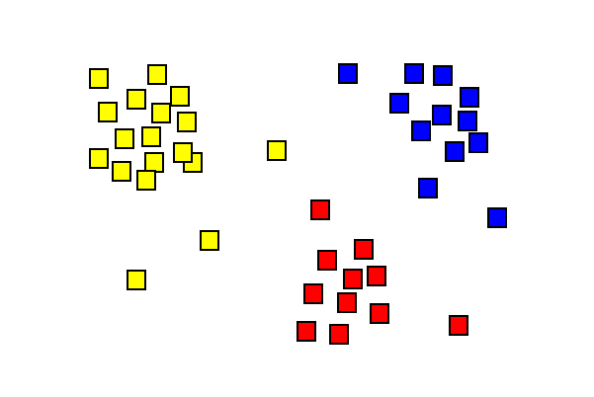

Say you have users with #clicks, #impressions (or age and income – anything with just two numerical parameters). Then k-means looks for clusters on the 2-d plane. Here’s a stolen and simplistic picture that illustrates what this might look like:

The general algorithm is just the same picture but generalized to d dimensions, where d is the number of features for each data point.

Here’s the actual algorithm:

- randomly pick K centroids

- assign data to closest centroid.

- move each centroid to the average location of the users assigned to it

- repeat until the assignments don’t change

It’s up to you to interpret if there’s a natural way to describe these groups.
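The four steps above can be sketched directly in numpy. Two choices here are assumptions of the sketch rather than part of the algorithm as stated: the initial centroids are drawn from the data points themselves, and there is a `max_iter` cap so a looping configuration can't run forever.

```python
import numpy as np

def kmeans(points, k, max_iter=100, seed=0):
    rng = np.random.default_rng(seed)
    # Step 1: pick k distinct data points as the initial centroids.
    centroids = points[rng.choice(len(points), size=k, replace=False)].copy()
    labels = None
    for _ in range(max_iter):
        # Step 2: assign each point to its closest centroid.
        dists = np.linalg.norm(points[:, None, :] - centroids[None, :, :], axis=2)
        new_labels = dists.argmin(axis=1)
        # Step 4: stop once the assignments no longer change.
        if labels is not None and np.array_equal(new_labels, labels):
            break
        labels = new_labels
        # Step 3: move each centroid to the mean of its assigned points.
        for j in range(k):
            members = points[labels == j]
            if len(members) > 0:          # leave an empty cluster's centroid where it is
                centroids[j] = members.mean(axis=0)
    return centroids, labels

rng = np.random.default_rng(42)
blob_a = rng.normal(loc=[0.0, 0.0], scale=0.5, size=(50, 2))     # made-up users
blob_b = rng.normal(loc=[10.0, 10.0], scale=0.5, size=(50, 2))
points = np.vstack([blob_a, blob_b])
centroids, labels = kmeans(points, k=2)
```

On two well-separated blobs like these the algorithm recovers the obvious grouping; on real user data the clusters are rarely this clean, and different seeds can give different answers.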

This is unsupervised learning and it has issues:

- choosing an optimal k is also a problem, although we know $1 \leq k \leq n$, where n is the number of data points.

- convergence issues – the solution can fail to exist (the configurations can fall into a loop) or be “wrong”

- but it’s also fast

- interpretability can be a problem – sometimes the answer isn’t useful

- in spite of this, there are broad applications in marketing, computer vision (partition an image), or as a starting point for other models.

One common tool we use a lot in our systems is logistic regression.

Thought Experiment

Brian now asked us the following:

How would data science differ if we had a “grand unified theory of everything”?

He gave us some thoughts:

- Would we even need data science?

- Theory offers us a symbolic explanation of how the world works.

- What’s the difference between physics and data science?

- Is it just accuracy? After all, Newton wasn’t completely precise, but was pretty close.

If you think of the sciences as a continuum, where physics is all the way on the right, and as you go left, you get more chaotic, then where is economics on this spectrum? Marketing? Finance? As we go left, we’re adding randomness (and as a clever student points out, salary as well).

Bottomline: if we could model this data science stuff like we know how to model physics, we’d know when people will click on what ad. The real world isn’t this well understood, nor do we expect it to be in the future.

Does “data science” deserve the word “science” in its name? Here’s why maybe the answer is yes.

We always have more than one model, and our models are always changing.

The art in data science is this: translating the problem into the language of data science

The science in data science is this: given raw data, constraints and a problem statement, you have an infinite set of models to choose from, which you will use to maximize performance on some evaluation metric that you will have to specify. Every design choice you make can be formulated as a hypothesis, which you will use rigorous testing and experimentation to either validate or refute.

Never underestimate the power of creativity: usually people have vision but no method. As the data scientist, you have to turn it into a model within the operational constraints. You need to optimize a metric that you get to define. Moreover, you do this with a scientific method, in the following sense.

Namely, you hold onto your existing best performer, and once you have a new idea to prototype, then you set up an experiment wherein the two best models compete. You therefore have a continuous scientific experiment, and in that sense you can justify it as a science.

Classifiers

Given

- data

- a problem, and

- constraints,

we need to determine:

- a classifier,

- an optimization method,

- a loss function,

- features, and

- an evaluation metric.

Today we will focus on the process of choosing a classifier.

Classification involves mapping your data points into a finite set of labels or the probability of a given label or labels. Examples of when you’d want to use classification:

- will someone click on this ad?

- what number is this?

- what is this news article about?

- is this spam?

- is this pill good for headaches?

From now on we’ll talk about binary classification only (0 or 1).

Examples of classification algorithms:

- decision tree

- random forests

- naive bayes

- k-nearest neighbors

- logistic regression

- support vector machines

- neural networks

Which one should we use?

One possibility is to try them all, and choose the best performer. This is fine if you have no constraints or if you ignore constraints. But usually constraints are a big deal – you might have tons of data or not much time or both.

If I need to update 500 models a day, I do need to care about runtime. These end up being bidding decisions. Some algorithms are slow – k-nearest neighbors for example. Linear models, by contrast, are very fast.

One under-appreciated constraint of a data scientist is this: your own understanding of the algorithm.

Ask yourself carefully, do you understand it for real? Really? Admit it if you don’t. You don’t have to be a master of every algorithm to be a good data scientist. The truth is, getting the “best-fit” of an algorithm often requires intimate knowledge of said algorithm. Sometimes you need to tweak an algorithm to make it fit your data. A common mistake for people not completely familiar with an algorithm is to overfit.

Another common constraint: interpretability. You often need to be able to interpret your model, for the sake of the business for example. Decision trees are very easy to interpret. Random forests, on the other hand, not so much, even though it’s almost the same thing, but can take exponentially longer to explain in full. If you don’t have 15 years to spend understanding a result, you may be willing to give up some accuracy in order to have it easy to understand.

Note that credit card companies have to be able to explain their models by law, so decision trees make more sense than random forests.

How about scalability? In general, there are three things you have to keep in mind when considering scalability:

- learning time: how much time does it take to train the model?

- scoring time: how much time does it take to give a new user a score once the model is in production?

- model storage: how much memory does the production model use up?

Here’s a useful paper to look at when comparing models: “An Empirical Comparison of Supervised Learning Algorithms”, from which we learn:

- Simpler models are more interpretable but aren’t as good performers.

- The question of which algorithm works best is problem dependent

- It’s also constraint dependent

At M6D, we need to match clients (advertising companies) to individual users. We have logged the sites they have visited on the internet. Different sites collect this information for us. We don’t look at the contents of the page – we take the url and hash it into some random string and then we have, say, the following data about a user we call “u”:

u = <xyz, 123, sdqwe, 13ms>

This means u visited 4 sites and their urls hashed to the above strings. Recall last week we learned about a spam classifier where the features are words. We aren’t looking at the meaning of the words, so they might as well be strings.

At the end of the day we build a giant matrix whose columns correspond to sites and whose rows correspond to users, and there’s a “1” if that user went to that site.

To make this a classifier, we also need to associate the behavior “clicked on a shoe ad”. So, a label.

Once we’ve labeled as above, this looks just like spam classification. We can now rely on well-established methods developed for spam classification – reduction to a previously solved problem.
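Here is a toy sketch of building the user-by-site matrix and its labels. The urls, the users, the click labels and the choice of md5 as the hash are all invented for the example; nothing here reflects M6D's actual pipeline.

```python
import hashlib
import numpy as np

def hash_url(url):
    """Replace a url with an opaque token; md5 is an arbitrary choice for this sketch."""
    return hashlib.md5(url.encode("utf-8")).hexdigest()[:8]

# Made-up browsing logs and labels (1 = clicked on a shoe ad).
visits = {
    "u1": ["a.example/shoes", "b.example/news"],
    "u2": ["b.example/news"],
    "u3": ["a.example/shoes", "c.example/sports"],
}
clicked = {"u1": 1, "u2": 0, "u3": 1}

users = sorted(visits)
sites = sorted({hash_url(u) for urls in visits.values() for u in urls})
col = {site: j for j, site in enumerate(sites)}

# Rows are users, columns are hashed sites, entry is 1 if the user visited the site.
X = np.zeros((len(users), len(sites)), dtype=np.int8)
for i, user in enumerate(users):
    for url in visits[user]:
        X[i, col[hash_url(url)]] = 1
y = np.array([clicked[u] for u in users])
```

At real scale this matrix is almost entirely zeros, so you would store it in a sparse format rather than as a dense array.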

Logistic Regression

We have three core problems as data scientists at M6D:

- feature engineering,

- user level conversion prediction,

- bidding.

We will focus on the second. We use logistic regression– it’s highly scalable and works great for binary outcomes.

What if you wanted to do something else? You could simply find a threshold so that, above you get 1, below you get 0. Or you could use a linear model like linear regression, but then you’d need to cut off below 0 or above 1.

What’s better: fit a function that is bounded inside [0,1]. For example, the logit function (strictly speaking the inverse logit, or logistic function)

$P(t) = \frac{1}{1 + e^{-t}}$

We want to estimate

$P(Y_i = 1 | x_i) = \frac{1}{1 + e^{-(\alpha + \beta^T x_i)}}$.

To make this a linear model for the outcomes $Y_i$, we take the log of the odds ratio:

$\log\left(\frac{P(Y_i = 1 | x_i)}{1 - P(Y_i = 1 | x_i)}\right) = \alpha + \beta^T x_i$

The parameter $\alpha$ keeps the shape of the logit curve but shifts it back and forth. To interpret $\alpha$ further, consider what we call the base rate, the unconditional probability of “1” (so, in the case of ads, the base rate would correspond to the click-through rate, i.e. the overall tendency for people to click on ads; this is typically on the order of 1%).

If you had no information except the base rate, the average prediction would be just that. In a logistic regression, $\alpha$ defines the base rate. Specifically, the base rate is approximately equal to $\frac{1}{1 + e^{-\alpha}}$.

The parameter $\beta$ defines the slope of the logit function. Note in general it’s a vector which is as long as the number of features we are using for each data point.

Our immediate modeling goal is to use our training data to find the best choices for $\alpha$ and $\beta$.
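A tiny sketch of how the intercept relates to the base rate: the function that squashes a real number into (0, 1), its inverse (the log odds), and the intercept that corresponds to a 1% click-through rate. The function names are just labels for this example.

```python
import math

def inv_logit(t):
    """Map any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-t))

def log_odds(p):
    """Inverse of inv_logit: the log of the odds p / (1 - p)."""
    return math.log(p / (1.0 - p))

# Intercept matching a 1% click-through rate when all features are zero.
intercept = log_odds(0.01)
base_rate = inv_logit(intercept)
```

A 1% base rate corresponds to an intercept of roughly -4.6, which is why intercepts in click models tend to be large and negative.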

We will use a maximum likelihood estimation or convex optimization to achieve this; we can’t just use derivatives and vector calculus like we did with linear regression because it’s a complicated function of our data.

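The likelihood function is defined by:

$L(\Theta | X_1, X_2, \dots, X_n) = P(X | \Theta) = P(X_1 | \Theta) \cdot \ldots \cdot P(X_n | \Theta)$

where we are assuming the data points $X_i$ (each a feature vector $x_i$ together with its outcome $Y_i$) are independent and where $\Theta = \{\alpha, \beta\}$.

We then search for the parameters that maximize this having observed our data:

$\Theta_{MLE} = \mathrm{argmax}_{\Theta} \prod_{i=1}^n P(X_i | \Theta)$

The probability of a single observation is

$P(X_i | \Theta) = p_i^{Y_i} \cdot (1 - p_i)^{1 - Y_i}$

where $p_i = \frac{1}{1 + e^{-(\alpha + \beta^T x_i)}}$ is the modeled probability of a “1” for the binary outcome $Y_i.$ Taking the product of all of these we get our likelihood function which we want to maximize.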

Similar to last week, we now take the log and get something concave (its negative is convex), so any local maximum we find is the global maximum. Finally, we use numerical techniques to find it, which essentially follow the gradient like Newton’s method from calculus. Computer programs can do this pretty well. These algorithms depend on a step size, which we will need to adjust as we get closer to the global max or min – there’s an art to this piece of numerical optimization as well. Each step of the algorithm looks something like this:

$x_{n+1} = x_n - \frac{f'(x_n)}{f''(x_n)}$

where remember we are actually optimizing our parameters $\alpha$ and $\beta$ to maximize the (log) likelihood function, so the $x$ you see above is really a vector of $\beta$s and the function $f$ corresponds to our log likelihood.

“Flavors” of Logistic Regression for convex optimization.

The Newton’s method we described above is also called Iteratively Reweighted Least Squares. It uses the curvature of the log-likelihood to choose an appropriate step direction. The actual calculation involves the Hessian matrix, which is a k×k matrix when there are k features, and in particular requires its inversion. This is bad when there are lots of features, as in 10,000 or something. Typically we don’t have that many features but it’s not impossible.
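To make the Newton step concrete, here is a minimal sketch for the smallest interesting case: one feature plus an intercept, so the Hessian is only 2×2 and can be inverted by hand. The function name, the parameter names `a` and `b`, and the toy data are all my own assumptions for illustration. A real implementation would use a linear algebra library and guard against a singular Hessian.

```python
import math

def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))

def newton_logistic(xs, ys, steps=25):
    """Fit P(y = 1 | x) = sigmoid(a + b * x) by Newton's method on the log-likelihood."""
    a, b = 0.0, 0.0
    for _ in range(steps):
        g_a = g_b = 0.0            # gradient of the log-likelihood
        h_aa = h_ab = h_bb = 0.0   # entries of the negative Hessian
        for x, y in zip(xs, ys):
            p = sigmoid(a + b * x)
            w = p * (1.0 - p)      # the "reweighting" in the name IRLS
            g_a += y - p
            g_b += (y - p) * x
            h_aa += w
            h_ab += w * x
            h_bb += w * x * x
        # Invert the 2x2 negative Hessian explicitly and take the Newton step.
        det = h_aa * h_bb - h_ab * h_ab
        a += (h_bb * g_a - h_ab * g_b) / det
        b += (h_aa * g_b - h_ab * g_a) / det
    return a, b

# Toy data: 1 of 4 outcomes is a "1" when x = 0, and 3 of 4 when x = 1.
xs = [0, 0, 0, 0, 1, 1, 1, 1]
ys = [0, 0, 0, 1, 0, 1, 1, 1]
a_hat, b_hat = newton_logistic(xs, ys)
print(a_hat, b_hat, sigmoid(a_hat), sigmoid(a_hat + b_hat))
```

With a single binary feature the fitted probabilities should match the observed frequencies in each group, which is a handy way to check that the steps are doing the right thing.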

Another possible method to maximize our likelihood or log likelihood is called Stochastic Gradient Descent. It approximates the gradient using a single observation at a time. The algorithm updates the current best-fit parameters each time it sees a new data point. The good news is that there’s no big matrix inversion, and it works well with huge data and with sparse features; it’s a big deal in Mahout and Vowpal Wabbit. The bad news is it’s not such a great optimizer and it’s very dependent on step size.
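Here is a rough sketch of the stochastic version for a one-feature model, updating the parameters after every single data point. The function names, the fixed step size, the number of passes and the toy data are assumptions I picked for illustration; this is not how Mahout or Vowpal Wabbit implement it.

```python
import math
import random

def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))

def log_likelihood(a, b, data):
    """Log-likelihood of the model P(y = 1 | x) = sigmoid(a + b * x)."""
    total = 0.0
    for x, y in data:
        p = sigmoid(a + b * x)
        total += math.log(p) if y == 1 else math.log(1.0 - p)
    return total

def sgd_logistic(data, step=0.05, epochs=400, seed=0):
    """One parameter update per observation, with a fixed step size."""
    rng = random.Random(seed)
    data = list(data)
    a, b = 0.0, 0.0
    for _ in range(epochs):
        rng.shuffle(data)
        for x, y in data:
            p = sigmoid(a + b * x)
            # Gradient of the log-likelihood for this one data point.
            a += step * (y - p)
            b += step * (y - p) * x
    return a, b

data = [(0, 0), (0, 0), (0, 0), (0, 1), (1, 0), (1, 1), (1, 1), (1, 1)]
a_sgd, b_sgd = sgd_logistic(data)
print(a_sgd, b_sgd, log_likelihood(a_sgd, b_sgd, data))
```

With a fixed step size the parameters keep jiggling around the optimum rather than settling on it, which is one way to see why the choice of step size matters so much here.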

Evaluation

We generally use different evaluation metrics for different kinds of models.

First, for ranking models, where we just want to know a relative rank versus an absolute score, we’d look to one of:

Second, for classification models, we’d look at the following metrics:

- lift: how much more people are buying or clicking because of a model

- accuracy: how often the correct outcome is being predicted

- f-score

- precision

- recall

Finally, for density estimation, where we need to know an actual probability rather than a relative score, we’d look to:

In general it’s hard to compare lift curves, but you can compare AUC (area under the receiver operating characteristic curve), which is “base rate invariant.” In other words, if you bring the click-through rate from 1% to 2%, that’s 100% lift, but if you bring it from 4% to 7% that’s less lift (75%) but more effect (three percentage points instead of one). AUC does a better job in such a situation when you want to compare.
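One way to see the “base rate invariant” claim is to compute AUC as the fraction of (positive, negative) pairs that the model ranks correctly, then change the class mix and watch the number stay put. This is a sketch with made-up scores and labels; the pairwise counting below is a standard way to compute AUC but it is quadratic, so it only suits small examples.

```python
def auc(scores, labels):
    """Fraction of (positive, negative) pairs ranked correctly; ties count half."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))

# Made-up model scores and true outcomes: base rate of 50%.
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]
labels = [1, 1, 0, 1, 0, 0]

# Same data with every negative example repeated three times: base rate of 25%.
neg_scores = [s for s, y in zip(scores, labels) if y == 0]
scores_more_negatives = scores + neg_scores * 2
labels_more_negatives = labels + [0] * (len(neg_scores) * 2)

auc_original = auc(scores, labels)
auc_more_negatives = auc(scores_more_negatives, labels_more_negatives)
print(auc_original, auc_more_negatives)
```

Because AUC only depends on how positives are ranked against negatives, repeating the negatives changes the base rate without changing the score.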

Density estimation tests tell you how well you are fitting the conditional probability. In advertising, this may arise if you have a situation where each ad impression costs $c and for each conversion you receive $q. You will want to target every impression that has a positive expected value, i.e. whenever

$$P(\text{conversion} \mid X) \cdot q > c$$

But to do this you need to make sure the probability estimate on the left is accurate, which in this case means something like the mean squared error of the estimator is small. Note a model can give you good rankings but bad P estimates.
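To illustrate why good rankings are not enough here, the sketch below applies the expected-value rule to two sets of probability estimates that rank the impressions identically. The dollar amounts and probabilities are invented for the example, and the function name is my own.

```python
def should_target(p_conversion, payout_q, cost_c):
    """Target an impression only if its expected payout exceeds its cost."""
    return p_conversion * payout_q > cost_c

payout_q = 100.0   # dollars received per conversion (made up)
cost_c = 0.75      # dollars paid per impression (made up)

# Well-calibrated estimates versus estimates that are uniformly half as large.
# Both lists put the three impressions in exactly the same order.
calibrated = [0.02, 0.01, 0.005]
miscalibrated = [p / 2 for p in calibrated]

decisions_calibrated = [should_target(p, payout_q, cost_c) for p in calibrated]
decisions_miscalibrated = [should_target(p, payout_q, cost_c) for p in miscalibrated]
print(decisions_calibrated, decisions_miscalibrated)
```

The second model would do exactly as well as the first on any ranking metric, yet it passes on an impression that is worth buying.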

Similarly, features that rank highly on AUC don’t necessarily rank well with respect to mean absolute error. So feature selection, as well as your evaluation method, is completely context-driven.

Evaluating professor evaluations

I recently read this New York Times “Room for Debate” on professor evaluations. There were some reasonably good points made, with people talking about the trend that students generally give better grades to attractive professors and easy grading professors, and that they were generally more interested in the short-term than in the long-term in this sense.

For these reasons, it was stipulated, it would be better and more informative to have anonymous evaluations, or have students come back after some time to give evaluations, or interesting ideas like that.

Then there was a crazy crazy man named Jeff Sandefer, co-founder and master teacher at the Acton School of Business in Austin, Texas. He likes to call his students “customers” and here’s how he deals with evaluations:

Acton, the business school that I co-founded, is designed and is led exclusively by successful chief executives. We focus intently on customer feedback. Every week our students rank each course and professor, and the results are made public for all to see. We separate the emotional venting from constructive criticism in the evaluations, and make frequent changes in the program in real time.

We also tie teacher bonuses to the student evaluations and each professor signs an individual learning covenant with each student. We have eliminated grade inflation by using a forced curve for student grades, and students receive their grades before evaluating professors. Not only do we not offer tenure, but our lowest rated teachers are not invited to return.

First of all, I’m not crazy about the idea of weekly rankings and public shaming going on here. And how do you separate emotional venting from constructive criticism anyway? Isn’t the customer always right? Overall the experience of the teachers doesn’t sound good – if I have a choice as a teacher, I teach elsewhere, unless the pay and the students are stellar.

On the other hand, I think it’s interesting that they have a curve for student grades. This does prevent the extra good evaluations coming straight from grade inflation (I’ve seen it, it does happen).

Here’s one thing I didn’t see discussed, which is the students themselves and how much they want to be in the class. When I taught first semester calculus at Barnard twice in consecutive semesters, my experience was vastly different in the two classes.

The first time I taught, in the Fall, my students were mostly straight out of high school, bright eyed and bushy tailed, and were happy to be there, and I still keep in touch with some of them. It was a great class, and we all loved each other by the end of it. I got crazy good reviews.

By contrast, the second time I taught the class, which was the next semester, my students were annoyed, bored, and whiny. I had too many students in the class, partly because my reviews were so good. So the class was different on that score, but I don’t think that mattered so much to my teaching.

My theory, which was backed up by all the experienced Profs in the math department, was that I had the students who were avoiding calculus for some reason. And when I thought about it, they weren’t straight out of high school, they were all over the map. They generally were there only because they needed some kind of calculus to fulfill a requirement for their major.

Unsurprisingly, I got mediocre reviews, with some really pretty nasty ones. The nastiest ones, I noticed, all had some giveaway that they had a bad attitude, something like, “Cathy never explains anything clearly, and I hate calculus.” My conclusion is that I get great evaluations from students who want to learn calculus and nasty evaluations from students who resent me asking them to really learn calculus.

What should we do about prof evaluations?

The problem with using evaluations to measure professor effectiveness is that you might be a prof that only has ever taught calculus in the Spring, and then you’d be wrongfully punished. That’s where we are now, and people know it, so instead of using them they just mostly ignore them. Of course, the problem with not ever using these evaluations is that they might actually contain good information that you could use to get better at teaching.

We have a lot of data collected on teacher evaluations, so I figure we should be analyzing it to see if there really is a useful signal or not. And we should use domain expertise from experienced professors to see if there are any other effects besides the “Fall/Spring attitude towards math” effect to keep in mind.

It’s obviously idiosyncratic depending on field and even which class it is, i.e. Calc II versus Calc III. If there even is a signal after you extract the various effects and the “attractiveness” effect, I expect it to be very noisy and so I’d hate to see someone’s entire career depend on evaluations, unless there was something really outrageous going on.

In any case it would be fun to do that analysis.

Columbia Data Science course, week 3: Naive Bayes, Laplace Smoothing, and scraping data off the web

In the third week of the Columbia Data Science course, our guest lecturer was Jake Hofman. Jake is at Microsoft Research after recently leaving Yahoo! Research. He got a Ph.D. in physics at Columbia and taught a fantastic course on modeling last semester at Columbia.

After introducing himself, Jake drew up his “data science profile;” turns out he is an expert on a category that he created called “data wrangling.” He confesses that he doesn’t know if he spends so much time on it because he’s good at it or because he’s bad at it.

Thought Experiment: Learning by Example

Jake had us look at a bunch of text. What is it? After some time we describe each row as the subject and first line of an email in Jake’s inbox. We notice the bottom half of the rows of text looks like spam.

Now Jake asks us, how did you figure this out? Can you write code to automate the spam filter that your brain is?

Some ideas the students came up with:

- Any email is spam if it contains Viagra references. Jake: this will work if they don’t modify the word.

- Maybe something about the length of the subject?

- Exclamation points may point to spam. Jake: can’t just do that since “Yahoo!” would count.

- Jake: keep in mind spammers are smart. As soon as you make a move, they game your model. It would be great if we could get them to solve important problems.

- Should we use a probabilistic model? Jake: yes, that’s where we’re going.

- Should we use k-nearest neighbors? Should we use regression? Recall we learned about these techniques last week. Jake: neither. We’ll use Naive Bayes, which is somehow between the two.

Why is linear regression not going to work?

Say you make a feature for each lowercase word that you see in any email and then use R’s “lm” function:

lm(spam ~ word1 + word2 + …)

Wait, that’s too many variables compared to observations! We have on the order of 10,000 emails with on the order of 100,000 words. This will definitely overfit. Technically, this corresponds to the fact that the matrix $X^T X$ in the equation for linear regression is not invertible. Moreover, we maybe can’t even store it because it’s so huge.

Maybe you could limit to top 10,000 words? Even so, that’s too many variables vs. observations to feel good about it.

Another thing to consider is that target is 0 or 1 (0 if not spam, 1 if spam), whereas you wouldn’t get a 0 or a 1 in actuality through using linear regression, you’d get a number. Of course you could choose a critical value so that above that we call it “1” and below we call it “0”. Next week we’ll do even better when we explore logistic regression, which is set up to model a binary response like this.

How about k-nearest neighbors?

To use k-nearest neighbors we would still need to choose features, probably corresponding to words, and you’d likely define the value of those features to be 0 or 1 depending on whether the word is present or not. This leads to a weird notion of “nearness”.

Again, with 10,000 emails and 100,000 words, we’ll encounter a problem: it’s not a singular matrix this time but rather that the space we’d be working in has too many dimensions. This means that, for example, it requires lots of computational work to even compute distances, but even that’s not the real problem.

The real problem is even more basic: even your nearest neighbors are really far away. This is called “the curse of dimensionality”. This problem makes for a poor algorithm.
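A small simulation makes this visible. The sketch below drops random points into a unit cube and compares the distance to the nearest point with the distance to the farthest one. Uniform random points are my own simplifying assumption and not a model of email word features; the function name is also mine.

```python
import math
import random

def nearest_to_farthest_ratio(dim, n_points=200, seed=0):
    """Ratio of nearest to farthest Euclidean distance from one random query point."""
    rng = random.Random(seed)
    query = [rng.random() for _ in range(dim)]
    distances = []
    for _ in range(n_points):
        point = [rng.random() for _ in range(dim)]
        distances.append(math.sqrt(sum((q - p) ** 2 for q, p in zip(query, point))))
    return min(distances) / max(distances)

for dim in (2, 10, 100, 1000):
    print(dim, round(nearest_to_farthest_ratio(dim), 3))
```

As the dimension grows the ratio creeps toward 1, meaning the “nearest” neighbor is barely closer than the farthest point, so nearness stops carrying much information.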

Question: what if sharing a bunch of words doesn’t mean sentences are near each other in the sense of language? I can imagine two sentences with the same words but very different meanings.

Jake: it’s not as bad as it sounds like it might be – I’ll give you references at the end that partly explain why.

Aside: digit recognition

In this case k-nearest neighbors works well and moreover you can write it in a few lines of R.

Take your underlying representation apart pixel by pixel, say in a 16 x 16 grid of pixels, and measure how bright each pixel is. Unwrap the 16×16 grid and put it into a 256-dimensional space, which has a natural Euclidean metric. Now apply the k-nearest neighbors algorithm.

Some notes:

- If you vary the number of neighbors, it changes the shape of the boundary and you can tune k to prevent overfitting.

- You can get 97% accuracy with a sufficiently large data set.

- The result can be viewed in a “confusion matrix”.
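The lecture did this in R, but the idea is short in any language. Here is a minimal Python sketch of k-nearest neighbors with the Euclidean metric, using tiny 4-pixel “images” as a stand-in for the 256-pixel ones. The data, labels and function names are invented for illustration.

```python
import math
from collections import Counter

def euclidean(u, v):
    """Euclidean distance between two equal-length brightness vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

def knn_predict(train, query, k=3):
    """Label the query by majority vote among its k nearest training points."""
    nearest = sorted(train, key=lambda item: euclidean(item[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

# Each training example is (unwrapped pixel brightnesses, label).
train = [
    ([0.1, 0.0, 0.2, 0.1], "dark"),
    ([0.0, 0.2, 0.1, 0.0], "dark"),
    ([0.2, 0.1, 0.0, 0.1], "dark"),
    ([0.9, 1.0, 0.8, 0.9], "bright"),
    ([1.0, 0.8, 0.9, 1.0], "bright"),
    ([0.8, 0.9, 1.0, 0.8], "bright"),
]

print(knn_predict(train, [0.8, 0.9, 1.0, 0.7]))
print(knn_predict(train, [0.1, 0.1, 0.1, 0.2]))
```

Changing `k` is the tuning knob mentioned in the notes: a small `k` follows the training data closely, while a larger `k` smooths the decision boundary.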

Naive Bayes

Question: You’re testing for a rare disease, where 1% of the population is infected. You have a highly sensitive and specific test:

- 99% of sick patients test positive

- 99% of healthy patients test negative

Given that a patient tests positive, what is the probability that the patient is actually sick?

Answer: Imagine you have 100×100 = 10,000 people. 100 are sick, 9,900 are healthy. 99 sick people test sick, and 99 healthy people do too. So if you test positive, you’re equally likely to be healthy or sick. So the answer is 50%.
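The counting argument is easy to check in a few lines of Python. The variable names are my own; the numbers are the ones from the question.

```python
population = 10_000
prevalence = 0.01     # 1% of the population is infected
sensitivity = 0.99    # 99% of sick patients test positive
specificity = 0.99    # 99% of healthy patients test negative

sick = population * prevalence
healthy = population - sick

true_positives = sick * sensitivity             # sick people who test positive
false_positives = healthy * (1 - specificity)   # healthy people who test positive

# Probability of being sick given a positive test.
posterior = true_positives / (true_positives + false_positives)
print(posterior)
```

Try lowering the prevalence to 0.1% and the answer drops well below one half, even though the test itself hasn’t changed.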

Let’s do it again using fancy notation so we’ll feel smart:

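Recall

$$p(y \mid x)\, p(x) = p(x, y) = p(x \mid y)\, p(y)$$

and solve for $p(y \mid x)$:

$$p(y \mid x) = \frac{p(x \mid y)\, p(y)}{p(x)}$$

The denominator can be thought of as a “normalization constant;” we will often be able to avoid explicitly calculating this. When we apply the above to our situation, we get:

$$p(\text{sick} \mid +) = \frac{p(+ \mid \text{sick})\, p(\text{sick})}{p(+)} = \frac{0.99 \times 0.01}{0.99 \times 0.01 + 0.01 \times 0.99} = \frac{1}{2}$$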

This is called “Bayes’ Rule“. How do we use Bayes’ Rule to create a good spam filter? Think about it this way: if the word “Viagra” appears, this adds to the probability that the email is spam.