Archive

Fingers crossed – book coming out next May

As it turns out, it takes a while to write a book, and then another few months to publish it.

I’m very excited today to tentatively announce that my book, which is tentatively entitled Weapons of Math Destruction: How Big Data Increases Inequality and Threatens Democracy, will be published in May 2016, in time to appear on summer reading lists and well before the election.

Fuck yeah! I’m so excited.

p.s. Fight for 15 is happening now.

Fairness, accountability, and transparency in big data models

As I wrote about already, last Friday I attended a one day workshop in Montreal called FATML: Fairness, Accountability, and Transparency in Machine Learning. It was part of the NIPS conference for computer science, and there were tons of nerds there, and I mean tons. I wanted to give a report on the day, as well as some observations.

First of all, I am super excited that this workshop happened at all. When I left my job at Intent Media in 2011 with the intention of studying these questions and eventually writing a book about them, they were, as far as I know, on nobody else’s radar. Now, thanks to the organizers Solon and Moritz, there are communities of people from law, computer science, and policy circles coming together to exchange ideas and strategies to tackle the problems. This is what progress feels like!

OK, so on to what the day contained and my copious comments.

Hannah Wallach

Sadly, I missed the first two talks, and an introduction to the day, because of two airplane cancellations (boo American Airlines!). I arrived in the middle of Hannah Wallach’s talk, the abstract of which is located here. Her talk was interesting, and I liked her idea of having social scientists partnered with data scientists and machine learning specialists, but I do want to mention that, although there’s a remarkable history of social scientists working within tech companies – say at Bell Labs and Microsoft and such – we don’t see that in finance at all, nor does it seem poised to happen. So in other words, we certainly can’t count on social scientists to be on hand when important mathematical models are getting ready for production.

Also, I liked Hannah’s three categories of models: predictive, explanatory, and exploratory. Even though I don’t necessarily think that a given model will fall neatly into one category or the other, they still give you a way to think about what we do when we make models. As an example, we think of recommendation models as ultimately predictive, but they are (often) predicated on the ability to understand people’s desires as made up of distinct and consistent dimensions of personality (like when we use PCA or something equivalent). In this sense we are also exploring how to model human desire and consistency. For that matter I guess you could say any model is at its heart an exploration into whether the underlying toy model makes any sense, but that question is dramatically less interesting when you’re using linear regression.

Anupam Datta and Michael Tschantz

Next up Michael Tschantz reported on work with Anupam Datta that they’ve done on Google profiles and Google ads. They started with Google’s privacy policy, which I can’t find but which claims you won’t receive ads based on things like your health problems. Starting with a bunch of browsers with no cookies, and thinking of each of them as fake users, they did experiments to see what actually happened both to the ads for those fake users and to the Google ad profiles for each of those fake users. They found that, at least sometimes, they did get the “wrong” kind of ad, although whether Google can be blamed or whether the advertiser had broken Google’s rules isn’t clear. Also, they found that fake “women” and “men” (who did not differ by any other variable, including their searches) were offered drastically different ads related to job searches, with men being offered way more ads to get $200K+ jobs, although these were basically coaching sessions for getting good jobs, so again the advertisers could have decided that men are more willing to pay for such coaching.

An issue I enjoyed talking about was brought up in this talk, namely the question of whether such a finding is entirely evanescent or whether we can call it “real.” Since Google constantly updates its algorithm, and since ad budgets are coming and going, even the same experiment performed an hour later might have different results. In what sense can we then call any such experiment statistically significant or even persuasive? Also, IRL we don’t have clean browsers, so what happens when we have dirty browsers and we’re logged into Gmail and Facebook? By then there are so many variables it’s hard to say what leads to what, but should that make us stop trying?

From my perspective, I’d like to see more research into questions like, of the top 100 advertisers on Google, who saw the majority of the ads? What was the economic, racial, and educational makeup of those users? A similar but different (because of the auction) question would be to reverse-engineer the advertisers’ Google ad targeting methodologies.

Finally, the speakers mentioned a failure on Google’s part of transparency. In your advertising profile, for example, you cannot see (and therefore cannot change) your marriage status, but advertisers can target you based on that variable.

Sorelle Friedler, Carlos Scheidegger, and Suresh Venkatasubramanian

Next up we had Sorelle talk to us about her work with two guys with enormous names. They think about how to make stuff fair, the heart of the question of this workshop.

First, if we included race in a resume sorting model, we’d probably see negative impact because of historical racism. Even if we removed race but included other attributes correlated with race (say zip code) this effect would remain. And it’s hard to know exactly when we’ve removed the relevant attributes, but one thing these guys did was define that precisely.

Second, say now you have some idea of the categories that are given unfair treatment, what can you do? One thing suggested by Sorelle et al is to first rank people in each category – to assign each person a percentile in their given category – and then to use the “forgetful function” and only consider that percentile. So, if we decided at a math department that we want 40% women graduate students, to achieve this goal with this method we’d independently rank the men and women, and we’d offer enough spots to top women to get our quota and separately we’d offer enough spots to top men to get our quota. Note that, although it comes from a pretty fancy setting, this is essentially affirmative action. That’s not, in my opinion, an argument against it. It’s in fact yet another argument for it: if we know women are systemically undervalued, we have to fight against it somehow, and this seems like the best and simplest approach.
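To make the within-category ranking concrete, here is a minimal sketch. It is my own toy version of the idea, not Sorelle et al's actual method, and the applicant names, scores, and quotas are all made up for illustration.

```python
from collections import defaultdict

def group_members(applicants):
    """Collect (score, name) pairs per category."""
    by_group = defaultdict(list)
    for name, group, score in applicants:
        by_group[group].append((score, name))
    return by_group

def percentile_within_group(applicants):
    """Map each name to a percentile computed only against their own category."""
    percentiles = {}
    for members in group_members(applicants).values():
        members.sort()  # lowest score first
        for rank, (_, name) in enumerate(members, start=1):
            percentiles[name] = rank / len(members)
    return percentiles

def admit(applicants, spots_per_group):
    """Rank each category separately and offer spots to the top of each."""
    admitted = []
    for group, members in group_members(applicants).items():
        members.sort(reverse=True)  # highest score first
        quota = spots_per_group.get(group, 0)
        admitted.extend(name for _, name in members[:quota])
    return sorted(admitted)

# Hypothetical applicants: (name, category, raw score)
applicants = [
    ("Ana", "F", 70), ("Bea", "F", 80), ("Cat", "F", 60),
    ("Dan", "M", 90), ("Eli", "M", 85), ("Fox", "M", 75), ("Gus", "M", 95),
]
percentiles = percentile_within_group(applicants)
admitted = admit(applicants, {"F": 2, "M": 3})  # 2 of 5 spots is 40% women
```

Notice that once you have the percentiles, the raw scores never get compared across categories, which is the whole point of the "forgetting" step.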

Ed Felten and Josh Kroll

After lunch Ed Felten and Josh Kroll jointly described their work on making algorithms accountable. Basically they suggested a trustworthy and encrypted system of paper trails that would support a given algorithm (doesn’t really matter which) and create verifiable proofs that the algorithm was used faithfully and fairly in a given situation. Of course, we’d really only consider an algorithm to be used “fairly” if the algorithm itself is fair, but putting that aside, this addressed the question of whether the same algorithm was used for everyone, and things like that. In lawyer speak, this is called “procedural fairness.”

So for example, if we thought we could, we might want to run the algorithm for punishment for drug use through this system, and we might find that the rules are applied differently to different people. This system would catch that kind of problem, at least ideally.

David Robinson and Harlan Yu

Next up we talked to David Robinson and Harlan Yu about their work in Washington D.C. with policy makers and civil rights groups around machine learning and fairness. These two have been active with civil rights groups and were an important part of both the Podesta Report, which I blogged about here, and the drafting of the Civil Rights Principles of Big Data.

The question of what policy makers understand and how to communicate with them came up several times in this discussion. We decided that, to combat cherry-picked examples we see in Congressional Subcommittee meetings, we need to have cherry-picked examples of our own to illustrate what can go wrong. That sounds bad, but put it another way: people respond to stories, especially to stories with innocent victims that have been wronged. So we are on the look-out.

Closing panel with Rayid Ghani and Foster Provost

I was on the closing panel with Rayid Ghani and Foster Provost, and we each had a few minutes to speak and then there were lots of questions and fun arguments. To be honest, since I was so in the moment during this panel, and also because I was jonesing for a beer, I can’t remember everything that happened.

As I remember, Foster talked about an algorithm he had created that does its best to “explain” the decisions of a complicated black box algorithm. So in real life our algorithms are really huge and messy and uninterpretable, but this algorithm does its part to add interpretability to the outcomes of that huge black box. The example he gave was to understand why a given person’s Facebook “likes” made a black box algorithm predict they were gay: by displaying, in order of importance, which likes added the most predictive power to the algorithm.
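I don't know the details of Foster's algorithm, but one simple way to get this kind of ranking is to remove each like in turn and see how much the black box's score drops. Here is a rough sketch under that assumption. The `black_box_score` function and the page names are stand-ins I invented, and a real black box would not be a simple weighted sum.

```python
def black_box_score(likes):
    """Stand-in for an opaque model: turns a set of likes into a score in [0, 1]."""
    hidden_weights = {"page_a": 0.5, "page_b": 0.3, "page_c": 0.05}
    return min(1.0, sum(hidden_weights.get(like, 0.0) for like in likes))

def explain(likes, score_fn):
    """Rank likes by how much the score falls when each one is removed."""
    base = score_fn(likes)
    drops = {like: base - score_fn(likes - {like}) for like in likes}
    return sorted(drops.items(), key=lambda item: item[1], reverse=True)

# Hypothetical user with four likes; page_d is unknown to the model
likes = {"page_a", "page_b", "page_c", "page_d"}
ranking = explain(likes, black_box_score)
ranked_names = [name for name, _ in ranking]
```

The explainer only calls the score function, so it never needs to look inside the black box.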

[Aside, can anyone explain to me what happens when such an algorithm comes across a person with very few likes? I’ve never understood this very well. I don’t know about you, but I have never “liked” anything on Facebook except my friends’ posts.]

Rayid talked about his work trying to develop a system for teachers to understand which students were at risk of dropping out, and for that system to be fair, and he discussed the extent to which that system could or should be transparent.

Oh yeah, and that reminds me that, after describing my book, we had a pretty great argument about whether credit scoring models should be open source, and what that would mean, and what feedback loops that would engender, and who would benefit.

Altogether a great day, and a fantastic discussion. Thanks again to Solon and Moritz for their work in organizing it.

What the fucking shit, Barbie?

I’m back from Haiti! It was amazing and awesome, and please stand by for more about that, with cultural observations and possibly a slide show if you’re all well behaved.

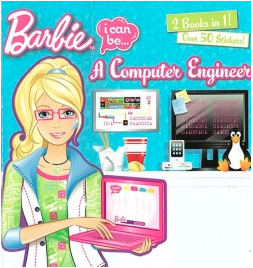

Today, thanks to my math camp buddy Lenore Cowen, I am going to share with you an amazing blog post by Pamela Ribon. Her post is called Barbie Fucks It Up Again and it describes a Barbie book entitled Barbie: I Can Be a Computer Engineer.

Just to give you an idea of the plot, Barbie’s sister finds Barbie engaged on a project on her computer, and after asking her about it, Barbie responds:

“I’m only creating the design ideas,” Barbie says, laughing. “I’ll need Steven and Brian’s help to turn it into a real game!”

What the fucking shit, Barbie?

Core Econ: a free economics textbook

Today I want to tell you guys about core-econ.org, a free (although you do have to register) textbook my buddy Suresh Naidu is using this semester to teach out of and is also contributing to, along with a bunch of other economists.

It’s super cool, and I wish a class like that had been available when I was an undergrad. In fact I took an economics course at UC Berkeley and it was a bad experience – I couldn’t figure out why anyone would think that people behaved according to arbitrary mathematical rules. There was no discussion of whether the assumptions were valid, no data to back it up. I decided that anybody who kept going had to be either religious or willing to say anything for money.

Not much has changed, and that means that Econ 101 is a terrible gateway for the subject, letting in people who are mostly kind of weird. This is a shame because, later on in graduate level economics, there really is no reason to use toy models of society without argument and without data; the sky’s the limit when you get through the bullshit at the beginning. The goal of the Core Econ project is to give students a taste for the good stuff early; the subtitle on the webpage is teaching economics as if the last three decades happened.

What does that mean? Let’s take a look at the first few chapters of the curriculum (the full list is here):

- The capitalist revolution

- Innovation and the transition from stagnation to rapid growth

- Scarcity, work and progress

- Strategy, altruism and cooperation

- Property, contract and power

- The firm and its employees

- The firm and its customers

Once you register, you can download a given chapter in pdf form. So I did that for Chapter 6, The firm and its employees, and here’s a screenshot of the first page:

The chapter immediately dives into a discussion of Apple and Foxconn. Interesting! Topical! Like, it might actually help you understand the newspaper!! Can you imagine that?

The project is still in beta version, so give it some time to smooth out the rough edges, but I’m pretty excited about it already. It has super high production values and will squarely compete with the standard textbooks and curriculums, which is a good thing, both because it’s good stuff and because it’s free.

De-anonymizing what used to be anonymous: NYC taxicabs

Thanks to Artem Kaznatcheev, I learned yesterday about the recent work of Anthony Tockar in exploring the field of anonymization and deanonymization of datasets.

Specifically, he looked at data on the 2013 cab rides in New York City, which was provided under a FOIL request, and he stalked celebrities Bradley Cooper and Jessica Alba (and discovered that neither of them tipped the cabby). He also stalked a man who went to a slew of NYC titty bars: found out where the guy lived and even got a picture of him.

Previously, some other civic hackers had identified the cabbies themselves, because the original dataset had scrambled the medallions, but not very well.
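To see why weak scrambling fails, here is a sketch under two assumptions of mine: that the scrambling was a plain unsalted hash (SHA-256 here, chosen only for illustration) and that medallions follow a hypothetical digit-letter-digit-digit pattern. When the space of possible IDs is that small, you can hash every candidate and build a reverse lookup table.

```python
import hashlib
from itertools import product
from string import ascii_uppercase, digits

def scramble(medallion):
    """Unsalted hash of an ID (an assumed scheme, for illustration only)."""
    return hashlib.sha256(medallion.encode("ascii")).hexdigest()

def build_lookup():
    """Hash every possible ID in the assumed format and map hash -> ID."""
    table = {}
    for d1, letter, d2, d3 in product(digits, ascii_uppercase, digits, digits):
        medallion = d1 + letter + d2 + d3
        table[scramble(medallion)] = medallion
    return table

lookup = build_lookup()           # only 10 * 26 * 10 * 10 candidates
released = scramble("7B42")       # what a "scrambled" dataset would contain
recovered = lookup[released]      # the original ID, recovered instantly
```

The hash function isn't the weak part here. The trouble is that there are so few possible inputs that trying them all is trivial.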

The point he was trying to make was that we should not assume that “anonymized” datasets actually protect privacy. Instead we should learn how to use more thoughtful approaches to anonymizing stuff, and he proposes a method called “differential privacy,” which he explains here. It involves adding noise to the data, in a certain way, so that at the end any given person doesn’t risk too much of their own privacy by being included in the dataset versus being not included in the dataset.
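For a flavor of the "adding noise in a certain way" part, here is a minimal sketch of the standard Laplace mechanism applied to a simple count. This is the textbook construction, not necessarily what Tockar implemented, and the ride records and the `epsilon` value are made up.

```python
import random

def laplace_noise(scale, rng):
    """Draw Laplace(0, scale) noise as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(records, predicate, epsilon, rng):
    """Return a noisy count of the records matching the predicate.

    Adding or removing one person changes a count by at most 1, so the
    sensitivity is 1 and the noise scale is 1 / epsilon.
    """
    true_count = sum(1 for record in records if predicate(record))
    return true_count + laplace_noise(1.0 / epsilon, rng)

def no_tip(ride):
    return ride["tip"] == 0.0

# Hypothetical ride records
rides = [{"tip": 0.0}, {"tip": 2.5}, {"tip": 0.0}, {"tip": 1.0}]
rng = random.Random(0)
noisy = private_count(rides, no_tip, epsilon=0.5, rng=rng)
```

A smaller `epsilon` means more noise and more privacy, at the cost of less accurate answers. That tradeoff is exactly the kind of nuance a non-expert would have to get right.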

Bottom line, it’s actually pretty involved mathematically, and although I’m a nerd and it doesn’t intimidate me, it does give me pause. Here are a few concerns:

- It means that most people, for example the person in charge of fulfilling FOIL requests, will not actually understand the algorithm.

- That means that, if there’s a requirement that such a procedure is used, that person will have to use and trust a third party to implement it. This leads to all sorts of problems in itself.

- Just to name one, depending on what kind of data it is, you have to implement differential privacy differently. There’s no doubt that a complicated mapping of datatype to methodology will be screwed up when the person doing it doesn’t understand the nuances.

- Here’s another: the third party may not be trustworthy and may have created a backdoor.

- Or they just might get it wrong, or do something lazy that doesn’t actually work, and they can get away with it because, again, the user is not an expert and cannot accurately evaluate their work.

Altogether I’m imagining that this is at best an expensive solution for very important datasets, and won’t be used for your everyday FOIL requests like taxicab rides unless the culture around privacy changes dramatically.

Even so, super interesting and important work by Anthony Tockar. Also, if you think that’s cool, take a look at my friend Luis Daniel‘s work on de-anonymizing the Stop & Frisk data.

The Head First book series

I’ve been reading Head First Java this past week and I’m super impressed and want to tell you guys about it if you don’t already know.

I wanted to learn what the big fuss was about object-oriented programming, plus it seems like all the classes my Lede students are planning to take either require python or java, so this seemed like a nice bridge.

But the book is outstanding, with quirky cartoons and a super fun attitude, and I’m on page 213 after less than a week, and yes that’s out of more than 600 pages but what I’m saying is that it’s a thrilling read.

My one complaint is how often the book talks about motivating programmers with women in tight sweaters. And no, I don’t think they were assuming the programmers were lesbians, but I could be wrong and I hope I am. At the beginning they made the point that people remember stuff better when there is emotional attachment to things, so I’m guessing they’re getting me annoyed to help me remember details on reference types.

Here’s another Head First book which my nerd mom recommended to me some time ago, and I bought but haven’t read yet, but now I really plan to: Head First Design Patterns. Because ultimately, programming is just a tool set and you need to learn how to think about constructing stuff with those tools. Exciting!

And by the way, there is a long list of Head First books, and I hear good things about the whole series. Honestly I will never write a technical book in the old-fashioned dry way again.

Nerding out: RSA on an iPython Notebook

Yesterday was a day filled with secrets and codes. In the morning, at The Platform, we had guest speaker Columbia history professor Matthew Connelly, who came and talked to us about his work with declassified documents. Two big and slightly depressing take-aways for me were the following:

- As records have become digitized, it has gotten easy for people to get rid of archival records in large quantities. Just press delete.

- As records have become digitized, it has become easy to trace the access of records, and in particular the leaks. Connelly explained that, to some extent, Obama’s harsh approach to leakers and whistleblowers might be explained as simply “letting the system work.” Yet another way that technology informs the way we approach human interactions.

After class we had section, in which we discussed the Computer Science classes some of the students are taking next semester (there’s a list here) and then I talked to them about prime numbers and the RSA crypto system.

I got really into it and wrote up an iPython Notebook which could be better but is pretty good, I think, and works out one example completely, encoding and decoding the message “hello”.

The underlying file is here but if you want to view it on the web just go here.
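If you just want the gist without opening the notebook, here is a tiny textbook-RSA sketch. It is not the code from my notebook, the primes are toy-sized, and encrypting one character at a time with no padding is completely insecure, so treat it as a demonstration of the arithmetic only. It assumes Python 3.8 or later for the modular inverse via `pow`.

```python
# Toy key generation with tiny primes (real keys use primes hundreds of digits long)
p, q = 61, 53
n = p * q                    # public modulus
phi = (p - 1) * (q - 1)      # Euler's totient of n
e = 17                       # public exponent, coprime to phi
d = pow(e, -1, phi)          # private exponent: inverse of e modulo phi

def encrypt(message):
    """Encrypt each character's code point separately with the public key (e, n)."""
    return [pow(ord(ch), e, n) for ch in message]

def decrypt(ciphertext):
    """Recover each character with the private key (d, n)."""
    return "".join(chr(pow(c, d, n)) for c in ciphertext)

ciphertext = encrypt("hello")
plaintext = decrypt(ciphertext)
```

The whole thing rests on the fact that `e * d` leaves remainder 1 when divided by `phi`, so raising to the power `e` and then to the power `d` gets you back where you started modulo `n`.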

The Lede Program students are rocking it

Yesterday was the end of the first half of the Lede Program, and the students presented their projects, which were really impressive. I am hoping some of them will be willing to put them up on a WordPress site or something like that in order to showcase them and so I can brag about them more explicitly. Since I didn’t get anyone’s permission yet, let me just say: wow.

During the second half of the program the students will do another project (or continue their first) as homework for my class. We’re going to start planning for that on the first day, so the fact that they’ve all dipped their toes into data projects is great. For example, during presentations yesterday I heard the following a number of times: “I spent most of my time cleaning my data” or “next time I will spend more time thinking about how to drill down in my data to find an interesting story”. These are key phrases for people learning lessons with data.

Since they are journalists (I’ve learned a thing or two about journalists and their mindset in the past few months) they love projects because they love deadlines and they want something they can add to their portfolio. Recently they’ve been learning lots of geocoding stuff, and coming up they’ll be learning lots of algorithms as well. So they’ll be well equipped to do some seriously cool shit for their final project. Yeah!

In addition to the guest lectures I’m having in The Platform, I’ll also be reviewing prerequisites for the classes many of them will be taking in the Computer Science department in the fall, so for example linear algebra, calculus, and basic statistics. I just bought them all a copy of How to Lie with Statistics as well as The Cartoon Guide to Statistics, both of which I adore. I’m also making them aware of Statistics Done Wrong, which is online. I am also considering The Cartoon Guide to Calculus, which I have but I haven’t read yet.

Keep an eye out for some of their amazing projects! I’ll definitely blog about them once they’re up.

Update on the Lede Program

My schedule nowadays is to go to the Lede Program classes every morning from 10am until 1pm, then office hours, when I can, from 2-4pm. The students are awesome and are learning a huge amount in a super short time.

So for instance, last time I mentioned we set up iPython notebooks on the cloud, on Amazon EC2 servers. After getting used to the various kinds of data structures in python like integers and strings and lists and dictionaries, and some simple for loops and list comprehensions, we started examining regular expressions and we played around with the old enron emails for things like social security numbers and words that had four or more vowels in a row (turns out that always means you’re really happy as in “woooooohooooooo!!!” or really sad as in “aaaaaaarghghgh”).
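Here is roughly what those two searches look like, as a sketch rather than the exact patterns we used in class. The sample text is invented, and the SSN pattern only catches the dashed `ddd-dd-dddd` form.

```python
import re

# Social security numbers written as 3 digits, 2 digits, 4 digits with dashes
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

# Whole words containing four or more vowels in a row
VOWEL_RUN_PATTERN = re.compile(r"\b\w*[aeiou]{4,}\w*\b", re.IGNORECASE)

happy = "woooooohooooooo"
sad = "aaaaaaarghghgh"
text = (
    "My number is 123-45-6789. "
    f"We won the deal, {happy}!!! "
    f"Then legal called, {sad}."
)

ssns = SSN_PATTERN.findall(text)
vowel_words = VOWEL_RUN_PATTERN.findall(text)
```

Neither pattern uses capture groups, so `findall` hands back the full matched strings.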

Then this week we installed git and started working in an editor and using the command line, which is exciting, and then we imported pandas and started to understand dataframes and series and boolean indexes. At some point we also plotted something in matplotlib. We had a nice discussion about unsupervised learning and how such techniques relate to surveillance.

My overall conclusion so far is that when you have a class of 20 people installing git, everything that can go wrong does (versus if you do it yourself, then just anything that could go wrong might), and also that there really should be a better viz tool than matplotlib. Plus my Lede students are awesome.

Ignore data, focus on power

I get asked pretty often whether I “believe” in open data. I tend to murmur a response along the lines of “it depends,” which doesn’t seem too satisfying to me or to the person I’m talking to. But this morning, I’m happy to say, I’ve finally come up with a kind of rule, which isn’t universal. It focuses on power.

Namely, I like data that shines light on powerful people. Like the Sunlight Foundation tracks money and politicians, and that’s good. But I tend to want to protect powerless people, like people who are being surveilled with sensors and their phones. And the thing is, most of the open data focuses on the latter. How people ride the subway or how they use the public park or where they shop.

Something in the middle is crime data, where you have a compilation of data on people being stopped by the police (powerless) and on the police themselves (powerful). But here as well you’ll notice an asymmetry in identifying information. Looking at Stop and Frisk data, for example, there’s a precinct to identify the police officer, but no badge number, whereas there’s a bunch of identifying information recorded about the person being stopped.

A lot of the time you won’t even find data about powerful people. Worker bees get scored but the managers are somehow above scoring. Bloomberg never scored his lieutenants or himself even when he insisted that teachers should be scored. I like to keep an eye on who gets data collected about them. The power is where the data isn’t.

I guess my point is this. Data and data modeling are not magical tools. They are in fact crude tools, and so to focus on them is misleading and distracting from the real show, which is always about power (and/or money). It’s a boondoggle to think about data when we should be thinking about when and how a model is being wielded and who gets to decide.

One of the biggest problems we face is that all this data is being collected and saved now and the models haven’t even been invented yet. That’s why there’s so much urgency in getting reasonable laws in place to protect the powerless.

The Lede Program has awesome faculty

A few weeks ago I mentioned that I’m the Program Director for the new Lede Program at the Columbia Graduate School of Journalism. I’m super excited to announce that I’ve found amazing faculty for the summer part of the program, including:

- Jonathan Soma, who will be the primary instructor for Basic Computing and for Algorithms

- Dennis Tenen, who will be helping Soma in the first half of the summer with Basic Computing

- Chris Wiggins, who will be helping Soma in the second half of the summer with Algorithms

- An amazing primary instructor for Databases who I will announce soon,

- Matthew Jones, who will help that amazing yet-to-be-announced instructor in Data and Databases

- Three amazing TA’s: Charles Berret, Sophie Chou, and Josh Vekhter (who doesn’t have a website!).

I’m planning to teach The Platform with the help of a bunch of generous guest lecturers (please make suggestions or offer your services!).

Applications are open now, and we’re hoping to get amazing students to enjoy these amazing faculty and the truly innovative plan they have for the summer (and I don’t use the word “innovative” lightly!). We’ve already gotten some super strong applications and made a couple offers of admission.

Also, I was very pleased yesterday to see a blogpost I wrote about the genesis and the goals of the program be published in PBS’s MediaShift.

Finally, it turns out I’m a key influencer, according to The Big Roundtable.

Does OpenSSL bug prove that open source code doesn’t work?

By now most of you have read about the major bug that was found in OpenSSL, an open source security software toolkit. The bug itself is called the Heartbleed Bug, and there’s lots of information about it and how to fix it here. People are super upset about this, and lots of questions remain.

For example, was it intentionally undermined? Has the NSA deliberately inserted weaknesses into this as well? It seems like the jury is out right now, but if I’m the guy who put in the bug, I’m changing my name and going undercover just in case.

Next, how widely was the weakness exploited? If you’re super worried about stuff, or if you are a particular target of attack, the answer is probably “widely.” The frustrating thing is that there’s seemingly no way to measure or test that assumption, since the attackers would leave no trace.

Here’s what I find the most interesting question: what will the long-term reaction be to open source software? People might think that open source code is a bust after this. They will complain that something like this should never have been allowed to happen – that the whole point of open software is that people should be checking this stuff as it comes in – and it never would have happened if there were people getting paid to test the software.

First of all, it did work as intended, even though it took two years instead of two days like people might have wanted. And maybe this shouldn’t have happened like it did, but I suspect that people will learn this particular lesson really well as of now.

But in general terms, bugs are everywhere. Think about Knight Capital’s trading debacle or the ObamaCare website, just two famous recent problems with large-scale coding projects that aren’t open source.

Even when people are paid to fix bugs, they fix the kind of bug that causes the software to stop a lot sooner than the kind of bug that doesn’t make anything explode, lets people see information they shouldn’t see, and leaves no trace. So for every Knight Capital there are tons of other bugs in software that continue to exist.

In other words it’s more a question of who knows about the bugs and who can exploit them. And of course, whether those weaknesses will ever be exposed to the public at all.

It would be great to see the OpenSSL bug story become, over time, a success story. This would mean that, on the one hand, the nerds would become more vigilant in checking vitally important code and learn to think like assholes, and on the other hand, the public would acknowledge how freaking hard it is to program.

Two thoughts on math research papers

Today I’d like to mention two ideas I’ve been having recently on how to make being a research mathematician (even) more fun.

1) Mathematicians should consider holding public discussions about papers

First, math nerds, did you know that in statistics they have formal discussions about papers? It’s been a long-standing tradition of the Royal Statistical Society, whose motto is “Advancing the science and application of statistics, and promoting use and awareness for public benefit,” to choose papers by some criterion and then hold regular public discussions in which a few experts who are not the author talk about the paper. Then the author responds to their points and the whole conversation is published for posterity.

I think this is a cool idea for math papers too. One thing that kind of depressed me about math is how rarely you’d find people reading the same papers unless you specifically got a group of people together to do so, which was a lot of work. This way the work is done mostly by other people and more importantly the payoff is much better for them since everyone gets a view into the discussion.

Note I’m sidestepping who would organize this whole thing, and how the papers would be chosen exactly, but I’d expect it would improve the overall feeling that I had of being isolated in a tiny math community, especially if the conversations were meant to be penetrable.

2) There should be a good clustering method for papers around topics

This second idea may already be happening, but I’m going to say it anyway, and it could easily be a thesis for someone in CS.

Namely, the idea of using NLP and other such techniques to cluster math papers by topic. Right now the most obvious way to find a “nearby” paper is to look at the graph of papers by direct reference, but you’re probably missing out on lots of stuff that way. I think a different and possibly more interesting way would be to use the text in the title, abstract, and introduction to find papers with similar subjects.

This might be especially useful when you want to know the answer to a question like, “has anyone proved that such-and-such?” and you can do a text search for the statement of that theorem.
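As a bare-bones sketch of the text-similarity idea, here is TF-IDF weighting plus cosine similarity in plain Python. A real system would do something fancier. The four "abstracts" and the tiny stopword list are made up for illustration.

```python
import math
import re
from collections import Counter

STOPWORDS = {"of", "on", "and", "in", "the"}  # tiny illustrative list

def tokenize(text):
    """Lowercase the text and keep alphabetic words that are not stopwords."""
    return [w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS]

def tfidf_vectors(docs):
    """Weight each term by its count times log(N / number of docs containing it)."""
    counts = [Counter(tokenize(doc)) for doc in docs]
    doc_freq = Counter()
    for c in counts:
        doc_freq.update(c.keys())
    n = len(docs)
    return [{t: k * math.log(n / doc_freq[t]) for t, k in c.items()} for c in counts]

def cosine(u, v):
    """Cosine similarity between two sparse vectors stored as dicts."""
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0

def most_similar(index, vectors):
    """Index of the document closest to vectors[index], excluding itself."""
    scores = [(cosine(vectors[index], v), j) for j, v in enumerate(vectors) if j != index]
    return max(scores)[1]

# Hypothetical paper titles standing in for title + abstract + introduction
papers = [
    "Cohomology of flabby sheaves on schemes",
    "Flabby sheaves and derived functors in algebraic geometry",
    "Random walks on expander graphs",
    "Spectral gaps of expander graphs and mixing times",
]
vectors = tfidf_vectors(papers)
nearest_to_first = most_similar(0, vectors)
```

Rare, distinctive words get the biggest weights, which is why weird terminology is such a gift here.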

The good news here is that mathematicians are in love with terminology, and give weird names to things that make NLP techniques very happy. My favorite recent example which I hear Johan muttering under his breath from time to time is Flabby Sheaves. There’s no way that’s not a distinctive phrase.

The bad news is that such techniques won’t help at all in finding different fields that have come across the same idea but have different names for the relevant objects. But that’s OK, because it means there’s still lots of work for mathematicians.

By the way, back to the question of whether this has already been done. My buddy Max Lieblich has a website called MarXiv which is a wrapper over the math ArXiv and has a “similar” button. I have no idea what that button actually does though. In any case I totally dig the design of the similar button, and what I propose is just to have something like that work with NLP.

PDF Liberation Hackathon: January 17-19

This is a guest post by Marc Joffe, the principal consultant at Public Sector Credit Solutions, an organization that provides data and analysis related to sovereign and municipal securities. Previously, Joffe was a Senior Director at Moody’s Analytics.

As Cathy has argued, open source models can bring much needed transparency to scientific research, finance, education and other fields plagued by biased, self-serving analytics. Models often need large volumes of data, and if the model is to be run on an ongoing basis, regular data updates are required.

Unfortunately, many data sets are not ready to be loaded into your analytical tool of choice; they arrive in an unstructured form and must be organized into a consistent set of rows and columns. This cleaning process can be quite costly. Since open source modeling efforts are usually low dollar operations, the costs of data cleaning may prove to be prohibitive. Hence no open model – distortion and bias continue their reign.
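As a rough illustration of what “organizing into rows and columns” means in the easiest case, here is a minimal sketch that takes text already pulled out of a document and splits each line into columns wherever two or more spaces appear. The sample report lines and the function names are invented for illustration, and real PDF output is usually far messier than this.

```python
import csv
import io
import re


def lines_to_rows(text):
    """Split each non-blank line on runs of 2+ spaces and pad to equal width."""
    rows = [
        re.split(r"\s{2,}", line.strip())
        for line in text.splitlines()
        if line.strip()
    ]
    width = max((len(row) for row in rows), default=0)
    # Pad short rows with empty strings so every row has the same column count.
    return [row + [""] * (width - len(row)) for row in rows]


def rows_to_csv(rows):
    """Serialize rows to a CSV string (values containing commas get quoted)."""
    buffer = io.StringIO()
    csv.writer(buffer, lineterminator="\n").writerows(rows)
    return buffer.getvalue()


# Hypothetical lines, as they might look after text extraction.
raw_report = """
Pool balance      1,250,000   USD
Delinquent 30+    42,500      USD
Defaults          3,100
"""
table = lines_to_rows(raw_report)
csv_text = rows_to_csv(table)
```

The hard part, and the reason this cleaning is costly, is everything the sketch ignores: columns separated by a single space, values that wrap across lines, and layouts that change from one report to the next.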

Much data comes to us in the form of PDFs. Say, for example, you want to model student loan securitizations. You will be confronted with a large number of PDF servicing reports that look like this. A corporation or well funded research institution can purchase an expensive, enterprise-level ETL (Extract-Transform-Load) tool to migrate data from the PDFs into a database. But this is not much help to insurgent modelers who want to produce open source work.

Data journalists face a similar challenge. They often need to extract bulk data from PDFs to support their reporting. Examples include IRS Form 990s filed by non-profits and budgets issued by governments at all levels.

The data journalism community has responded to this challenge by developing software to harvest usable information from PDFs. One example is Tabula, a tool written by Knight-Mozilla OpenNews Fellow Manuel Aristarán, which extracts data from PDF tables in a form that can be readily imported into a spreadsheet – if the PDF was “printed” from a computer application. Introduced earlier this year, Tabula continues to evolve thanks to the volunteer efforts of Manuel, with help from OpenNews Fellow Mike Tigas and New York Times interactive developer Jeremy Merrill. Meanwhile, DocHive, a tool whose continuing development is being funded by a Knight Foundation grant, addresses PDFs that were created by scanning paper documents. DocHive is a project of Raleigh Public Record and is led by Charles and Edward Duncan.

These open source tools join a number of commercial offerings such as Able2Extract and ABBYY FineReader that extract data from PDFs. A more comprehensive list of open source and commercial resources is available here.

Unfortunately, the free and low cost tools available to modelers, data journalists and transparency advocates have limitations that hinder their ability to handle large scale tasks. If, like me, you want to submit hundreds of PDFs to a software tool, press “Go” and see large volumes of cleanly formatted data, you are out of luck.

It is for this reason that I am working with The Sunlight Foundation and other sponsors to stage the PDF Liberation Hackathon from January 17-19, 2014. We’ll have hack sites at Sunlight’s Washington DC office and at RallyPad in San Francisco. Developers can also join remotely because we will publish a number of clearly specified PDF extraction challenges before the hackathon.

Participants can work on one of the pre-specified challenges or choose their own PDF extraction projects. Ideally, hackathon teams will use (and hopefully improve upon) open source tools to meet the hacking challenges, but they will also be allowed to embed commercial tools into their projects as long as their licensing cost is less than $1000 and an unlimited trial is available.

Prizes of up to $500 will be awarded to winning entries. To receive a prize, a team must publish their source code on a GitHub public repository. To join the hackathon in DC or remotely, please sign up at Eventbrite; to hack with us in SF, please sign up via this Meetup. Please also complete our Google Form survey. Also, if anyone reading this is associated with an organization in New York or Chicago that would like to organize an additional hack space, please contact me.

The PDF Liberation Hackathon is going to be a great opportunity to advance the state of the art when it comes to harvesting data from public documents. I hope you can join us.

Cool open-source models?

I’m looking to develop my idea of open models, which I motivated here and started to describe here. I wrote the post in March 2012, but the need for such a platform has only become more obvious.

I’m lucky to be working with a super fantastic python guy on this, and the details are under wraps, but let’s just say it’s exciting.

So I’m looking to showcase a few good models to start with, preferably in python, but the critical ingredient is that they’re open source. They don’t have to be great, because the point is to see their flaws and possibly to improve them.

- For example, I put in a FOIA request a couple of days ago to get the current teacher value-added model from New York City.

- A friend of mine, Marc Joffe, has an open source municipal credit rating model. It’s not in python but I’m hopeful we can work with it anyway.

- I’m in search of an open source credit scoring model for individuals. Does anyone know of something like that?

- They don’t have to be creepy! How about a Nate Silver – style weather model?

- Or something that relies on open government data?

- Can we get the Reinhart-Rogoff model?

The idea here is to get the model, not necessarily the data (although even better if it can be attached to data and updated regularly). And once we get a model, we’d build interactives with the model (like this one), or at least the tools to do so, so other people could build them.

At its core, the point of open models is this: you don’t really know what a model does until you can interact with it. You don’t know if a model is robust unless you can fiddle with its parameters and check. And finally, you don’t know if a model is the best possible unless you’ve let people try to improve it.

Crisis Text Line: Using Data to Help Teens in Crisis

This morning I’m helping out at a datadive event set up by DataKind (apologies to Aunt Pythia lovers).

The idea is that we’re analyzing metadata around a texting hotline for teens in crisis. We’re trying to see if we can use the information we have on these texts (timestamps, character length, topic – which is most often suicide – and outcome reported by both the texter and the counselor) to help the counselors improve their responses.

For example, right now counselors can be in up to 5 conversations at a time – is that too many? Can we figure that out from the data? Is there too much waiting between texts? Other questions are listed here.
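As a first step toward the “is 5 too many?” question, here is a minimal sketch of how one might measure how many conversations each counselor actually has open at once. The record layout (counselor id, start time, end time) and the sample numbers are assumptions for illustration, not the real Crisis Text Line schema.

```python
from collections import defaultdict


def peak_concurrency(conversations):
    """Map each counselor to the largest number of conversations open at once.

    `conversations` is an iterable of (counselor_id, start, end) tuples,
    with start < end in any consistent time unit (minutes here).
    """
    events = defaultdict(list)
    for counselor, start, end in conversations:
        events[counselor].append((start, 1))   # a conversation opens
        events[counselor].append((end, -1))    # a conversation closes
    peaks = {}
    for counselor, counselor_events in events.items():
        open_now = 0
        peak = 0
        # Sorting tuples puts a close (-1) before an open (+1) at the same
        # time, so back-to-back conversations do not count as overlapping.
        for _, delta in sorted(counselor_events):
            open_now += delta
            peak = max(peak, open_now)
        peaks[counselor] = peak
    return peaks


# Made-up conversations: (counselor, start minute, end minute).
sample = [
    ("a", 0, 30),
    ("a", 10, 40),
    ("a", 30, 50),
    ("b", 0, 20),
    ("b", 20, 35),
]
peaks = peak_concurrency(sample)
```

Once you have a concurrency number like this attached to each conversation, the natural next move is to compare reported outcomes across concurrency levels, keeping in mind that busy periods may differ from quiet ones in other ways too.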

Our “hackpad” is located here, and will hopefully be updated like a wiki with results and visuals from the exploration of our group. It looks like we have a pretty amazing group of nerds over here looking into this (mostly python users!), and I’m hopeful that we will be helping the good people at Crisis Text Line.

A.F.R. Transparency Panel coming up on Friday in D.C.

I’m preparing for a short trip to D.C. this week to take part in a day-long event held by Americans for Financial Reform. You can get the announcement here online, but I’m not sure what the finalized schedule of the day is going to be. Also, I believe it will be recorded, but I don’t know the details yet.

In any case, I’m psyched to be joining this, and the AFR are great guys doing important work in the realm of financial reform.

——

Opening Wall Street’s Black Box: Pathways to Improved Financial Transparency

Sponsored By Americans for Financial Reform and Georgetown University Law Center

Keynote Speaker: Gary Gensler, Chair, Commodity Futures Trading Commission

October 11, 2013 10 AM – 3 PM

Georgetown Law Center, Gewirz Student Center, 12th Floor

120 F Street NW, Washington, DC (Judiciary Square Metro) (Space is limited. Please RSVP to AFRtransparencyrsvp@gmail.com)

The 2008 financial crisis revealed that regulators and many sophisticated market participants were in the dark about major risks and exposures in our financial system. The lack of financial transparency enabled large-scale fraud and deception of investors, weakened the stability of the financial system, and contributed to the market failure after the collapse of Lehman Brothers. Five years later, despite regulatory efforts, it’s not clear how much the situation has improved.

Join regulators, market participants, and academic experts for an exploration of the progress made – and the work that remains to be done – toward meaningful transparency on Wall Street. How can better information and disclosure make the financial system both fairer and safer?

Panelists include:

- Jesse Eisinger, Pulitzer Prize-winning reporter for the New York Times and ProPublica

- Zach Gast, Head of financial sector research, Center on Financial Research and Analysis

- Amias Gerety, Deputy Assistant Secretary for the FSOC, United States Treasury

- Henry Hu, Allan Shivers Chair in the Law of Banking and Finance, University of Texas Law School

- Albert “Pete” Kyle, Charles E. Smith Professor of Finance, University of Maryland

- Adam Levitin, Professor of Law, Georgetown University Law Center

- Antoine Martin, Vice President, New York Federal Reserve Bank

- Brad Miller, Former Representative from North Carolina; Of Counsel, Grais & Ellsworth

- Cathy O’Neil, Senior Data Scientist, Johnson Research Labs; Occupy Alternative Banking

- Gene Phillips, Director, PF2 Securities Evaluation

- Greg Smith, Author of “Why I Left Goldman Sachs”; former Goldman Sachs Executive Director

A Code of Conduct for data scientists from the Bellagio Fellows

The 2013 PopTech & Rockefeller Foundation Bellagio Fellows – Kate Crawford, Patrick Meier, Claudia Perlich, Amy Luers, Gustavo Faleiros and Jer Thorp – yesterday published “Seven Principles for Big Data and Resilience Projects” on Patrick Meier’s blog iRevolution.

Although they claim that these principles are meant for “best practices for resilience building projects that leverage Big Data and Advanced Computing,” I think they’re more general than that (although I’m not sure exactly what a resilience building project is), and I really like them. They are looking for public comments too. Go to the post for the full description of each, but here is a summary:

1. Open Source Data Tools

Wherever possible, data analytics and manipulation tools should be open source, architecture independent and broadly prevalent (R, python, etc.).

2. Transparent Data Infrastructure

Infrastructure for data collection and storage should operate based on transparent standards to maximize the number of users that can interact with the infrastructure.

3. Develop and Maintain Local Skills

Make “Data Literacy” more widespread. Leverage local data labor and build on existing skills.

4. Local Data Ownership

Use Creative Commons and licenses that state that data is not to be used for commercial purposes.

5. Ethical Data Sharing

Adopt existing data sharing protocols like the ICRC’s (2013). Permission for sharing is essential. How the data will be used should be clearly articulated. An opt in approach should be the preference wherever possible, and the ability for individuals to remove themselves from a data set after it has been collected must always be an option.

6. Right Not To Be Sensed

Local communities have a right not to be sensed. Large scale city sensing projects must have a clear framework for how people are able to be involved or choose not to participate.

7. Learning from Mistakes

Big Data and Resilience projects need to be open to face, report, and discuss failures.

Learning accounting

There are lots of things I know nothing at all about. It annoys me not to understand a subject at all, because it often means I can’t follow a conversation that I care about. The list includes, just as a start: accounting, law, and politics.

Of those three, accounting seems like the easiest thing to tackle by far. This is partly because the space between what it’s theoretically supposed to be and how it’s practiced is smaller than with law or politics. Or maybe the kind of tricks accountants use seem closer to the kind of tricks I know about from being a quant, so that space seems easier to navigate for me personally.

Anyway, I might be wrong, but my impression is that my lack of understanding of accounting is mostly a language barrier, rather than a conceptual problem. There are expenses, and revenue, and lots of tax issues. There are categories. I’m working on the assumption that none of this stuff is exactly mathematical either, it’s all about knowing what things are called. And I don’t know any of it.

So I just signed up to learn at least some of it on a free Coursera course from the Wharton MBA Foundation Series. Here’s the introductory video; the professor seems super nerdy and goofy, which is a good start.

So in my copious free time I’ll be watching videos explaining the language of tax deferment and the like. Or at least that’s the fantasy – the thing about Coursera is that it’s free, so there’s not much incentive to keep up with the course. And the fact that all four Wharton 1st-year courses are being given away for free is proof of something, by the way – possibly that what you’re really paying for in business school is the connections you make while you’re there.

Finance and open source

I want to bring up two quick topics this morning that I’ve been mulling over lately, both related to this recent post by economist Rajiv Sethi from Barnard (h/t Suresh Naidu), who happened to be my assigned faculty mentor when I was an assistant prof there. I have mostly questions and few answers right now.

In his post, Sethi talks about former computer nerd for Goldman Sachs Sergey Aleynikov and his trial, which was chronicled by Michael Lewis recently. See also this related interview with Lewis, h/t Chris Wiggins.

I haven’t read Lewis’s piece yet, only his interview and Sethi’s reaction. But I can tell it’ll be juicy and fun, as Lewis usually is. He’s got a way with words and he’s bloodthirsty, always an entertaining combination.

So, the two topics.

First off, let’s talk a bit about high frequency trading, or HFT. My first two questions are, who does HFT benefit and what does HFT cost? For both of these, there’s the easy answer and then there’s the hard answer.

Easy answer for HFT benefitting someone: primarily the people who make loads of money off of it, including the hardware industry and the people who get paid to drill through mountains with cables to make connections between Chicago and New York faster.

Secondarily, market participants whose fees have been lowered because of the tight market-making brought about by HFT, although that savings may be partially undone by the way HFT’ers operate to pick off “dumb money” participants. After all, you say market making, I say arbing. Sorting out the winners, especially when you consider times of “extreme market conditions”, is where it gets hard.

Easy answer for the costs of HFT: what companies spend on IT, infrastructure, and people to do the work, although to be sure they wouldn’t be willing to make that investment if they didn’t expect it to pay off.

A harder and more complete answer would involve how much risk we take on as a society when we build black boxes that we don’t understand and let them collide with each other with our money, as well as possibly a guess at what those people and resources now doing HFT might be doing otherwise.

And that brings me to my second topic, namely the interaction between the open source community and the finance community, but mostly the HFTers.

Sethi said it well (Cathy: see bottom of this for an update) this way in his post:

Aleynikov relied routinely on open-source code, which he modified and improved to meet the needs of the company. It is customary, if not mandatory (Cathy: see bottom of this for an update), for these improvements to be released back into the public domain for use by others. But his attempts to do so were blocked:

Serge quickly discovered, to his surprise, that Goldman had a one-way relationship with open source. They took huge amounts of free software off the Web, but they did not return it after he had modified it, even when his modifications were very slight and of general rather than financial use. “Once I took some open-source components, repackaged them to come up with a component that was not even used at Goldman Sachs,” he says. “It was basically a way to make two computers look like one, so if one went down the other could jump in and perform the task.” He described the pleasure of his innovation this way: “It created something out of chaos. When you create something out of chaos, essentially, you reduce the entropy in the world.” He went to his boss, a fellow named Adam Schlesinger, and asked if he could release it back into open source, as was his inclination. “He said it was now Goldman’s property,” recalls Serge. “He was quite tense. When I mentioned it, it was very close to bonus time. And he didn’t want any disturbances.”

This resonates with my experience at D.E. Shaw. We used lots of python stuff, and as a community were working at the edges of its capabilities (not me, I didn’t do fancy HFT stuff, my models worked at a much longer time frame of at least a few hours between trades).

The urge to give back to the OS community was largely thwarted, when it came up at all, because there was a fear, or at least an argument, that somehow our competition would use it against us, to eliminate our edge, even if it was an invention or tool completely sanitized from the actual financial algorithm at hand.

A few caveats: First, I do think that stuff, i.e. python technology and the like, eventually gets out to the open source domain even if people are consistently thwarting it. But it’s incredibly slow compared to what you might expect.

Second, it might be the case that python developers working outside of finance are actually much better at developing good tools for python, especially if they have some interaction with finance but don’t work inside. I’m guessing this because, as a modeler, you have a very selfish outlook and only want to develop tools for your particular situation. In other words, you might have some really weird looking tools if you did see a bunch coming from finance.

Finally, I think I should mention that quite a few people I knew at D.E. Shaw have since left and are now actively contributing to the open source community. So it’s a lagged contribution but a contribution nonetheless, which is nice to see.

Update: from my Facebook page, a discussion of the “mandatoriness” of giving back to the OS community from my brother Eugene O’Neil, super nerd, and friend William Stein, other super nerd:

Eugene O’Neil: the GPL says that if you give someone a binary executable compiled with GPL source code, you also have to provide them free access to all the source code used to generate that binary, under the terms of the GPL. This makes the commercial sale of GPL binaries without source code illegal. However, if you DON’T give anyone outside your organization a binary, you are not legally required to give them the modified source code for the binary you didn’t give them. That being said, any company policy that tries to explicitly PROHIBIT employees from redistributing modified GPL code is in a legal gray area: the loophole works best if you completely trust everyone who has the modified code to simply not want to distribute it.

William Stein: Eugene — You are absolutely right. The “mandatory” part of the quote: “It is customary, if not mandatory, for these improvements to be released back into the public domain for use by others.” from Cathy’s article is misleading. I frequently get asked about this sort of thing (because of people using Sage (http://sagemath.org) for web backends, trading, etc.). I’m not aware of any popular open source license that make it mandatory to give back changes if you use a project internally in an organization (let alone the GPL, which definitely doesn’t). The closest is AGPL, which involves external use for a website. Cathy — you might consider changing “Sethi said it well…”, since I think his quote is misleading at best. I’m personally aware of quite a few people that do use Sage right now who wouldn’t otherwise if Sethi’s statement were correct.