Open Models (part 2)

In my first post about open models, I argued that something needs to be done but I didn’t really say what.

This morning I want to outline how I see an open model platform working, although I won’t be able to resist mentioning a few more reasons we urgently need this kind of thing to happen.

The idea is for the platform to have easy interfaces both for modelers and for users. I’ll tackle these one at a time.

Modeler

Say I’m a modeler. I just wrote a paper on something that used a model, and I want to open source my model so that people can see how it works. I go to this open source platform and I click on “new model”. It asks for source code, as well as which version of which open source language (and exactly which packages) it’s written in. I feed it the code.

It then asks for the data and I either upload the data or I give it a url which tells the platform the location of the data. I also need to explain to the platform exactly how to transform the data, if at all, to prepare it for feeding to the model. This may require code as well.

Next, I specify the extent to which the data needs to stay anonymous (hopefully not at all, but sometimes in the case of medical data or something, I need to place security around the data). These anonymity limits restrict the kinds of visualizations and results that users can request, but they don’t restrict the model’s overall aggregated results.

Finally, I specify which parameters in my model were obvious “choices” (like tuning parameters, or prior strengths, or thresholds I chose for cleaning data). This is helpful but not necessary, since other people will be able to come along later and add things. Specifically, they might try out new things like how many signals to use, which ones to use, and how to normalize various signals.
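To make the submission steps above concrete, here is a minimal sketch of what the “new model” form might collect. Everything in it is hypothetical: the `ModelSubmission` and `Choice` names, the field names, and the example values are assumptions for illustration, not the interface of any existing platform.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Choice:
    """A parameter the modeler flags as a judgment call (hypothetical)."""
    name: str
    value: float
    note: str = ""


@dataclass
class ModelSubmission:
    """What a "new model" form might collect (all fields are assumptions)."""
    source_path: str                       # where the model code lives
    language: str                          # open source language used
    language_version: str                  # exact version of that language
    packages: Dict[str, str]               # package name -> exact version
    data_location: str                     # uploaded file or a url
    transform_path: Optional[str] = None   # code that prepares the data, if any
    anonymous_data: bool = False           # True if raw rows must stay hidden
    choices: List[Choice] = field(default_factory=list)

    def missing_fields(self) -> List[str]:
        """Names of required text fields left empty, so the form can ask again."""
        required = {
            "source_path": self.source_path,
            "language": self.language,
            "language_version": self.language_version,
            "data_location": self.data_location,
        }
        return [name for name, value in required.items() if not value]


# Example values are made up for illustration.
submission = ModelSubmission(
    source_path="model.py",
    language="python",
    language_version="3.11",
    packages={"numpy": "1.26.4"},
    data_location="",  # not supplied yet
)
submission.choices.append(Choice("prior_strength", 2.0, "picked by eye"))
print(submission.missing_fields())
```

Flagging choices is optional in this sketch, matching the idea that other people can add them later.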

That’s it, I’m done, and just to be sure I “play” the model and make sure that the results jibe with my published paper. The platform has a built-in suite of visualization tools and metrics of success, and I choose the ones that emphasize the good news for my model. I’ve created an instance of my model which is available for anyone to take a look at. This alone would be major progress, and the technology already exists for some languages.

User

Now say I’m a user. First of all, I want to be able to retrain the model and confirm the results, or see a record that this has already been done.

Next, I want to be able to see what the model predicts for a given set of input data (that I supply). Specifically, if I’m a teacher and this is the open-sourced value-added teacher model, I’d like to see how my score would have varied if I’d had 3 fewer students, or if they had had free school lunches, or if I’d been teaching in a different district. If there were a bunch of different models, I could see what scores my data would have produced in different cities or different years in my city. This is a good start for a robustness test for such models.
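The “what if” questions above could be sketched roughly like this. The `toy_score` formula is a made-up stand-in with invented coefficients, not a real value-added model, and `what_if` is a hypothetical helper name; the point is only the shape of the comparison.

```python
from typing import Callable, Dict, List, Tuple

Features = Dict[str, float]


def toy_score(features: Features) -> float:
    """Stand-in model: an invented linear formula, NOT a real value-added model."""
    return (
        50.0
        + 0.5 * features["class_size"]
        - 10.0 * features["free_lunch_share"]
    )


def what_if(
    model: Callable[[Features], float],
    base: Features,
    scenarios: Dict[str, Features],
) -> List[Tuple[str, float, float]]:
    """Score each scenario and report (label, score, change from baseline)."""
    baseline = model(base)
    rows = [("baseline", baseline, 0.0)]
    for label, overrides in scenarios.items():
        changed = {**base, **overrides}  # copy the base inputs, then apply changes
        score = model(changed)
        rows.append((label, score, score - baseline))
    return rows


# Hypothetical teacher data.
my_class = {"class_size": 30.0, "free_lunch_share": 0.2}
results = what_if(
    toy_score,
    my_class,
    {
        "3 fewer students": {"class_size": 27.0},
        "more free lunches": {"free_lunch_share": 0.6},
    },
)
for label, score, delta in results:
    print(f"{label:20s} score={score:6.2f} change={delta:+.2f}")
```

A large change from a small tweak to the inputs is exactly the kind of thing a teacher would want to see.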

If I’m also a modeler, I’d like to be able to play with the model itself. For example, I’d like to tweak the choices that have been made by the original modeler and retrain the model, seeing how different the results are. I’d like to be able to provide new data, or a new url for data, along with instructions for using the data, to see how this model would fare on new training data. Or I’d like to think of this new data as updating the model.

This way I get to confirm the results of the model, but also see how robust the model is under various conditions. If the overall result holds only when you exclude certain outliers and have a specific prior strength, that’s not good news.
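Here is a rough sketch of that kind of robustness check, assuming the modeler flagged two choices: an outlier cutoff and a prior strength. The “training” step is a deliberately tiny stand-in (a mean shrunk toward zero), and all names and numbers are hypothetical.

```python
from itertools import product
from typing import Dict, Iterable, List, Tuple


def fit_effect(values: List[float], outlier_cutoff: float, prior_strength: float) -> float:
    """Toy "training": drop outliers, then shrink the mean toward zero.

    prior_strength acts like that many extra observations equal to zero.
    """
    kept = [v for v in values if abs(v) <= outlier_cutoff]
    denominator = len(kept) + prior_strength
    return sum(kept) / denominator if denominator else 0.0


def sweep(
    values: List[float],
    cutoffs: Iterable[float],
    priors: Iterable[float],
) -> Dict[Tuple[float, float], float]:
    """Retrain once for every combination of the flagged choices."""
    return {
        (cutoff, prior): fit_effect(values, cutoff, prior)
        for cutoff, prior in product(cutoffs, priors)
    }


def sign_is_stable(results: Dict[Tuple[float, float], float]) -> bool:
    """True if every retrained estimate agrees on being positive or not."""
    return len({estimate > 0 for estimate in results.values()}) == 1


# Made-up observations with one large outlier.
observations = [1.0, 2.0, -1.0, 1.5, -12.0]
results = sweep(observations, cutoffs=[5.0, 20.0], priors=[0.0, 1.0])
for (cutoff, prior), estimate in sorted(results.items()):
    print(f"cutoff={cutoff:5.1f} prior={prior:3.1f} estimate={estimate:+.3f}")
print("sign stable across choices:", sign_is_stable(results))
```

In this invented data the headline effect flips sign depending on whether the outlier is excluded, which is the “not good news” case.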

I can also change the model more fundamentally. I can make a copy of the model, and add another predictor from the data or from new data, and retrain the model and see how this new model performs. I can change the way the data is normalized. I can visualize the results in an entirely different way. Or whatever.

Depending on the anonymity constraints of the original data, there are things I may not be able to ask as a user. However, most aggregated results should be allowed, in particular the final model with its coefficients.
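One simple way such a constraint could work is a minimum group size: the platform answers an aggregate question only when enough rows stand behind it. The sketch below assumes that rule, and the threshold of 5 and the function name are hypothetical. A minimum group size on its own is not a full privacy guarantee; it only illustrates how some queries get refused while aggregates go through.

```python
from statistics import mean
from typing import Dict, List, Optional

MIN_GROUP_SIZE = 5  # hypothetical threshold chosen by the modeler


def group_average(
    rows: List[Dict[str, object]],
    group_key: str,
    value_key: str,
    min_group_size: int = MIN_GROUP_SIZE,
) -> Dict[str, Optional[float]]:
    """Average value per group, withholding (None) groups that are too small."""
    groups: Dict[str, List[float]] = {}
    for row in rows:
        groups.setdefault(str(row[group_key]), []).append(float(row[value_key]))
    return {
        name: (mean(values) if len(values) >= min_group_size else None)
        for name, values in groups.items()
    }


# Made-up rows: district A has five records, district B only two.
rows = [{"district": "A", "score": s} for s in (60.0, 62.0, 64.0, 66.0, 68.0)]
rows += [{"district": "B", "score": s} for s in (55.0, 75.0)]
print(group_average(rows, "district", "score"))
```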

Records

As a user, when I play with a model, there is an anonymous record kept of what I’ve done, which I can choose to put my name on. On the one hand this is useful for users because if I’m a teacher, I can fiddle with my data and see how my score changes under various conditions, and if it changes radically, I have a way of referencing this when I write my op-ed in the New York Times. If I’m a scientist trying to make a specific point about some published result, there’s a way for me to reference my work.

On the other hand this is useful for the original modelers, because if someone comes along and improves my model, then I have a way of seeing how they did it. This is a way to crowdsource modeling.

Note that this is possible even if the data itself is anonymous, because everyone in sight could just be playing with the model itself and only have metadata information.
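A record like this could be as small as the sketch below: what was changed, a summary of what came out, and an optional name. The `RunRecord` class and its fields are hypothetical, and using a short SHA-256 digest as the citable reference is just one assumption about how referencing might work. Note that the record holds only metadata about the run, not the underlying data.

```python
import hashlib
import json
from dataclasses import dataclass, replace
from typing import Dict, Optional


@dataclass(frozen=True)
class RunRecord:
    """One user's experiment with a model (hypothetical record format)."""
    model_id: str
    changes: Dict[str, float]          # which choices were tweaked, and to what
    result_summary: Dict[str, float]   # aggregate results only, no raw data
    author: Optional[str] = None       # None means the record stays anonymous

    def reference(self) -> str:
        """Short id to cite; it depends on the work done, not on who did it."""
        payload = json.dumps(
            {
                "model_id": self.model_id,
                "changes": self.changes,
                "result_summary": self.result_summary,
            },
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]

    def signed_by(self, name: str) -> "RunRecord":
        """Return a copy of the record with a name attached."""
        return replace(self, author=name)


# Made-up example values.
record = RunRecord(
    model_id="value-added-demo",
    changes={"prior_strength": 1.0},
    result_summary={"score_change": -1.5},
)
signed = record.signed_by("A. Teacher")
print(record.reference(), record.author, signed.author)
```

Because the reference ignores the author, a user can cite the record anonymously first and put a name on it later without the citation changing.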

More on why we need this

First, I really think we need a better credit rating system, and so do some guys in Europe. From the New York Times article (emphasis mine):

Last November, the European Commission proposed laws to regulate the ratings agencies, outlining measures to increase transparency, to reduce the bloc’s dependence on ratings and to tackle conflicts of interest in the sector.

But it’s not just finance that needs this. The entirety of science publishing is in need of more transparent models. From the Nature article’s abstract:

Scientific communication relies on evidence that cannot be entirely included in publications, but the rise of computational science has added a new layer of inaccessibility. Although it is now accepted that data should be made available on request, the current regulations regarding the availability of software are inconsistent. We argue that, with some exceptions, anything less than the release of source programs is intolerable for results that depend on computation. The vagaries of hardware, software and natural language will always ensure that exact reproducibility remains uncertain, but withholding code increases the chances that efforts to reproduce results will fail.

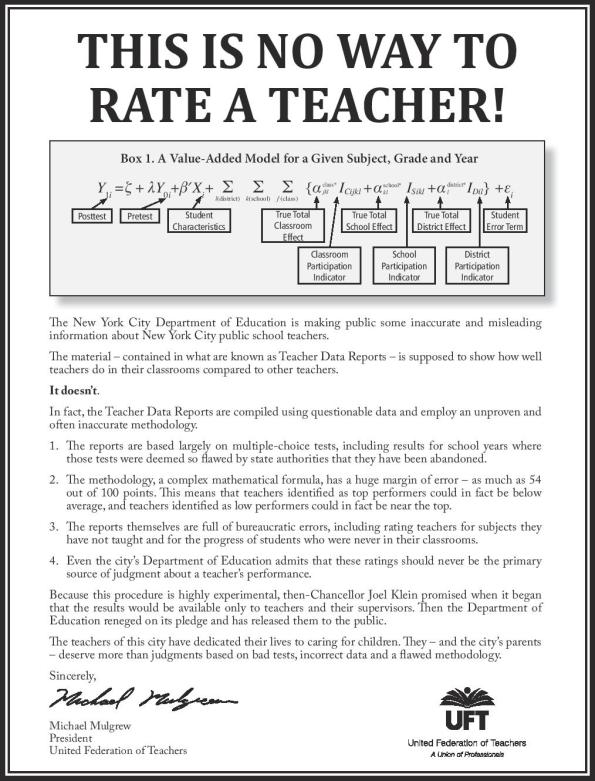

Finally, the field of education is going through a revolution, and it’s not all good. Teachers are being humiliated and shamed by weak models, which very few people actually understand. Here’s what the teachers’ union has just put out to prove this point:

Sounds pretty good … but … you need to have some way in which models built on personal data can be uploaded without violating the privacy of the people described in the data. You have discussed the techniques that might be used in previous posts. You need these to be built into your portal.

Agreed, but I did think I discussed this a bit. I’ve updated the post with more on it 🙂

Check out Steve Keen’s work at http://www.debtdeflation.com/blogs/.

He is doing that already.

What happens when people have models that are incommensurate, or overlap?

Are the models silo’ed, or are there crosswalks between them?

Ideally they would all be easily accessible. I’m not sure what you mean by crosswalks between models though.

And as for the formula that putatively rates teachers, the assumption seems to be that the ratings matter. They don’t. It’s the fact of rating that matters. The objective of the program is obviously to privatize the entire school system, and to that end, life is made as unpleasant as possible for teachers. (Rather the same thing is happening to universities. Ask any adjunct.) From that perspective, arbitrary yet “high stakes” testing for teachers is a feature, and not a bug.

The goal with open-sourcing value-added teaching is to show that the emperor has no clothes.

I use APL, I’m quite happy for others to see my models, but they can’t understand them 😉
