Archive

Marc Andreessen and Al3x Payne

My friend Chris Wiggins just sent me this recent letter by Alex “Al3x” Payne in response to this recent post by Marc Andreessen. Andreessen’s original post is entitled This is Probably a Good Time to Say That I Don’t Believe Robots Will Eat All the Jobs… and the rebuttal is entitled simply Dear Marc Andreessen.

To get a flavor of the exchange, we’ll start with this from Andreessen:

What never gets discussed in all of this robot fear-mongering is that the current technology revolution has put the means of production within everyone’s grasp. It comes in the form of the smartphone (and tablet and PC) with a mobile broadband connection to the Internet. Practically everyone on the planet will be equipped with that minimum spec by 2020.

versus this from Payne:

If we’re gonna throw around Marxist terminology, though, can we at least keep Karl’s ideas intact? Workers prosper when they own the means of production. The factory owner gets rich. The line worker, not so much.

Owning a smartphone is not the equivalent of owning a factory. I paid for my iPhone in full, but Apple owns the software that runs on it, the patents on the hardware inside it, and the exclusive right to the marketplace of applications for it.

…

You spent a lot of paragraphs on back-of-the-napkin economics describing the coming Awesome Robot Future, addressing the hypotheticals. What you left out was the essential question: who owns the robots?

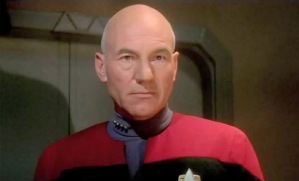

Please read both the original post and the rebuttal in their entireties. At its heart, their conversation strikes me as a somewhat more contentious version of the argument I’ve had with myself about the utopia envisioned in Star Trek.

Namely, at some point we’ll have all these robots doing stuff for us, but how are we going to spread that wealth around? Who owns the robots and when are they going to learn to share? In this vision of the distant future, that critical “singularity of moral enlightenment” (SME) is never explained. I wish I could ask Captain Picard how it all went down.

It’s one thing to lack an explanation for the SME, and to consider it an aspirational quasi-religious utopian goal, but it’s another thing entirely to fail to acknowledge it.

That someone as powerful and famous as Marc Andreessen, who is personally involved in the development and nurturing of so many technology platforms, has trouble seeing the logical inconsistency of his own rhetoric can only be explained by the fact that, as the controller of such platforms, it is he who reaps their benefits. It’s yet another case of someone thinking “this system works for me therefore it is super awesome for everyone and everything, amen.”

I’m hoping Al3x’s fine response will get Marc to consider how SME is gonna happen, and when.

The politics of data mining

At first glance, data miners inside governments, start-ups, corporations, and political campaigns are all doing basically the same thing. They’ll all need great engineering infrastructure, good clean data, a working knowledge of statistical techniques and enough domain knowledge to get things done.

We’ve seen recent articles that are evidence for this statement: Facebook data people move to the NSA or other government agencies easily, and Obama’s political campaign data miners have launched a new data mining start-up. I am a data miner myself, and I could honestly work at any of those places – my skills would translate, if not my personality.

I do think there are differences, though, and here I’m not talking about ethics or trust issues, I’m talking about pure politics[1].

Namely, the world of data mining is divided into two broad categories: people who want to cause things to happen and people who want to prevent things from happening.

I know that sounds incredibly vague, so let me give some examples.

In start-ups, irrespective of what you’re actually doing (what you’re actually doing is probably incredibly banal, like getting people to click on ads), you feel like you’re the first person ever to do it, at least on this scale, or at least with this dataset, and that makes it technically challenging and exciting.

Or, even if you’re not the first, at least what you’re creating or building is state-of-the-art and is going to be used to “disrupt” or destroy lagging competition. You feel like a motherfucker, and it feels great[2]!

The same thing can be said for Obama’s political data miners: if you read this article, you’ll know they felt like they’d invented a new field of data mining, and a cult along with it, and it felt great! And although it’s probably not true that they did something all that impressive technically, in any case they did a great job of applying known techniques to a different data set, and they got lots of people to allow access to their private information based on their trust of Obama, and they mined the fuck out of it to persuade people to go out and vote and to go out and vote for Obama.

Now let’s talk about corporations. I’ve worked in enough companies to know that “covering your ass” is a real thing, and can overwhelm a given company’s other goals. And the larger the company, the more the fear sets in and the more time is spent covering one’s ass and less time is spent inventing and staying state-of-the-art. If you’ve ever worked in a place where it takes months just to integrate two different versions of SalesForce you know what I mean.

Those corporate people have data miners too, and in the best case they are somewhat protected from the conservative, risk averse, cover-your-ass atmosphere, but mostly they’re not. So if you work for a pharmaceutical company, you might spend your time figuring out how to draw up the numbers to make them look good for the CEO so he doesn’t get axed.

In other words, you spend your time preventing something from happening rather than causing something to happen.

Finally, let’s talk about government data miners. If there’s one thing I learned when I went to the State Department Tech@State “Moneyball Diplomacy” conference a few weeks back, it’s that they are the most conservative of all. They spend their time worrying about a terrorist attack and how to prevent it. It’s all about preventing bad things from happening, and that makes for an atmosphere where causing good things to happen takes a rear seat.

I’m not saying anything really new here; I think this stuff is pretty uncontroversial. Maybe people would quibble over when a start-up becomes a corporation (my answer: mostly they never do, but certainly by the time of an IPO they’ve already done it). Also, of course, there are ass-coverers in start-ups and there are risk-takers in corporations and maybe even in government, but they don’t dominate.

If you think through things in this light, it makes sense that Obama’s data miners didn’t want to stay in government and decided to go work on advertising stuff. And although they might have enough clout and buzz to get hired by a big corporation, I think they’ll find it pretty frustrating to be dealing with the cover-my-ass types that will hire them. It also makes sense that Facebook, which spends its time making sure no other social network grows enough to compete with it, works so well with the NSA.

1. If you want to talk ethics, though, join me on Monday at Suresh Naidu’s Data Skeptics Meetup where he’ll be talking about Political Uses and Abuses of Data.

Explain your revenue model to me so I’ll know how I’m paying for this free service

When you find a website that claims to be free for users, you should know to be automatically suspicious. What is sustaining this service? How could you possibly have 35 people working at the underlying company without a revenue source?

We’ve been trained to not think about this, as web surfers, because everything seems, on its face, to be free, until it isn’t, which seems outright objectionable (as I wrote about here). Or is it? Maybe it’s just more honest.

When I go to the newest free online learning site, I’d like to know how they plan to eventually make money. If I’m registering on the site, do I need to worry that they will turn around and sell my data? Is it just advertising? Are they going to keep the good stuff away from me unless I pay?

And it’s not enough to tell me it’s making no revenue yet, that it’s being funded somehow for now without revenue. Because wherever there is funding, there are strings attached.

If the NSF has given a grant for this project, then you can bet the project never involves attacking the NSF for incompetence and politics. If it’s a VC firm, then you’d better believe they are actively figuring out how to make a major return on their investment. So even if they’re not selling your registration and click data now, they have plans for it.

So in other words, I want to know how you’re being funded, who’s giving you the money, and what your revenue model is. Unless you are independently wealthy and want to give back to the community by slaving away on a project, or you’re doing it in your spare time, then I know I’m somehow paying for this.

Just in the spirit of disclosure and transparency, I have no income and I pay a bit for my WordPress site.

Why the internet is creepy

Recently I’ve been seeing various articles and opinion pieces that say that Facebook should pay its users to use it, or give a cut of the proceeds when they sell personal data, or something along those lines.

This strikes me as naive to a surprising degree; it means people really don’t understand how web businesses work. How can people simultaneously complain that Facebook isn’t a viable business and that it doesn’t pay its users for their data?

People have gotten used to getting free services, and they assume that infrastructure somehow just exists, and they want to have that infrastructure, and use it, and never see ads and never have their data used, or get paid whenever someone uses their data.

But you can’t have all of that at the same time!

These companies need to monetize somehow, and instead of asking users for money directly, which isn’t the current culture, they get creepy with data. The fact that there are basically no rules about personal information (aside from some medical information) means that the creepiness limit is extreme, and possibly hasn’t been reached yet.

What are the alternatives? I can think of a few, none of them particularly wonderful:

- Legislate privacy laws to make personal data sharing or storing illegal without explicit consent for each use (right now you just sign away all your rights at once when you sign up for the service, but that could and probably should change). This would kill the internet as we know it. In the short term the consequences would be extreme. Besides the fact that some people would save and use data illegally, which would be very hard to track and to stop, places like Twitter, Facebook, and Google would have no revenue model. It’s an interesting thought experiment to consider what would happen after this.

- Make people pay for services, either through micro-payments or subscription services like Netflix. This would maybe work, but only for people with credit cards and money to spare. So it would also change access to the internet, and not in a good way.

- Wikipedia-style donation-based services. This is clearly a tough model, and they always seem to be on the edge of solvency.

- Get the government to provide these services as meaningful infrastructure for society, like highways. Imagine what Google Government would be like.

- Some combination of the above.

Am I missing something?

Resampling

I’m enjoying reading and learning about agile software development, which is a method of creating software in teams where people focus on short and medium term “iterations”, with the end goal in sight but without attempting to map out the entire path to that end goal. It’s an excellent idea considering how much time can be wasted by businesses in long-term planning that never gets done. And the movement has its own manifesto, which is cool.

The post I read this morning is by Mike Cohn, who seems heavily involved in the agile movement. It’s a good post, with a good idea, and I have just one nerdy pet peeve concerning it.

I’m a huge fan of stealing good ideas from financial modeling and importing them into other realms. For example, I stole the idea of stress testing portfolios and use it to stress test the business itself where I work, replacing scenarios like “the Dow drops 9% in a day” with things like, “one of our clients drops out of the auction.”

I’ve also stolen the idea of “resampling” in order to forecast possible future events based on past data. This is particularly useful when the data you’re handling is not normally distributed, and when you have quite a few data points.

To be more precise, say you want to anticipate what will happen over the next week (5 days) with something. You have 100 days of daily results in the past, and you think the daily results are more or less independent of each other. Then you can take 5 random days in the past and see how that “artificial week” would look if it happened again. Of course, that’s only one artificial week, and you should do that a bunch of times to get an idea of the kind of weeks you may have coming up.

If you do this 10,000 times and then draw a histogram, you have a pretty good sense of what might happen, assuming of course that the 100 days of historical data is a good representation of what can happen on a daily basis.
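Here is a minimal sketch of that recipe in Python. A few things in it are assumptions for the sake of illustration rather than anything stated above: the 100 days of "history" are made-up lognormal numbers, the days are drawn with replacement (the usual bootstrap choice), a week's result is taken to be the sum of its 5 days, and the function names are hypothetical.

```python
import random


def resample_weeks(daily_results, days_per_week=5, n_weeks=10_000, seed=0):
    """Build artificial weeks by drawing past days at random, with replacement.

    Each artificial week is summarized as the sum of its daily results.
    """
    rng = random.Random(seed)
    return [
        sum(rng.choices(daily_results, k=days_per_week))
        for _ in range(n_weeks)
    ]


def text_histogram(values, n_bins=10, width=40):
    """Print a crude text histogram and return the bin counts."""
    lo, hi = min(values), max(values)
    step = (hi - lo) / n_bins or 1.0  # avoid a zero step if all values match
    counts = [0] * n_bins
    for v in values:
        # Clamp the top edge into the last bin.
        counts[min(int((v - lo) / step), n_bins - 1)] += 1
    peak = max(counts)
    for i, c in enumerate(counts):
        print(f"{lo + i * step:8.2f} | {'#' * (width * c // peak)}")
    return counts


# Made-up stand-in for 100 days of past results (skewed on purpose).
history_rng = random.Random(42)
daily_results = [history_rng.lognormvariate(0.0, 1.0) for _ in range(100)]

weekly_totals = resample_weeks(daily_results)
counts = text_histogram(weekly_totals)
```

With real data you would replace `daily_results` with your own 100 days. Everything downstream only ever sees values that actually occurred, which is exactly why the result is only as good as the history is representative.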

Here comes my pet peeve. In Mike Cohn’s blog post, he goes to the trouble of resampling to get a histogram, so a distribution of fake scenarios, but instead of really using that as a distribution, for the sake of computing a confidence interval, he only computes the average and standard deviation and then replaces the artificial distribution with a normal distribution with those parameters. From his blog:

Armed with 200 simulations of the ten sprints of the project (or ideally even more), we can now answer the question we started with, which is, How much can this team finish in ten sprints? Cells E17 and E18 of the spreadsheet show the average total work finished from the 200 simulations and the standard deviation around that work.

In this case the resampled average is 240 points (in ten sprints) with a standard deviation of 12. This means our single best guess (50/50) of how much the team can complete is 240 points. Knowing that 95% of the time the value will be within two standard deviations we know that there is a 95% chance of finishing between 240 +/- (2*12), which is 216 to 264 points.

What? This is kind of the whole point of resampling, that you could actually get a handle on non-normal distributions!

For example, let’s say in the above example, your daily numbers are skewed and fat-tailed, like a lognormal distribution or something, and say the weekly numbers are just the sum of 5 daily numbers. Then the weekly numbers will also be skewed and fat-tailed, although less so, and the best estimate of a 95% confidence interval would be to sort the scenarios and look at the 2.5th percentile scenario, the 97.5th percentile scenario and use those as endpoints of your interval.
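To make the contrast concrete, here is a rough Python sketch that computes both intervals from the same resampled scenarios: the percentile interval described above, and the "mean plus or minus two standard deviations" shortcut. The data is made up (lognormal daily numbers, weeks as sums of 5 days drawn with replacement), and the function names and the simple index-based percentile rule are my own assumptions, not anything from Mike Cohn's spreadsheet.

```python
import random
import statistics


def percentile_interval(scenarios, coverage=0.95):
    """Sort the scenarios and read off the two tail percentiles as endpoints."""
    ordered = sorted(scenarios)
    tail = (1.0 - coverage) / 2.0
    lo_idx = int(tail * (len(ordered) - 1))
    hi_idx = (len(ordered) - 1) - lo_idx  # same distance in from the top
    return ordered[lo_idx], ordered[hi_idx]


def normal_interval(scenarios, n_sd=2.0):
    """The shortcut: pretend the scenarios are normal, use mean +/- n_sd * sd."""
    mean = statistics.fmean(scenarios)
    sd = statistics.stdev(scenarios)
    return mean - n_sd * sd, mean + n_sd * sd


# Made-up skewed history: 100 lognormal daily results.
rng = random.Random(42)
daily = [rng.lognormvariate(0.0, 1.0) for _ in range(100)]

# 10,000 artificial weeks, each the sum of 5 days drawn with replacement.
weekly = [sum(rng.choices(daily, k=5)) for _ in range(10_000)]

pct_lo, pct_hi = percentile_interval(weekly)
nrm_lo, nrm_hi = normal_interval(weekly)

print(f"percentile interval: {pct_lo:.2f} to {pct_hi:.2f}")
print(f"normal shortcut:     {nrm_lo:.2f} to {nrm_hi:.2f}")
```

The normal shortcut is symmetric around the mean by construction, while the percentile interval is free to be lopsided whenever the resampled scenarios are. The percentile endpoints are also always values that actually showed up in the scenarios, which the shortcut does not guarantee.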

The weakness of resampling is the possibility that the data you have isn’t representative of the future. But the strength is that you get to work with an honest-to-goodness distribution and don’t need to revert to assuming things are normally distributed.

Meritocracy and horizon bias

I read this article yesterday about racism in Silicon Valley. It’s interesting, written by an interesting guy named Eric Ries, and it touches on stuff I’ve thought about like stereotype threat and the idea that diverse teams perform better than homogeneous ones.

In spite of liking the article pretty well, I take issue with two points.

In the beginning of the article Ries lays down some ground rules, and one of them is that “meritocracy is good.” Is it really good? Always? And to what limit? People are born with talent just as they’re born rich or poor, and what makes talent a better or more fair way of sorting people? Or are we just claiming it’s more efficient?

Actually I could go on but this blog post kind of says everything I wanted to say on the matter. As an aside, I’m kind of sick of the way people use the idea of “meritocracy” to overpay people who they justify as having superhuman qualifications (I’m looking at you, CEOs) or a ridiculous, massively scalable amount of luck (most super rich entrepreneurs).

Second, I’m going to coin a term here, but I’m sure someone else has already done so. Namely, I consider it horizon bias to think that wherever you are, whatever you do, is the coolest place in the world and that everyone else is just super jealous of you and wishes they had that job. So you don’t look beyond your horizon to see that there are other jobs that may be more attractive to people. The reason this comes up is the following paragraph:

What accounts for the decidedly non-diverse results in places like Silicon Valley? We have two competing theories. One is that deliberate racisms keeps people out. Another is that white men are simply the ones that show up, because of some combination of aptitude and effort (which it is depends on who you ask), and that admissions to, say Y Combinator, simply reflect the lack of diversity of the applicant pool, nothing more.

I’d like to offer a third option, namely that only white guys show up because that’s who thinks working in Silicon Valley is an attractive idea. I know it’s kind of like the second option above, but it’s not exactly. The qualification “because of some combination of aptitude and effort” is the difference.

Let’s say I’m considering moving to Silicon Valley to work. But all of my images of that place come from movies and my experiences with my actual friends in the dotcom bubble era who slept under their desks at night. Plus I know that the housing market out there is crazy and that the commute sucks. Finally, I’d picture myself working with lots of single, ambitious, and arrogant young men who believe in meritocracy (code for: use vaguely libertarian philosophical arguments to act ruthlessly). I can imagine that these facts keep plenty of non-white non-men away.

Next, going on to the point about horizon bias. People who already work in Silicon Valley already selected themselves as people who think it’s a great deal. And then they sit around wondering why it’s not a more diverse place, in spite of having everything awesomely meritocratic.

Going back to the article, Ries mentions this idea that diverse teams outperform homogeneous ones. I’d like to look at that in light of horizon bias and ask whether that’s the wrong way to look at it. In other words maybe it’s more a function of what the common goal is, which leads to a diverse team if the common goal is broadly attractive, than how the exact team was created. If goals are super attractive, attractive enough to draw diverse people, then maybe those goals deserve success more.

For example, one of the strengths of Occupy Wall Street has been the diversity of its membership. People of all ages, all backgrounds, and all races have been coming together to speak for the 99%. It’s of course fitting, since 99% does represent lots of people, but I’d like to point out that it is diverse because the cause resonates with so many people, which makes it successful.

Another example. I worked at the math department at M.I.T., which is famously not diverse. And I saw the “Truth Values” play recently which made me think about that experience some more. There’s lots of horizon bias in math, because there’s this assumption that everyone who was ever a math major should want to someday become a math professor (at M.I.T. no less). So it’s easy enough to wring your hands when you see that, although 45% of the undergrad math majors are women, and 40% of the grad students in math are women (I’m making these numbers up by the way), only 1% of the tenured faculty at the top places are women (again totally made up).

And of course there’s real discrimination involved (trust me), but there’s also the possibility that a bunch of women just never wanted to be a professor, they just wanted to get a Ph.D. for whatever reason. But the horizon bias at the top places assumes that everyone would want to become a professor.

On the one hand I’m just making things worse, because I’m pointing out that in addition to the real discrimination that takes place for those women who actually do want to become professors, there’s also this natural but invisible self-selection thing going on where women leave the professorship train at some point. Seems like I’ve made one problem into two.

On the other hand, we can address this horizon bias, if it exists. But instead of addressing it by blotting out the names of candidates on applications (a good idea by the way, and one I think I’ll start using), we would need to address it by looking at the actual company or department or culture and see why it’s less than attractive to people who aren’t already there. It’s a bigger and harder kind of change.

Data Science and Engineering at Columbia?

Yesterday Columbia announced a proposal to build an Institute for Data Sciences and Engineering a few blocks north of where I live. It’s part of the Bloomberg Administration’s call for proposals to add more engineering and entrepreneurship in New York City, and Bloomberg has said the city is willing to chip in up to 100 million dollars for a good plan. Columbia’s plan calls for having five centers within the institute:

- New Media Center (journalism, advertising, social media stuff)

- Smart Cities Center (urban green infrastructure including traffic pattern stuff)

- Health Analytics Center (mining electronic health records)

- Cybersecurity Center (keeping data secure and private)

- Financial Analytics Center (mining financial data)

A few comments. Currently the data involved in media 1) and finance 5) costs real money, although I guess Bloomberg can help Columbia get a good deal on Bloomberg data. On the other hand, urban traffic data 2) and health data 3) should be pretty accessible to academic researchers in New York.

There’s a reason that 1) and 5) cost money: they make money. The security center is kind of in the middle, since you can try to make any data secure, you don’t need to particularly pay for it, but on the other hand if you can find a good security system then people will pay for it.

On the other hand, even though it’s a great idea to understand urban infrastructure and health data, it’s not particularly profitable (not to say it doesn’t save a lot of money potentially, but it’s hard to monetize the concept of saving money, especially if it’s the government’s or the city’s money).

So the overall cost structure of the proposed Institute would probably work like this: incubator companies from 1) and 5) and maybe 4) fund the research going on in (themselves and) 2) and 3). This is actually a pretty good system, because we really do need some serious health analytics research on an enormous scale, and it needs to be done ethically.

Speaking of ethics, I hope they formalize and follow The Modeler’s Hippocratic Oath. In fact, if they end up building this institute, I hope they have a required ethics course for all incoming students (and maybe professors).

Hmmm… I’d better get my “data science curriculum” plan together fast.

Back!

I’m back from vacation, and the sweet smell of blog has been calling to me. Big time. I’m too tired from Long Island Expressway driving to make a real post now, but I have a few things to throw your way tonight:

First, I’m completely loving all of the wonderful comments I continue to receive from you, my wonderful readers. I’m particularly impressed with the accounting explanation on my recent post about the IASP and what “level 3” assets are. Here is a link to the awesome comments, which have really turned into a conversation between sometimes guest blogger FogOfWar and real-life accountant GMHurley who knows his shit. Very cool and educational.

Second, my friend and R programmer Daniel Krasner has finally buckled and started a blog of his very own, here. It’s a resource for data miners, R or python programmers, people working or wanting to work at start-ups, and thoughtful entrepreneurs. In his most recent post he considers how smart people have crappy ideas and how to focus on developing good ones.

Finally, over vacation I’ve been reading anarchist David Graeber‘s new book about debt, and readers, I think I’m in love. In a purely intellectual and/or spiritual way, of course, but man. That guy can really rile me up. I’ll write more about his book soon.

Why should you care about statistical modeling?

One of the major goals of this blog is to let people know how statistical modeling works. My plan is to explain as much as I can in simple plain English, with the least amount of confusion, and the maximum amount of elucidation at every possible level, so every reader can take at least a basic understanding away.

Why? What’s so important about you knowing about what nerds do?

Well, there are different answers. First, you may be interested in it from a purely cerebral perspective – you may yourself be a nerd or a potential nerd. Since it is interesting, and since there will be I suspect many more job openings coming soon that use this stuff, there’s nothing wrong with getting technical; it may come in handy.

But I would argue that even if it’s not intellectually stimulating for you, you should know at least the basics of this stuff, kind of like how we should all know how our government is run and how to conserve energy; kind of a modern civic duty, if you will.

Civic duty? Whaaa?

Here’s why. There’s an incredible amount of data out there, more than ever before, and certainly more than when I was growing up. I mean, sure, we always kept track of our GDP and the stock market, that’s old school data collection. And marketers and politicians have always experimented with different ads and campaigns and kept track of what does and what doesn’t work. That’s all data too. But the sheer volume of data that we are now collecting about people and behaviors is positively stunning. Just think of it as a huge and exponentially growing data vat.

And with that data comes data analysis. This is a young field. Even though I encourage every nerd out there to consider becoming a data scientist, I know that if a huge number of them agreed to it today, there wouldn’t be enough jobs out there for everyone. Even so, there will be, and very soon. Each CEO of each internet startup should be seriously considering hiring a data scientist, if they don’t have one already. The power in data mining is immense and it’s only growing. And as I said, the field is young but it’s growing in sophistication rapidly, for good and for evil.

And that gets me to the evil part, and with it the civic duty part.

I claim two things. First, that statistical modeling can and does get out of hand, which I define as when it starts controlling things in a way that is not intended or understood by the people who built the model (or who use the model, or whose lives are affected by the model). And second, that by staying informed about what models are, what they aren’t, what limits they have and what boundaries need to be enforced, we can, as a society, live in a place which is still data-intensive but reasonable.

To give evidence to my first claim, I point you to the credit crisis. In fact finance is a field which is not that different from others like politics and marketing, except that it is years ahead in terms of data analysis. It was and still is the most data-driven, sophisticated place where models rule and the people typically stand back passively and watch (and wait for the money to be transferred to their bank accounts). To be sure, it’s not the fault of the models. In fact I firmly believe that nobody in the mortgage industry, for example, really believed that the various tranches of the mortgage backed securities were in fact risk-free; they knew they were just getting rid of the risk with a hefty reward and they left it at that. And yet, the models were run, and their numbers were quoted, and people relied on them in an abstract way at the very least, and defended their AAA ratings because that’s what the models said. It was a very good example of models being misapplied in situations that weren’t intended or appropriate. The result, as we know, was and still is an economic breakdown when the underlying numbers were revealed to be far far different than the models had predicted.

Another example, which I plan to write more about, is the value-added models being used to evaluate school teachers. In some sense this example is actually more scary than the example of modeling in finance, in that in this case, we are actually talking about people being fired based on a model that nobody really understands. Lives are ruined and schools are closed based on the output of an opaque process which even the model’s creators do not really comprehend (I have seen a technical white paper of one of the currently used value-added models, and it’s my opinion that the writer did not really understand modeling or at best tried not to explain it if he did).

In summary, we are already seeing how statistical modeling can affect, and has affected, all of us. And it’s only going to get more omnipresent. Sometimes it’s actually really nice, like when I go to Pandora.com and learn about new bands besides Bright Eyes (is there really any band besides Bright Eyes?!). I’m not trying to stop cool types of modeling! I’m just saying, we wouldn’t let a model tell us what to name our kids, or when to have them. We just like models to suggest cool new songs we’d like.

Actually, it’s a fun thought experiment to imagine what kind of things will be modeled in the future. Will we have models for how much insurance you need to pay based on your DNA? Will there be modeling of how long you will live? How much joy you give to the people around you? Will we model your worth? Will other people model those things about you?

I’d like to take a pause just for a moment to mention a philosophical point about what models do. They make best guesses. They don’t know anything for sure. In finance, a successful model is a model that makes the right bet 51% of the time. In data science we want to find out who is twice as likely to click a button, but that subpopulation is still very unlikely to click! In other words, in terms of money, weak correlations and likelihoods pay off. But that doesn’t mean they should decide people’s fates.

My appeal is this: we need to educate ourselves on how the models around us work so we can spot one that’s a runaway model. We need to assert our right to have power over the models rather than the other way around. And to do that we need to understand how to create them and how to control them. And when we do, we should also demand that any model which does affect us needs to be explained to us in terms we can understand as educated people.

Short Post!

I’ve been told my posts are intimidatingly long, what with the twitter generation’s sound bite attention span. Normally I’d say, screw that! It’s because my ideas are so freaking nuanced they can’t be condensed to under a paragraph without losing their essence!

But today I acquiesce; here’s a short post containing at most one idea.

Namely, I’ve been getting pretty strong reactions online and offline regarding my post about whether an academic math job is a crappy job. I just want to set the record straight: I’m not even saying it’s a crappy job, I’m simply talking about someone else’s essay which describes it that way. But moreover, even if I were saying that, I would only be saying it’s crappy (which I’m not) compared to other jobs that very very smart mathy people could get. Obviously in the grand scheme of things it’s a very good job- safe working conditions, regular hours, well-respected, etc., and many people in this world have far crappier jobs and would love a job with those conditions. But relative to other jobs that math people could be getting, it may not be the best.

Many professors of math (you know who you are) have this weird narrow world view, that they feed their students, which goes something like, “if you want to be a success, you should be exactly like me (which is to say, an academic)”. So anyone who gets educated in a math department is apt to run into all these people who define success as getting tenure in an academic math department, and they just don’t know about or consider other kinds of gigs. It would be nice if there were a way to get a more balanced view of the pros and cons of all of the options.

Cookies

About three months ago I started working at an internet company which hosts advertising platforms. It’s a great place to work, with a bunch of fantastically optimistic, smart people who care about their quality of life. I’m on the tech team along with the team of developers which is led by this super smart, cool guy who looks like Keanu Reeves from The Matrix.

I’ve learned a few things about how the internet works and how information is collected about people who are surfing the web, and the bottom line is I clear my cookies now after every session of browsing. Now that I know the ways information travels, the risks of retaining cookies seem to outweigh the benefits. First I’ll explain how the system works and then I’ll try to make a case for why it’s creepy, and finally, why you may not care at all.

Basically you should think of yourself, when you surf the web, as analogous to someone on the subway coming home from Macy’s with those enormous red and white shopping bags. You are a walking advertisement for your past, your consumer tastes, and your style, not to mention your willingness to purchase. Moreover, beyond that, you are also carrying around information about your political beliefs, religious beliefs, and temperament. The longer you browse between cookie cleanings, the more precise a picture you’ve painted of yourself for the sites you visit and for third parties (explained below) who get their hands on your information.

Just to give you a flavor of what I’m talking about, you probably are already aware that when you go to a site like, say, Amazon, the site assigns you a cookie to recognize you as a guest; when you return a week later it knows you and says, “Hi, Catherine!”. That’s on the low end of creepy since you have an account with Amazon and it’s convenient for the site to not ask you who you are every time you visit.
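
Here’s a minimal sketch of that recognize-you-on-return mechanism, using Python’s standard `http.cookies` module. To be clear, this is a toy under my own assumptions and not how Amazon or anyone else actually does it: the cookie name `visitor_id`, the in-memory `KNOWN_VISITORS` table, and both function names are hypothetical.

```python
import uuid
from http.cookies import SimpleCookie

# Hypothetical server-side table mapping cookie IDs to account names.
KNOWN_VISITORS = {}


def issue_cookie(account_name):
    """First visit: make up a random ID, remember who it belongs to,
    and return the value the site would send in a Set-Cookie header."""
    visitor_id = uuid.uuid4().hex
    KNOWN_VISITORS[visitor_id] = account_name
    cookie = SimpleCookie()
    cookie["visitor_id"] = visitor_id
    cookie["visitor_id"]["max-age"] = 60 * 60 * 24 * 30  # keep for ~30 days
    return cookie["visitor_id"].OutputString()


def greet(cookie_header):
    """Return visit: read the Cookie header the browser sent back
    and look the ID up in the table."""
    cookie = SimpleCookie()
    cookie.load(cookie_header)
    morsel = cookie.get("visitor_id")
    name = KNOWN_VISITORS.get(morsel.value) if morsel else None
    return f"Hi, {name}!" if name else "Hello, guest."


if __name__ == "__main__":
    set_cookie = issue_cookie("Catherine")
    # The browser stores the cookie and later sends back only name=value.
    sent_back = set_cookie.split(";")[0]
    print(greet(sent_back))  # recognized visitor
    print(greet(""))         # cookies cleared: the site has no idea who you are
```

Notice that the cookie itself is just a random string. Everything the site knows about you lives on its side, keyed by that string, which is why clearing the cookie makes you a stranger again.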

However, you may not be aware that Amazon can also see and parse the cookies that other sites, like Google, place on your web signature. (Correction: a reader has pointed out to me that Google doesn’t let this happen, sorry. I was getting confused between the cookie and the “referring URL”, which tells a site where the user has come from when they first get to the site. That does contain Google search terms.) In other words Amazon, or any other site that knows how to look, can figure out what other sites’ label of you says. Some cookies are encrypted but not all of them, and I think the general rule is to not encrypt; after all, the people who have the tools to read the cookies all benefit from that information being easy to read. From the perspective of Google, moreover, this information is helping improve your user experience. It should be added that Google and many other companies give you the option of opting out of receiving cookies, but to do so you have to figure out it’s happening and then how to opt out (which isn’t hard).
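
As for the referring URL mentioned in the correction, here is a rough sketch of how a site could pull search terms out of it with Python’s standard `urllib.parse`. The example URL and the assumption that the terms sit in a `q` query parameter are mine, for illustration only; whether a given referring URL actually carries the search terms depends on the referring site and the browser.

```python
from urllib.parse import parse_qs, urlparse


def search_terms_from_referrer(referrer, param="q"):
    """Return the search words found in a referring URL's query string.

    ASSUMES the terms are in a single query parameter (default "q");
    returns an empty list if that parameter is absent.
    """
    query = urlparse(referrer).query
    values = parse_qs(query).get(param, [])
    return values[0].split() if values else []


if __name__ == "__main__":
    # Hypothetical referring URL a site might receive with a request.
    referrer = "https://www.google.com/search?q=rain+showerhead"
    print(search_terms_from_referrer(referrer))
    print(search_terms_from_referrer("https://example.com/"))
```

No cookie is involved here at all: the site learns what you searched for just from where you came from.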

One last layer of cookie collection is this: there are other companies which lurk on websites (like Amazon, although I’m not an expert on exactly when and where this happens) which can also see your cookies and tag you with additional cookies, or even change your existing cookies (this is considered rude but not prevented). This is where, for me, the creep factor gets going. Those third parties certainly have less riding on their brand, since of course you don’t even see them, so they have less motivation to act honorably with the information they collect about you. For the most part, though, they are just looking to see what kind of advertisement you may be weak for and, once they figure it out, they show you exactly that model of showerhead that you searched for three weeks ago but decided was too expensive to buy. If you want to stop seeing that freaking showerhead popping up everywhere, clear thy cookies.

Here’s why I don’t like this; it’s not about the ubiquitous showerhead, which is just annoying. Think about rich people and how they experience their lives. I touched on this in a previous post about working at D.E. Shaw, but to summarize, rich people think they are always right, and that’s a pretty universal rule, which is to say anyone who becomes rich will probably succumb to that pretty quickly. Why, though? My guess is that everyone around them is aware of their money and is always trying to make them happy in the hope that they at some point could have some of that money. So they effectively live in a cocoon of rightness, which after a while seems perfectly logical and normal.

How that concept manifests itself in this conversation about cookies is that, in a small but meaningful way, that’s exactly what happens to the user when he or she is browsing the web with lots of cookies. Every time Joe encounters a site, the site and all third-party advertisers have the ability to see that Joe is a Republican gun-owner, and the ads shown to Joe will be absolutely in line with that part of the world. Similarly the cookies could expose Dan as a liberal vegetarian and he sees ads that never shake his foundations. It’s like we are funneled into a smaller and smaller world and we see less and less that could challenge our assumptions. This is an isolating thought, and it’s really happening.

At the same time, people sometimes want to be coddled, and I’m one of those people. Sometimes I enjoy it when my favorite yarn store advertises absolutely gorgeous silk-cashmere blends at me, or shows me a rant against greedy bankers, and no I’d rather not replace them with Viagra ads. So it’s also a question of how much this matters. For me it matters, but I also like New York City because it is dirty and gritty and all these people from all over the world live there and sweat on each other on the subway and it makes me feel like part of a larger community. I like to mix it up and have it mixed up.

I’d also like to mention another kind of reason you may want to clear your cookies: you get better deals. A general rule of internet advertising is that you don’t need to show good deals to loyalists. So if you don’t have cookies proving you have an account on Netflix, you may get an advertisement offering you three free months of membership. Or if you want to get more free articles on the New York Times website, clear your cookies and the site will have no idea who you are. There are many such examples.

Lastly, I’d like to point out that you probably don’t need to worry about this. After all, many browsers will clear your cookies but also clear your usernames and passwords, and you may never be able to get some of those back. And maybe you don’t mind being coddled while online. Maybe it’s the one place where you get to feel understood. Why question that?