Brainstorming with narcissists

In the most recent New Yorker, there’s an article which basically says that, although “no-judgment” brainstorming sounds great, it doesn’t actually produce better ideas, and that in fact you need to be able to criticize each other’s half-baked plans to get real innovation.

The idea that a bunch of people who have been instructed that no idea is too banal to speak out loud will eventually move beyond the obvious into creative territory is certainly attractive, mostly because it’s so hopeful: in this world everyone can participate in innovation. And in fact it may be true that everyone can be creative, but I agree that it won’t generally happen in the standard brainstorming meeting.

As usual I have lots of opinions about this, and lots of experience, so I’ll just go ahead and say what I think.

When does working in a group work?

- When people are sufficiently technical for the discussion, although not completely informed: it’s helpful to have someone with great technical skills or domain knowledge but who hasn’t thought through the issue, so they can question all of the assumptions as they come up to speed. In my experience this is when some of the best ideas happen.

- When people are more interested in getting to the answer than in impressing the people around them. This sounds too obvious to mention, but as we will see below it’s actually almost impossible to achieve in a largish group at an ambitious or successful company.

- When people know the people around them will be able to follow somewhat vague arguments and help them make those arguments precise.

- Alternatively when people know that others will gladly find flaws in ideas that are essentially bullshit. When everyone has agreed to call each other’s bullshit in a supportive way, and has taken on that role aggressively, you have a good dynamic.

Why does the “no-judgment” rule fail?

- When you aren’t being critical, you never get to the reasons why things are obviously a bad idea, so you never get to a new idea. That’s the critical part missing from no-judgment brainstorming: the friction supplied by the other people who call you on a bad idea. Without it, it’s just a bunch of people talking in a room, distracting you from thinking well by the loudness of their voices.

- When you have a bunch of successful people who have never failed, nobody actually lowers their guard. This idea of the super-achieving educational 1% is described in this recent New York Times article. I’ve seen this phenomenon close-up many times. People who are academic superstars absolutely hate taking risks and hate being wrong: life is a competition and they need to win every time. (update: there are plenty of people who were really freaking good at school that aren’t like this; when I say “academic superstars” I want to incorporate the idea that these people identify their success in school and/or other arenas that have metrics of success, like contests or high-quality brands (Harvard, McKinsey, etc.), as part of their identity.)

- Finally, the setup of the brainstorm is necessarily shallow and doesn’t require follow-through. In my experience only germs of good ideas can possibly occur in meetings. Lots of good germs have been left to rot on whiteboards. It would be wicked useful to try to rank ideas at the end of a meeting (by a show of hands, for example), but the “no-judgment” rule also prevents this.

Asian educational systems often get criticized for being so non-individualistic that they repress originality. True. But a system where the individual is promoted as special in every way also represses originality, because narcissists brook no argument.

This recent “Room for Debate” discussion in the New York Times brings up this issue beautifully. The idea is that schools are more and more being seen as companies, where the students and parents (especially the parents) are seen as the customer. The customer is always right, of course, and the schools are expected to tailor themselves to please everyone. It’s the opposite of learning how to disagree, learning how to be a member of society, and learning how to be wrong.

Interestingly, some of the best experiences I’ve had recently in the successful brainstorming arena have come from the #OWS Alternative Banking group I help organize. It’s made up of a bunch of citizens, many of whom are experts, but not all, and many of whom are experts in different corners of finance. The fact that people come to a meeting to talk policy and finance on Sunday afternoons means they are obviously interested, and the fact that no two people seem to agree on anything completely makes for feisty and productive debates.

Mortgage settlement talks

If you haven’t been following the drama of the possible mortgage settlement between the big banks that committed large-scale mortgage fraud and the state Attorneys General, then get yourself over to Naked Capitalism right away. What could end up being the biggest boondoggle coming out of the credit crisis is unfolding before us.

The very brief background story is this. Banks made huge bets on the housing market through securitized products (mortgage backed securities which were then often repackaged or rerepackaged). The underlying loans were often given to people with very hopeful expectations about the future of the housing market, like that it would only go up. In the meantime, the banks did very bad jobs of keeping track of the paperwork. In addition to that, many of the loans were actually fraudulent and a very large number of them were ridiculous, with resetting interest rates that were clearly unaffordable.

Fast forward to post-credit crisis, when people were having trouble with their monthly bills. The banks made up a bunch of paperwork that they’d lost or that had never been made in the first place (this is called “robo-signing”). The judges at foreclosures got increasingly skeptical of the shoddy paperwork and started balking (to be fair, not all of them).

Who’s on the hook for the mistakes the banks made? The home owners, obviously, and also the investors in the securitized products, but most critically the taxpayer, through Fannie and Freddie, who are insuring these ridiculous mortgages.

So what we’ve got now is an effort by the big banks to come to a “settlement” with the states to pay a small fee (small in the context of how much is at stake) to get out of all of this mess, including all future possible findings of fraud or misdeeds. The settlement terms have been so outrageously bank-friendly that a bunch of state Attorneys General have been pushing back, with the help of prodding from the people.

Meanwhile, the Obama administration would love nothing more than to be able to claim they cleaned up the mess and made the banks pay. But that story seriously depends on people not really understanding the scale of the problem and the meaning of the fine print of the proposed settlement.

If you want to learn more recent details about this potential tragedy, this post from Naked Capitalism got me so entranced that I actually missed my subway stop on the way to work and had to walk uptown from Canal. From the post:

The story did not outline terms, but previous leaks have indicated that the bulk of the supposed settlement would come not in actual monies paid by the banks (the cash portion has been rumored at under $5 billion) but in credits given for mortgage modifications for principal modifications. There are numerous reasons why that stinks. The biggest is that servicers will be able to count modifying first mortgages that were securitized toward the total. Since one of the cardinal rules of finance is to use other people’s money rather than your own, this provision virtually guarantees that investor-owned mortgages will be the ones to be restructured. Why is this a bad idea? The banks are NOT required to write down the second mortgages that they have on their books. This reverses the contractual hierarchy that junior lienholders take losses before senior lenders. So this deal amounts to a transfer from pension funds and other fixed income investors to the banks, at the Administration’s instigation.

Another reason the modification provision is poorly structured is that the banks are given a dollar target to hit. That means they will focus on modifying the biggest mortgages. So help will go to a comparatively small number of grossly overhoused borrowers, no doubt reinforcing the “profligate borrower” meme.

But those criticisms assume two other things: that the program is actually implemented. The experience with past consent decrees in the mortgage space is that the servicers get a legal get out of jail free card, a release, and do not hold up their end of the deal. Similarly, we’ve seen bank executives swear in front of Congress in late 2010 that they had stopped robosigning, which turned out to be a brazen lie. So here, odds favor that servicers will pretty much do nothing except perhaps be given credit for mortgage modifications they would have made anyhow.

Interestingly, Romney has gone on record siding with the homeowners. The following is a Romney quote:

The banks are scared to death, of course, because they think they’re going to go out of business… They’re afraid that if they write all these loans off, they’re going to go broke. And so they’re feeling the same thing you’re feeling. They just want to pretend all of this is going to get paid someday so they don’t have to write it off and potentially go out of business themselves.

This is cascading throughout our system and in some respects government is trying to just hold things in place, hoping things get better… My own view is you recognize the distress, you take the loss and let people reset. Let people start over again, let the banks start over again. Those that are prudent will be able to restart, those that aren’t will go out of business. This effort to try and exact the burden of their mistakes on homeowners and commercial property owners, I think, is a mistake.

“This effort” must refer to the mortgage settlement. I’m with Romney on this one.

In 50 years, when we look back at this period of time, we may be able to describe it like this:

The financial system got high on profits from unreasonably priced homes and mortgages, underestimating risk, and securitization fees. When the truth came out they paid a pittance to escape their mistakes, transferring the cost to homeowners and the taxpayer and leaving the housing market utterly inflated and confused. The entire charade lasted decades and was in the name of not acknowledging what everyone already knew, namely that the banks were effectively insolvent.

Occupy the World Economic Forum

Seasonally adjusted news

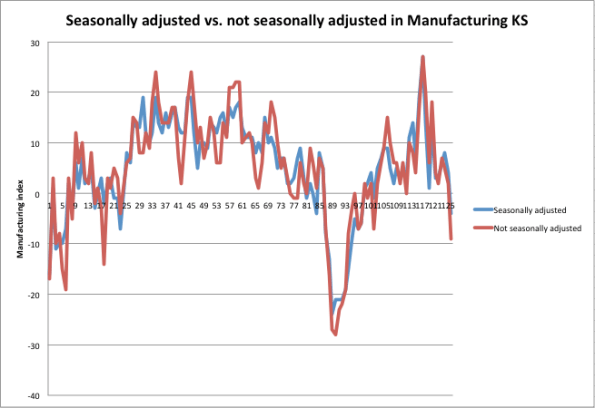

In one of my first posts ever, I talked about seasonal adjustment models and how they can work. I was sick of seeing that phrase go unexplained in the news all the time.

If I had been a bit more thoughtful, maybe I could have also mentioned various ways seasonal adjustment models could screw things up, or more precisely be screwed up by weird events. Luckily, a spokesperson from Goldman Sachs recently did that for me, and it was mentioned in this Bloomberg article. Those GS guys are smart, and would only mention this to Bloomberg if they thought everyone on the street knew it anyway, but I still appreciate them strewing their crumbs (it occurs to me that they might be trading on people’s overreactions to inflated good news right now).

Recall my frustration with seasonal adjustment models: they typically don’t tell you how many years of data they use, and how much they weight each year. But it’s safe to say that for statistics like unemployment and manufacturing, multiple years are used and more recent years are at least as important as older years. So events in the market that occurred in 2008 are still powerfully present in the seasonal adjustment model.

That means that, when the model is deciding what to expect, it looks at the past few years and kind of averages them. One of those years was 2008 when all hell broke loose, Lehman fell, TARP came into being, and Fannie, Freddie, and AIG were seized by the government. Lots of people lost their jobs and the housing and building industries went into freefall.

So the model thinks that’s a big deal, and compares what happened this year to that (and to the other years in the model, but that year dominates since it was such an extreme event), and decides we’re looking good. Here’s a picture from the Kansas Fed of the raw vs. seasonally adjusted manufacturing index results, from July 2001 to December 2011:

As one of my readers has already commented (darn, you guys are fast!), this just refers to manufacturing near Kansas, but the point I’m trying to make is still valid, namely that the seasonal adjustments clearly pale in comparison to the actual catastrophic event in 2008. However, that event still informs the seasonal adjustment model afterwards.

Because of the “golden rule” I mentioned in my post, namely that seasonal adjustment should, on average (or at least in expectation), add no bias to the actual numbers, if things look better than they should in the second half of the year, that means they will look worse than they should in the first half of the year.
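To make that concrete, here is a toy sketch in Python. It assumes a very simple additive model, where each month's seasonal factor is the average, over the lookback years, of that month's deviation from its own year's mean. That is an assumption for illustration only; the real models are more elaborate and, as I complained above, usually don't publish their lookback or weights. All the numbers are made up.

```python
from statistics import mean

def seasonal_factors(years):
    """Average, per month, of each year's deviation from its own yearly mean.

    Toy additive model (an assumption, not any agency's actual method).
    Each year's deviations sum to zero, so the factors do too: the "golden rule".
    """
    deviations = [[v - mean(year) for v in year] for year in years]
    return [mean([d[m] for d in deviations]) for m in range(12)]

def adjust(year, factors):
    """Seasonally adjusted series = raw value minus the expected seasonal effect."""
    return [v - f for v, f in zip(year, factors)]

# Hypothetical index values: two uneventful years and one year with a
# second-half collapse standing in for 2008.
flat_year = [100.0] * 12
crash_year = [100.0] * 6 + [70.0] * 6

factors = seasonal_factors([flat_year, crash_year, flat_year])
adjusted = adjust(flat_year, factors)  # a perfectly ordinary new year

print("factors: ", factors)
print("adjusted:", adjusted)
```

In this toy setup the crash teaches the model to expect weak second halves, so an ordinary year gets marked up in July through December and marked down by the same amount in January through June.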

So be prepared for some crappy statistics coming out soon!

I still wish they’d just show us the graphs for the past 10 years and let us decide whether it’s good news.

Data Scientist degree programs

Prediction: in the next 10 years we will see the majority of major universities start masters degree programs, or Ph.D. programs, in data science, data analytics, business analytics, or the like. They will exist somewhere in the intersection of the fields of statistics, operations research, computer science, and business. They will teach students how to use machine learning algorithms and various statistical methods, and how to design expert systems. Then they will send these newly minted data scientists out to work at McKinsey, Google, Yahoo, and possibly Data Without Borders.

The questions yet unanswered:

- Relevance: will they also teach the underlying theory well enough so that the students will know when the techniques are applicable?

- Skepticism: will they in general teach enough about robustness in order for the emerging data scientists to be sufficiently skeptical of the resulting models?

- Ethics: will they incorporate understanding the impact of the models so that students will think to understand the ethical implications of modeling? Will they have a well-developed notion of the Modeler’s Hippocratic Oath by then?

- Open modeling: will they focus narrowly on making businesses more efficient or will they focus on developing platforms which are open to the public and allow people more views into the models, especially when the models in question affect that public?

Open questions. And important ones.

Here’s one that’s already been started at the University of North Carolina, Charlotte.

Bad statistics debunked: serial killers and cervixes

If you saw this story going around about how statisticians can predict the activity of serial killers, be sure to read this post by Cosma Shalizi where he brutally tears down the underlying methodology. My favorite part:

Since Simkin and Roychowdhury’s model produces a power law, and these data, whatever else one might say about them, are not power-law distributed, I will refrain from discussing all the ways in which it is a bad model. I will re-iterate that it is an idiotic paper — which is different from saying that Simkin and Roychowdhury are idiots; they are not and have done interesting work on, e.g., estimating how often references are copied from bibliographies without being read by tracking citation errors. But the idiocy in this paper goes beyond statistical incompetence. The model used here was originally proposed for the time intervals between epileptic fits. The authors realize that

[i]t may seem unreasonable to use the same model to describe an epileptic and a serial killer. However, Lombroso [5] long ago pointed out a link between epilepsy and criminality.

That would be the 19th-century pseudo-scientist Cesare Lombroso, who also thought he could identify criminals from the shape of their skulls; for “pointed out”, read “made up”. Like I said: idiocy.

Next, if you’re anything like me, you’ve had way too many experiences giving birth without pain control, even after begging continuously and profusely for some, because of some poorly derived statistical criterion that should never have been applied to anyone. This article debunks that among others related to babies and childbirth.

In particular, it suggests what I always suspected, namely that people misunderstand the effect of using epidurals because they don’t control for the fact that in the case of a long difficult birth you are more likely to get everything, which brings down the average outcome among people with epidurals but doesn’t at all prove causation.
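Here is a small sketch of that confounding effect, with counts I made up purely for illustration (they are not from the article). In this hypothetical population the epidural has no effect at all on complications within easy births or within hard births, but hard births are much more likely to involve an epidural.

```python
# (difficulty, epidural) -> (births, complications); every count is hypothetical
counts = {
    ("easy", True): (200, 10),
    ("easy", False): (800, 40),
    ("hard", True): (800, 240),
    ("hard", False): (200, 60),
}

def complication_rate(epidural, difficulty=None):
    """Complication rate for a group, optionally restricted to one difficulty level."""
    rows = [
        v for (d, e), v in counts.items()
        if e == epidural and (difficulty is None or d == difficulty)
    ]
    births = sum(b for b, _ in rows)
    complications = sum(c for _, c in rows)
    return complications / births

# Pooled comparison: epidurals look harmful.
print("pooled:", complication_rate(True), "vs", complication_rate(False))

# Controlling for difficulty: no difference at all.
for level in ("easy", "hard"):
    print(level, complication_rate(True, level), "vs", complication_rate(False, level))
```

The pooled numbers make epidurals look bad only because the epidural group is mostly made up of difficult births. Once you compare like with like, the difference disappears.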

Pregnant ladies, I suggest you print out this article and bring it with you to your OB appointments.

Apologies to Adam Smith

Not a lot of time to write this morning what with the sledding schedule, but I thought you might like this:

How’s it going with the Volcker Rule?

Glad you asked.

Recall that Occupy the SEC is currently drafting a letter of public comment on the Volcker Rule for the SEC (for background on the Volcker Rule itself, see my previous post). I was invited to join them on a call with the SEC last week and I will talk further about that below, but first I want to give you more recent news.

Yves Smith at Naked Capitalism wrote this post a couple of days ago talking about a House Financial Services Committee meeting, which happened Wednesday. Specifically, the House Financial Services Committee was considering a study done by Oliver Wyman which warned of reduced liquidity if the Volcker Rule goes into effect. Just to be clear, Oliver Wyman was paid by a collection of financial institutions (SIFMA) who would suffer under the Volcker Rule to study whether the Volcker Rule is a good idea. In her post, Yves discussed the meeting as well as Occupy the SEC’s letter to that Committee, which refuted the findings of Oliver Wyman’s study.

Simon Johnson, who was somehow on the panel even though it was more or less stuffed with people who wouldn’t argue, had some things to say in his New York Times column published yesterday about how much sense it makes to listen to people who are paid to write studies. He also made lots of good arguments against the content of the study, namely about the assumptions going into it and how reasonable they are. From Simon’s article:

Specifically, the study assumes that every dollar disallowed in pure proprietary trading by banks will necessarily disappear from the market. But if money can still be made (without subsidies), the same trading should continue in another form. For example, the bank could spin off the trading activity and associated capital at a fair market price.

Alternatively, the relevant trader – with valuable skills and experience – could raise outside capital and continue doing an equivalent version of his or her job. These traders would, of course, bear more of their own downside risks.

If it turns out that the previous form or extent of trading existed only because of the implicit government subsidies, then we should not mourn its end.

The Oliver Wyman study further assumes that the sensitivity of bond spreads to liquidity will be as it was in the depth of the financial crisis, 2007-9. This is ironic, given that the financial crisis severely disrupted liquidity and credit availability more generally – in fact this is a major implication of the Dick-Nielsen, Feldhutter and Lando paper.

If Oliver Wyman had used instead the pre-crisis period estimates from the authors, covering the period 2004-7, even giving their own methods the implied effects would be one-fifth to one-twentieth of the size (this adjustment is based on my discussions with Professor Feldhutter).

CSPAN taped the meeting, which was pretty long, but I’d suggest you watch minutes 50 through 57, where Congressman Keith Ellison took some of the panel to task for being, or acting, super dumb.

For whatever reason, Occupy the SEC wasn’t invited to the panel. You can read their letter that argues against Wyman’s study, which is on Yves’s post, or you can read this comment that one of the members of Occupy the SEC posted on Johnson’s NYTimes piece (“OW” refers to Oliver Wyman, the author of the paid study):

Your testimony at the hearings yesterday was a refreshing counterpoint to the other members of the panel.

On top of the flaws in the OW analysis you covered in the article, there was another misleading point that the OW report purported to prove.

The study focused on liquidity for corporate bonds, which SIFMA/OW characterized as ‘financing american businesses’ . But a quick review of the outstanding corporate bonds in the study reveals that the lions share of corporate bonds are CMOs and ABS. Additionally, the study reports that the majority of the holdings of corporate bonds are in the hands of the finance industry.

As a result the loss of liquidity anticipated by the SIFMA folks will mostly impact them, not the pensioners and soldiers (and their Congressmen) the bankers were trying to scare with the OW loss estimates.

If the banks are forced to withdraw as market makers for this debt, replacement market makers won’t enter until these bonds trade at much lower levels. These losses are currently stranded (and disguised) in the banking system, and by extension are inflating the value of the funds invested in these bonds.

It’s critical that the market making rules are clarified to ensure that liquidity provision for these instruments is driven out of the protected banks and into a transparent market where the mispricing will be corrected and the losses will be properly recognized.

So just to summarize, the Congressional committee listened to the results of a paid study talking about how bad the Volcker Rule would be for the market, when in fact it would be good for the market to be uninsured and realistic.

I’m not a huge fan of the Volcker Rule as it is written, but these are really terrible reasons to argue against it. To my mind, the real problem is that, as written, the Volcker Rule is too easy to game and has too many exceptions written into it.

Going back to the call with the SEC (and with Occupy the SEC). I haven’t kept abreast of the details of the Volcker Rule like these guys (they are super relentless), but I did have some questions about the risk part. Namely, were they going to end up referring to an already existing risk regulatory scheme like Basel or Basel II, or were they creating something separate altogether? They were creating something separate. They mentioned that they weren’t interested in risk per se but only to the extent that wildly fluctuating risk numbers expose proprietary trading, which is the big no-no under the Volcker Rule.

But here’s the thing, I explained: the risk numbers you are asking for are so vague that it’s super easy, if I’m a bank, to game this to make my risk numbers look calm. You don’t specify the lookback period, you don’t specify the kind of Value-at-Risk, and you don’t ask to see my risk model worked out on a benchmark portfolio, so you really don’t know what it’s saying. Their response was: oh, yeah, um, if you could give us better wording for that section that would be great.
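To show why the lookback period matters, here is a minimal sketch. It assumes plain historical-simulation VaR (just one of the kinds of Value-at-Risk a bank could pick) and a made-up daily P&L series with a turbulent stretch followed by a calm one. Nothing here comes from the rule text or from any bank's actual model.

```python
def historical_var(pnl, lookback, confidence=0.95):
    """Historical-simulation VaR over the last `lookback` days.

    Returns the loss that was exceeded on roughly (1 - confidence) of the
    days in the window, reported as a positive number.
    """
    window = sorted(pnl[-lookback:])            # worst days first
    index = int((1 - confidence) * len(window))
    return -window[index]

# Hypothetical daily P&L: 200 turbulent days, then 50 quiet ones.
turbulent = [(-1) ** i * (i % 10 + 1) * 1.0 for i in range(200)]
calm = [(-1) ** i * 0.5 for i in range(50)]
pnl = turbulent + calm

var_short = historical_var(pnl, lookback=50)    # window sees only the calm stretch
var_long = historical_var(pnl, lookback=250)    # window still remembers the turbulence

print("50-day lookback VaR: ", var_short)
print("250-day lookback VaR:", var_long)
```

Same portfolio, same day, same formula, and the reported risk differs by a large factor depending only on a parameter the regulator didn't pin down. Running every bank's model on a common benchmark portfolio is one way to make the numbers comparable.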

So to re-summarize, we have “experts”, being paid by the banks, who explain to Congress why we shouldn’t let the Volcker Rule go through, and in the meantime we’ve assigned the SEC the job of writing that rule even though they don’t know how to game a risk model (there’s a good example here of JP Morgan doing just that last week).

One last issue: when we asked about why repos had been exempted sometime in between the writing of the statute and the design of the implementation, the SEC people just told us we’d “have to ask the Fed that”.

Followup: Change academic publishing

I really appreciate the amazing and immediate feedback I got from my post yesterday about changing the system of academic publishing. Let me gather the things I’ve learned or thought about in response:

First, I learned that mathoverflow is competitive and you “do well” on it if you’re quick and clever. Actually I didn’t know this, and since it is online I naively assumed people read it when they had time and so the answers to questions kind of drifted in over time. I kind of hate competitive math, and yes I wouldn’t like that to be the single metric deciding my tenure or job.

Next, ArXiv already existed when I left math, but I don’t think it’s all that good a “solution” either, because it’s treated mostly as a warehouse for papers, and there is not much feedback (although I’ve heard there’s way more in physics). Correct me if I’m wrong here.

I don’t want to sound like a pessimist, because the above two things really do function and add a lot to the community. I’m just pointing out that they aren’t perfect.

We, the mathematics community, should formally set out to be creative and thoughtful about different ways to collaborate and to document collaboration, and to score it for depth as well as helpfulness, etc. Let’s keep inventing stuff until we have a system which is respected and useful. The reason people may not be putting time into this right now is that they won’t be rewarded for it, but I say do it anyway and worry about that later. Let’s start brainstorming about what that system would look like.

That gets to another crucial point, which is that the people we have to convince are really not each other so much as deans and provosts of universities who are super conservative and want to be absolutely sure that the people they award tenure to are contributing citizens and will be for 40 years. We need to convince them to reconsider their definitions of “mathematical contributions”. How are we going to do this?

My first guess is that deans and provosts would listen to “experts in the field” quite a bit. This is good news, because it means that in some sense we just need to wait until the experts in the field come from the generation of people who invented (or at least appreciate) these tools. There are probably other issues though, which I don’t know about. I’d love to get comments from a dean or a provost on this one.

Change academic publishing

My last number theory paper just came out. I received it last week, so that makes it about 5 years since I submitted it – I know this since I haven’t even done number theory for 5 years. Actually I had already submitted it to a journal, and they took more than a year to reject it, so it’s been at least 6 years since I finished writing it.

One of the reasons I left academics was the painfully slow pace of being published, plus the feeling I got that, even when my papers did come out, nobody read them. I felt that way because I never read any papers, or at least I rarely read the new papers out of the new journals. I did read some older papers, ones that were recommended to me.

In other words I’m a pretty impatient person and the pace was killing me.

And I went to plenty of talks, but that process is of course very selective, and I would mostly be at a conference, or inside my own department. It led me to feel like I was mathematically isolated in my field as well as being incredibly impatient.

Plus, when you find yourself building a reputation more through giving talks and face-to-face interactions, you realize that much of that reputation is based on how you look and how well you give talks, and it stops seeming like mathematics is a just society, where everyone is judged based on their theorems. In fact it doesn’t feel like that at all.

I was really happy to see this article in the New York Times yesterday about how scientists are starting to collaborate online. This has got to be the future as far as I’m concerned. For example, the article mentions mathoverflow.net, which is a super awesome site where mathematicians pose and answer questions, and get brownie points if their answers are consistently good.

It’s funny how nowadays, to get tenure, you need to have a long list of publications, but brownie points for answering lots of questions on a community website for mathematicians don’t buy you anything. It’s totally ass backwards in terms of what we should actually be encouraging for a young mathematician. We should be hoping that young person is engaged in doing and explaining mathematics clearly, for its own sake. I can’t think of a better way of judging such a thing than mathoverflow.net points.

Maybe we also need to see that they can do original work. Why does it have to go through a 5 year process and be printed on paper? Why can’t we do it online and have other people read and rate (and correct) current research?

I know that people would respond that this would make lots of crappy papers seem on equal par with good, well thought-out papers, but I disagree. I think, first of all, that crap would be identified and buried, and that people would be more willing to referee online, since on the one hand it wouldn’t be resented, free work for publishers, and on the other hand, people would get more immediate and direct feedback and that would be cool and it would inspire people to work at it.

In other words, we can’t compare it to an ideal world where everyone’s papers are perfectly judged (not happening now) and where the good and important papers are widely read. We need to compare it to what we have now, which is highly dysfunctional.

That raises another huge question, which is why papers at all? Why not just contributions to projects that can be done online? For example my husband has an online open source project called the stacks project, but he feels like he can’t really urge anyone, especially if they’re young, to help out on it, because any work they do wouldn’t be recognized by their department. This is in spite of the fact that there’s already a system in place to describe who did what and who contributed what, and there are logs for corrections etc.; in other words, there’s a perfectly good way of seeing how much a given mathematician contributed to the project.

I honestly don’t see why we can’t, as a culture, acclimate to the computer age and start awarding tenure, or jobs, to people who have made major contributions to mathematics, rather than narrowly fulfilled some publisher’s fantasy. I also wonder if, when it finally happens, it will be a more enticing job prospect for smart but impatient people like myself who thrive on feedback. Probably so.

See also the follow-up post to this one.

Happy Birthday, Betty White!

Betty White turns 90 today (although Obama doesn’t seem to completely believe it).

I love her, and want to be just like her when I grow up. If you don’t know why, check her out describing Sarah Palin as a crazy bitch a couple of years ago, a brilliant combination of humor, politics, and sexual freedom.

Quantitative analysis of regulatory capture

I wrote earlier about how the movie “Inside Job” brought to light the issue of conflict of interest for business school professors, and I discussed Columbia and Harvard Business Schools. As some people pointed out, I forgot to mention economists.

Luckily the editors at Bloomberg took up that cause for me. Yesterday they published an opinion piece saying that disclosure won’t even be sufficient (but that it is absolutely necessary). From the article:

Disclosure, though, won’t eliminate the actual conflicts. Even the best-intentioned economists — and particularly those in the area of finance — face a litany of influences pushing them toward a rosier view of the industries they study. In a yet-to-be-published paper, Luigi Zingales, a finance professor at the University of Chicago’s Booth School of Business, likens the pressure to regulatory capture. A pro-business attitude, he notes, can increase an economist’s chances of landing lucrative consulting, expert-witness and research contracts, and can facilitate publication in academic journals whose editors are themselves captured. (Zingales is a contributor to Bloomberg View’s Business Class blog and has accepted money for speeches to Dimensional Fund Advisors, a hedge fund, and Banca Intermobiliare, an Italian private bank, among others.)

As a small test, Zingales looked at the 150 most-downloaded papers that had been done on executive pay — a subject he reasoned could legitimately be argued either way. He found that papers supporting high pay for top executives were 55 percent more likely to be published in prestigious economic journals, suggesting that the editors, also academic economists, have a bias.

I think this is an important study, and I look forward to reading it. Beyond the question of economists and disclosure, it points to a new subfield of quantitative analysis: the quantitative analysis of regulatory capture. I hope it is being done well: in other words, it could be true that high pay for top executives is really a better idea, and that’s why those studies are being published in prestigious economic journals. There has to be some way to separate the techniques from the politics of the results. It’s certainly an interesting question.

If we quants do this right, and especially if we make our models open source, then we potentially have the ability to measure the extent to which, when politicians and judges and the public get “expert” opinions, the information they receive is coming directly from the financial lobbyists (or other kinds of lobbyists) who are paid to think a certain way. It’s a possible first step towards removing some of the influence of money from decisions such as how much regulation or capital requirements we should impose on banks, for example.

Puzzle blog

Do you love puzzles like Sudoku? Check out Melon’s Puzzles. It’s a blog with lots of puzzles, including something new to me called Slitherlink (and lots of variations). Only go there if you have lots of time. It also gives you an insider’s view into puzzle contests. Only consider that if you have asstons of time.

Sunday Links

What do an upscale nightclub for Wall Streeters and the People’s Library from #Occupy Wall Street have in common? Turns out, nothing.

Someone thinks we can cure accounting shenanigans by rotating accounting firms. I’m not convinced.

I like this story from Matt Taibbi about one of the biggest assholes in the world.

For whatever reason I can’t get enough of this picture from a recent car show:

High Frequency Trading and Transaction Taxes

If you look at this list of the 20 biggest donors of the 2012 election, and you scroll down to number 20, you’ll find out stuff about Robert Mercer:

Robert Mercer is co-CEO of the $15 billion hedge fund company Renaissance Technologies. In 2009, according to the New York Daily News, he accused a builder of overcharging him $2 million for a construction project in his mansion—a “museum-quality” model train display “about half the size of a basketball court.” During the 2010 midterms, Mercer was outed as the funder behind $300,000 worth of attack ads targeting Rep. Peter DeFazio (D-Ore.). In the 2012 election cycle, he and his wife Diana have given $150,000 to the Republican National Lawyers Association and $100,000 to the free-market super-PAC Club for Growth Action.

Total giving for 2012 race: $384,100

• Giving to outside-spending groups: $260,000

• Giving to candidates and parties: $124,100

First, if I’m the model train set guy from above, I overcharge Mercer $2M and hope that he’s too embarrassed to sue me. And I am wrong.

Second, let’s look a bit more into the attack ads against DeFazio. Why is he spending so much time to work against this guy? Oh maybe this explains it. DeFazio was trying to impose a small transaction tax to curb high frequency trading, and Renaissance Technologies, where Mercer works, makes its money through high frequency trading on the futures exchanges:

Capitol Hill Democrats introduced legislation today that would impose a tax on financial transactions in order to curb high-frequency trading and force Wall Street to contribute a bigger share to the federal budget.

A measure written by Sen. Tom Harkin, D-Iowa, and Rep. Peter DeFazio, D-Ore., would place a 0.03% levy on financial trading in stocks and bonds at their market value. It also would cover derivative contracts, options, puts, forward contracts and swaps at their purchase price.

So this is how politics works. As Sarkozy mentioned in this Bloomberg article where he was discussing imposing a similar transaction tax in France:

“If France waits for others to tax finance, then finance will never be taxed,” Sarkozy said today in a speech in the eastern French city of Mulhouse.

It seems like the Harkin/DeFazio transaction tax bill is still alive, for now. What’s so worrisome about it? To find out I registered to read this article from Investment News (registration is free), which starts out quite nicely:

Hark: Beware of Harkin tax plan

Investors, beware of the financial transactions tax proposed by Sen. Tom Harkin, D-Iowa, and Rep. Peter DeFazio, D-Ore. The tax may appear to have no chance of adoption at present, with the Republicans in control of the House and in position to block many proposals in the Senate, but the situation could change after the 2012 elections.

If Barack Obama retains the presidency and the Democrats regain control of both houses of Congress, we could see a tax on securities trades, especially if the passions evidenced by the Occupy Wall Street demonstrations remain high.

Woohoo! I love it when people are afraid of Occupy Wall Street, especially when they think they are only talking to their insider friends. After explaining the scope of the tax (again, 3 cents on 100 dollars), the article goes on:

In a breathtaking display of economic ignorance, Mr. Harkin declared: “This measure is not likely to impact the decision to engage in productive economic activity. There’s no question that Wall Street can easily bear this modest tax.”

Does Mr. Harkin not realize that customers, not Wall Street, would pay the tax? As opponents of the proposal argue, the proposed levy — effectively, a sales tax — would increase the cost of investing and be passed on to the ultimate customer, not absorbed by the brokerage firms, hedge funds and other professional traders at whom it is nominally aimed.

In effect, it also is a tax on liquidity. As anyone who has studied economics knows, when you tax something, you get less of it, so the result would be less liquid markets and more-costly transactions.

The article then goes on to warn that such a tax would move business offshore:

Finally, a transactions tax might drive trading and investing offshore to financial centers, such as Singapore or Dubai, that don’t impose such taxes. Academic research suggests that after the imposition of a transactions tax, market volatility would rise, while trading volume —and with it, liquidity — declined.

Just in case you’re not sufficiently worried yet, the article makes some further scary suggestions, which bizarrely allow for the fact that the current plan is benign:

Other dangers regarding the transactions tax proposal are that the low initial tax rate, 0.03%, might be absorbed by investors without too much pain, leading it to be raised quickly to a more burdensome and damaging rate.

That is what happened with the income tax in the U.S., and, more recently, with the value-added tax throughout Europe.

Another danger is that a transactions tax could be extended quickly to other financial transactions, including credit and debit card transactions, checks and bill payments. These likely would be even more damaging to economic activity.

The financial services industry should continue to resist the financial transactions tax, even at the proposed low rate. Once the camel’s nose is in the tent, the whole camel soon follows.

I asked a quant friend of mine what he thought of this article, and he said the following:

I’m personally not a huge fan of transaction taxes because I guess I feel trades should be encouraged (people should, for example, be able to have an S&P500 account instead of a checking account, where you sell units of S&P each time you buy some milk) and in general tight spreads means more actionable information (in the sense of knowing whether a bank is solvent for example, or allocating resources to build a new power plant). In addition, they are often avoided at some additional cost to investors. In the UK, they have a stamp tax on equities, and as a result only a few people trade equities, and almost all funds trade “swaps” with some additional arb/complexity added to the system as a result.

That said, the doomsaying in the article is definitely overboard. It would certainly wipe out a lot of HF trading, which is of limited value to society (I think HF does make for better pricing, but the resources put into it might not match the societal benefit of the slightly more accurate prices).

This would cause some trading to go offshore. In the UK for example, various regulations were (a small) part of the reason ManU decided to list in Singapore. The loss in capital gain taxes are from a bunch of sources, but one of the major ones is sure to be deferred sales, meaning the taxes would just be paid later.

It does make some sort of intuitive sense to match the costs associated with overseeing transactions paid for by the transactions though. If you feel that financial transactions are burdensome and need more regulation, the people who are causing that burden should pay. Whether that should be via a transaction tax or by a profit tax or by an exchange/regulatory fee, I don’t know.

(You know my bias, that it’s really the hidden transactions that are the main issue, and so I think if anything you should be taxing financial intermediaries for having illiquid/non-tradable assets on their books rather than encouraging them to have more of it).

I’m left kind of rooting for the transaction tax, personally, and it’s not just because I want to see Mercer miserable; it can be seen as a tax that most people will not notice but that people who make an enormous number of bets will be affected by. On the one hand, this means it’s a truly progressive tax, and on the other hand, it means you will actually need to think a trade is worthwhile before making it.
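To put rough numbers on that, here is a back-of-the-envelope sketch in Python. The 0.03% rate is the one from the bill as quoted above; the two trader profiles (portfolio size and how often it gets turned over) are invented purely for illustration and are not data about any real investor or fund.

```python
# Back-of-the-envelope sketch: annual cost of a 0.03% transaction tax.
# The rate comes from the bill quoted above; the two profiles are hypothetical.

TAX_RATE = 0.0003  # 0.03%, i.e. 3 cents per 100 dollars traded


def annual_tax(portfolio_value, turnovers_per_year):
    """Tax paid in a year if the whole portfolio is traded `turnovers_per_year` times."""
    traded_volume = portfolio_value * turnovers_per_year
    return traded_volume * TAX_RATE


def tax_as_share_of_portfolio(portfolio_value, turnovers_per_year):
    """Annual tax expressed as a fraction of the portfolio's value."""
    return annual_tax(portfolio_value, turnovers_per_year) / portfolio_value


# Hypothetical retirement saver: $100,000, traded once a year.
saver = tax_as_share_of_portfolio(100_000, 1)

# Hypothetical high-frequency strategy: $100,000, turned over 10 times a day
# for 250 trading days.
hft = tax_as_share_of_portfolio(100_000, 10 * 250)

print(f"saver pays {saver:.2%} of the portfolio per year")
print(f"high-frequency strategy pays {hft:.2%} of the portfolio per year")
```

Under these made-up profiles the saver gives up about 0.03% of the portfolio a year, while the high-turnover strategy gives up about 75%, which is the sense in which the tax only bites if you trade constantly.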

Shareholder value and Adam Smith

I’m reading a fascinating “Ethnography of Wall Street” called Liquidated. This was written by anthropologist Karen Ho, who did her graduate student field work at an investment bank in the mid-1990’s.

As a woman and as a minority, Ho had an interesting, outsider’s view into the culture of investment banking, and the first third of the book describes that culture in painful detail; I might write a further post on her observations, but suffice it to say I recognize her experiences as both shocking (because awful) and totally unsurprising (because familiar).

What I’ve really gotten into in the middle third of the book (not done yet! I’m reading it on my kindle app on the phone on the subway to and from work) is her explanation of the history of the cult of shareholder value. Although she starts in the present and goes backwards, I’ll summarize (and simplify) her narrative by starting earlier and going forward.

Back when Adam Smith wrote his book, most commerce was conducted by small business owners. Smith wrote that the small business owner, by having complete control and by profiting directly from his business, will improve the overall system by acting in his self interest. This is the fundamental belief behind the “invisible hand” theory.

Fast forward to the beginning of the 20th century. The stock market was created, and sold to people, as a way of having ownership of companies without having responsibility, or importantly, control. In other words the fundamental idea of owning a stock was to separate the Adam Smith “ownership/control” concept. Incidentally, Ho has quite a few excellent quotations from Adam Smith explaining that if you do this, your enterprise is destined for failure.

Now move forward to post-World War II. At this point we see the rise of the large corporation. Stockholders continue to own but not control, and managers of the corporations consider the corporations to be social entities, with obligations diffused among stakeholders such as employees, customers, stockholders, and the general public.

At some point it seems that investment bankers, who wanted a bigger piece of this pie, and economists got together and cooked up the shareholder value theory. According to Ho, modern economics at this point in history was still relying on the Adam Smith concept of individuals working in their self-interest. It had no theory of corporations, and didn’t know what to make of them.

Except it did know how to squeeze them into the tiny box they’d already built. In order to do this, though, they had to recombine the concept of ownership and control, and reimagine the corporation as an entity, where the role of the small business owner would be taken by the collection of shareholders.

Essentially, then, the idea was to convince the managers and the market itself that the only stakeholder to really pay attention to is the supposedly unified group of shareholders. The corporation should do everything in its power to increase share price, for the sake of this shareholder which was essentially mute (because they didn’t have power) but for whom the investment banker was more than happy to speak (for a large fee involved in restructuring the corporation). It also resulted in the CEO-as-shareholder concept of stock options etc. so that the CEO would be more aligned with his “natural” duties.

One reason this is a screwy concept: shareholders don’t actually have control, nor do they really want control (or responsibility). Most shareholders want to think of their stocks as fluid, like money, except riskier. Indeed Ho makes the argument, which I buy, that shareholders traded control and responsibility for liquidity, which is more meaningful to them.

Another reason this is a screwy concept: in effect, the only people who actually gained from the “shareholder value” revolution in the 1980’s and 1990’s were the investment bankers themselves, and some of the managers of those corporations. Ho goes into detail on how she reached this conclusion, and her facts are convincing, but I was already convinced by observing the lack of faith in the markets right now by regular shareholders (most people through their 401K’s) and by the monstrous size of the financial system.

I really like the way Ho explains this stuff. In particular I enjoy the way she pokes holes in invented nostalgic histories; she talks about how people generate authenticity for their “shareholder value” theory by inventing a past that never was, when shareholders had more say in the running of the company. In fact she does that more than once, and you start to realize how much you can get away with by relying on people not knowing even recent history.

I am looking forward to the last third of the book!

Happy Baconmas!

Actually Baconmas is not til January 22nd but I wanted to get everyone totally psyched for it so I led with a misleading title. Sorry about that.

What is Baconmas?

Baconmas is a relatively new holiday, celebrated on January 22nd (the birthday of Sir Francis Bacon) to celebrate the sciences, with a side order of bacon.

Here’s what I love about the blog Baconmas. First of all the fun science experiments, second of all the bacon-related recipes, and third of all the fun of making up ridiculous traditions, including:

1. The Boasting Prop

Would it be that bad if we had a time set aside to acknowledge that we’ve done some pretty awesome things? I think not. So set aside some prop at your party (in my case an awesome model brain) as the Boasting X (again, the Boasting Brain in my case), and make the rule that whenever you hold it, you can announce to all around you the coolest thing you’ve done or achieved in the past 12 months, and bask in the applause.

Variations: No statute of limitations on boasting material; require everyone to boast once; allow for increasingly ridiculous fake boasts.

2. Science Book Swap

It’s a bit awkward recommending your favorite books to your friends, or asking to borrow one. We can fix this on Baconmas. Have everyone bring a book (related to some science in some way; sci-fi totally counts) that they’d recommend to others, and that they wouldn’t mind loaning to a random party guest. (Make sure everyone’s written their email or contact info on their offering.) Then when the party starts, anyone can swap books with anyone else, for as long as the party goes! At the end, most everyone should have a new and exciting book to read.

Variations: Instead of having everyone carry their books for the whole time, you could just run a round of “Rob Your Neighbor” in the middle of the party.

3. Great Moments in Science

From the discovery of phosphorus (the first official elemental discovery- a glowing residue produced from giant vats of urine) to the discovery of pulsars (Jocelyn Bell, a grad student at Cambridge, found the signals which were quickly dubbed “little green men”), the history of science is full of moments that would be really neat to re-enact for your friends. In full costume and on video, if you feel like it. (Please think carefully before re-enacting Archimedes’ discovery of fluid displacement.)

Suggestions: In addition to the ones above:

James Young Simpson’s discovery of chloroform as anaesthesia

Marie and Pierre Curie’s discovery of radium

Louis Pasteur’s disproof of spontaneous generation

Erasto Mpemba’s discovery of the Mpemba effect in fluid cooling

Variations: Have one person read a short description of the event, then run it as a Genre Switch improv game: two people act it out ‘normal’ the first time, then take audience suggestions for different genres and act it out again in that style! Alexander Fleming’s discovery of penicillin as an antibiotic should be a lot more interesting when played as a zombie buddy-cop movie…

4. Drunk Science Lectures

Now, I wouldn’t recommend letting your friends get as drunk as the Drunk History stars, but if you’re planning to make it an alcoholic Baconmas, you can’t go wrong with having a knowledgeable friend try and give an impromptu scientific lecture in an inebriated state. And you certainly can’t go wrong by filming this, then using it as the voiceover for a “serious” science video on the topic.

Variations: Instead of having one impaired person try to explain science, you could record the results when your entire party tries to explain a concept, with each word being spoken by the next person in line (like the Whose Line is it Anyway? game Three-Headed Broadway Star– Google it for some hilarious clips).

5. Free Cookies

This one isn’t a party tradition, but a good “day of” tradition. Bake a bunch of homemade cookies, find a busy public place, and give them away for free! Watch a neverending wave of gratitude and confusion. (It goes without saying that you’d better not put anything bad in the cookies. We don’t want a bad reputation here!)

Variation: Compete to invent the best explanation when people ask you why you’re giving away free baked goods- I’ll start you off with “Because cheeseburgers don’t stay tasty nearly as long!”

This guy is just plain funny, and I love his blog. My kids and I are coming up with ideas for the big day. On the list: fun with non-Newtonian fluids.

Open Models (part 1)

A few days ago I posted about how riled up I was to see the Heritage Foundation publish a study about teacher pay which was obviously politically motivated. In the comment section a skeptical reader challenged me on a few things. He had some great points, and I’d love to address them all, but today I will only address the most important one, namely:

…the criticism about this particular study could be leveled at any study funded by any think tank, from the lowly ones, to the more prestigious ones, which have near-academic status (e.g. Brookings or Hoover). But indeed, most social scientists have a political bias. Piketty advised Ségolène Royal. Does it invalidate his study on inequality in America? Rogoff is a republican. Should one dismiss his work on debt crises? I think the best reaction is not to dismiss any study, or any author for that matter, on the basis of their political opinion, even if we dislike their pre-made tweets (which may have been prepared by editors that have nothing to do with the authors, by the way). Instead, the paper should be judged on its own merit. Even if we know we’ll disagree, a good paper can sharpen and challenge our prior convictions.

Agreed! Let’s judge papers on their own merits. However, how can we do that well? Especially when the data is secret and/or the model itself is only vaguely described, it’s impossible. I claim we need to demand more information in such cases, especially when the results of the study are taken seriously and policy decisions are potentially made based on them.

What should we do?

Addressing this problem of verifying modelling results is my goal with defining open source models. I’m not really inventing something new, but rather crystallizing and standardizing something that is already in the air (see below) among modelers who are sufficiently skeptical of the underlying incentives that modelers and their institutions have to look confident.

The basic idea is that we cannot and should not trust models that are opaque. We should all realize how sensitive models are to design decisions and tuning parameters. In the best case, this means we, the public, should have access to the model itself, manifested as a kind of app that we can play with.

Specifically, this means we can play around with the parameters and see how the model changes. We can input new data and see what the model spits out. We can retrain the model altogether with a slightly different assumption, or with new data, or with a different cross validation set.
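As a toy illustration of what “playing with” a model could look like, here is a minimal sketch in Python. Everything in it is hypothetical: the data points, the `fit_line` and `fit_with_cutoff` helpers, and the choice of a one-variable least-squares fit with an outlier cutoff as the tuning parameter are stand-ins for whatever a real study would use. The point is only that when the data and code are available, anyone can change one design decision and watch the answer move.

```python
# Toy "open model": a one-variable least-squares fit whose result depends on
# a design decision (an outlier cutoff). Data and names are hypothetical.


def fit_line(points):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def fit_with_cutoff(points, max_y):
    """Refit after dropping points with y above `max_y` (the tuning parameter)."""
    kept = [(x, y) for x, y in points if y <= max_y]
    return fit_line(kept)


# Made-up data: four points on the line y = 2x, plus one extreme observation.
data = [(1, 2), (2, 4), (3, 6), (4, 8), (5, 30)]

slope_all, _ = fit_with_cutoff(data, max_y=100)  # keep everything
slope_trim, _ = fit_with_cutoff(data, max_y=10)  # treat (5, 30) as an outlier

print(f"slope with all data: {slope_all:.2f}")
print(f"slope with outlier dropped: {slope_trim:.2f}")
```

With these made-up numbers, a single choice about one data point moves the estimated slope from 6 to 2, which is exactly the kind of sensitivity an opaque model hides.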

The technology to allow us to do this all exists – even the various ways we can anonymize sensitive data so that it can still be semi-public. I will go further into how we can put this together in later posts. For now let me give you some indication of how badly this is needed.

Already in the Air

I was heartened yesterday to read this article from Bloomberg written by Victoria Stodden and Samuel Arbesman. In it they complain about how much of science depends on modeling and data, and how difficult it is to confirm studies when the data (and modeling) is being kept secret. They call on federal agencies to insist on data sharing:

Many people assume that scientists the world over freely exchange not only the results of their experiments but also the detailed data, statistical tools and computer instructions they employed to arrive at those results. This is the kind of information that other scientists need in order to replicate the studies. The truth is, open exchange of such information is not common, making verification of published findings all but impossible and creating a credibility crisis in computational science.

Federal agencies that fund scientific research are in a position to help fix this problem. They should require that all scientists whose studies they finance share the files that generated their published findings, the raw data and the computer instructions that carried out their analysis.

The ability to reproduce experiments is important not only for the advancement of pure science but also to address many science-based issues in the public sphere, from climate change to biotechnology.

How bad is it now?

You may think I’m exaggerating the problem. Here’s an article that you should read, in which the case is made that most published research is false. Now, open source modeling won’t fix all of that problem, since a large part of it is the underlying bias that you only publish something that looks important (you never publish results explaining all the things you tried that didn’t look statistically significant).
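The arithmetic behind a claim like that can be sketched in a few lines. This is a simplified version of the standard false-positive argument, and the three inputs below (how many tested hypotheses are actually true, the statistical power, and the significance threshold) are illustrative assumptions of mine, not figures from the article.

```python
# Sketch: what fraction of "significant" findings are false if only significant
# results get published. All inputs are illustrative assumptions.


def false_share_of_published(prior_true, power, alpha):
    """Fraction of statistically significant results that are false positives.

    prior_true: fraction of tested hypotheses that are actually true
    power:      chance a true effect is detected as significant
    alpha:      chance a null effect is (wrongly) found significant
    """
    true_positives = prior_true * power
    false_positives = (1 - prior_true) * alpha
    return false_positives / (true_positives + false_positives)


# Hypothetical field: 1 in 20 tested ideas is true, studies have 50% power,
# and the usual 5% significance threshold is used.
share = false_share_of_published(prior_true=0.05, power=0.5, alpha=0.05)
print(f"{share:.0%} of published findings would be false")
```

Under these assumptions roughly two thirds of the published results are false, even though every individual study used a 5% threshold.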

But think about it, that’s most published research. I’d like to posit that it’s the unpublished research that we should be really worried about. Note that banks and hedge funds don’t ever publish their research, obviously, for proprietary reasons, but that this secrecy does nothing to improve the verifiability problem.

Indeed my experience is that very few people in the bank or hedge fund actually vet the underlying models, partly because they don’t want information to leak and partly because those models are really hard. You may argue that the models are carefully vetted, since big money is often at stake. But I’d reply that actually, you’d be surprised.

How about on the internet? Again, not published, and we don’t have reason to believe that they are more correct than published scientific models. And those models are being used day in and day out and are drawing conclusions about you (what is your credit score, whether you deserve a certain loan) every time you click.

We need a better way to verify models. I will attempt to outline specific ideas of how this should work in further posts.

The Waltons’ money

We have the following statistic, taken from this Reuters article written by Felix Salmon:

In 2007, according to the labor economist Sylvia Allegretto, the six Walton family members on the Forbes 400 had a net worth equal to the bottom 30 percent of all Americans.

To be precise:

The Waltons are now collectively worth about $93 billion, according to Forbes.

Ummmm… that’s obscene under any measurement. But I wouldn’t be posting about it if it ended there, because I think we had some idea how much money Walmart makes, or at least the scale of it.

However, it doesn’t end there. For some reason people who like super income inequality keep coming back with this:

This sounds outrageous, until you stop for a second and take note of the fact that Jeffrey Goldberg, individually, has a net worth greater than the bottom 25% of all Americans.

According to the latest data we have, 24.8% of American households had zero or negative net worth — add them all together, and you get zero.

For context, Jeffrey Goldberg is the guy who pointed out how stinking rich the Waltons are, and Salmon’s point here is to show that Goldberg, by dint of not being in debt, is actually kind of relatively rich.

That’s supposed to make the Waltons’ money okay? How does it make it okay? Here’s an indication that it’s supposed to somehow make it okay:

And while it’s definitely a bad thing that one in four Americans have no net worth at all, I don’t think you can really blame Walmart for that.

I will attempt to understand: we are saying that 25% of the households in this country are either in debt or have zero asset worth. Incidentally, if you are looking for an explanation of why “social mobility” has gone down in this country, look no further than this. We are starting out with a quarter of the people below the water line.

That’s not the point though: I think part of the point is that we are supposed to imagine some of those people in that 25% are recent college graduates with great jobs who will eventually pay off their student debt. I wonder how much, as a percentage, those whippersnappers account for in that 25% of households? Not much. And it doesn’t do anything about the $93 billion statistic we saw earlier. It’s just another shocking statistic that is supposed to make us… what? Feel like those 25% of households are just too damn lazy? I’m not understanding something.

I would posit that we have two extremely depressing statistics here, and one does not make the other one okay. Taken independently, they each make me want to barf.

And just because I’m close to barfing anyway, please allow me to refer to this recent article where former hedge fund manager Andy Kessler explains to us that nobody in this country should complain about being poor since 8-year-olds now own cell phones. Here’s my favorite line of ignorance:

Medical care? Thanks to the market, you can afford a hip replacement and extracapsular cataract extraction and a defibrillator—the costs have all come down with volume. Arthroscopic, endoscopic, laparoscopic, drug-eluting stents—these are all mainstream and engineered to get you up and around in days. They wouldn’t have been invented to service only the 1%.

I’d love to see this guy in a conversation with Elizabeth Warren about the real cost of medical care for the poor.

CBC Radio

If you are in Canada, or if you can figure out how to stream CBC Radio 1 online, then you can hear me talk #Occupy Wall Street stuff this morning at 8:30am Eastern time on their show The Current.

update: there’s a recording of the interview here. I was on with David Sauvage, a very cool man who created this commercial.