Big data and surveillance

You know how, every now and then, you hear someone throw out a statistic that implies almost all of the web is devoted to porn?

Well, that turns out to be a false myth, which you can read more about here – although once upon a time it was kind of true, before women started using the web in large numbers and before there was Netflix streaming.

Here’s another myth along the same lines which I think might actually be true: almost all of big data is devoted to surveillance.

Of course, data is data, and you could define “surveillance” broadly (say as “close observation”), to make the above statement a tautology. To what extent is Google’s data, collected about you, a surveillance database, if they only use it to tailor searches and ads?

On the other hand, something that seems unthreatening now can become creepy soon: recall the NSA whistleblower who last year described how the government stores an enormous amount of the “electronic communications” in this country to keep close tabs on us.

The past

Back in 2011, computerworld.com published an article entitled “Big data to drive a surveillance society” and makes the case that there is a natural competition among corporations with large databases to collect more data, have it more interconnected (knowing now only a person’s shopping habits but also their location and age, say) and have the analytics work faster, even real-time, so they can peddle their products faster and better than the next guy.

Of course, not everyone agrees to talk about this “natural competition”. From the article:

Todd Papaioannou, vice president of cloud architecture at Yahoo, said instead of thinking about big data analytics as a weapon that empowers corporate Big Brothers, consumers should regard it as a tool that enables a more personalized Web experience.

“If someone can deliver a more compelling, relevant experience for me as a consumer, then I don’t mind it so much,” he said.

Thanks for telling us consumers how great this is, Todd. Later in the same article Todd says, “Our approach is not to throw any data away.”

The present

Fast forward to 2013, when defence contractor Raytheon is reported to have a new piece of software, called Riot, which is cutting-edge in the surveillance department.

The name Riot refers to “Rapid Information Overlay Technology” and it can locate individuals with longitude and latitudes, using cell phone data, and make predictions as well, using data scraped from Facebook, Twitter, and Foursquare. A video explains how they do it. From the op-ed:

The possibilities for RIOT are hideous at consumer level. This really is the stalker’s dream technology. There’s also behavioural analysis to predict movements in the software. That’s what Big Data can do, and if it’s not foolproof, there are plenty of fools around the world to try it out on.

US employers, who have been creating virtual Gulags of surveillance for employees with much less effective technology, will love this. “We know what you do” has always been a working option for coercion. The fantastic levels of paranoia involved in the previous generations of surveillance technology will be truly gratified by RIOT.

The future

Lest we think that our children are not as affected by such stalking software, since they don’t spend as much time on social media and often don’t have cellphones, you should also be aware that educational data is now being collected about individual learners in the U.S. at an enormous scale and with very little oversight.

This report from educationnewyork.com (hat tip Matthew Cunningham-Cook) explains recent changes in privacy laws for children, which happen to coincide with how much data is being collected (tons) and how much money is in the analysis of that data (tons):

Schools are a rich source of personal information about children that can be legally and illegally accessed by third parties.With incidences of identity theft, database hacking, and sale of personal information rampant, there is an urgent need to protect students’ rights under FERPA and raise awareness of aspects of the law that may compromise the privacy of students and their families.

In 2008 and 2011, amendments to FERPA gave third parties, including private companies,increased access to student data. It is significant that in 2008, the amendments to FERPA expanded the definitions of “school officials” who have access to student data to include “contractors, consultants, volunteers, and other parties to whom an educational agency or institution has outsourced institutional services or functions it would otherwise use employees to perform.” This change has the effect of increasing the market for student data.

There are lots of contractors and consultants, for example inBloom, and they are slightly less concerned about data privacy issues than you might be:

inBloom has stated that it “cannot guarantee the security of the information stored … or that the information will not be intercepted when it is being transmitted.”

The article ends with this:

The question is: Should we compromise and endanger student privacy to support a centralized and profit-driven education reform initiative? Given this new landscape of an information and data free-for-all, and the proliferation of data-driven education reform initiatives like CommonCore and huge databases of student information, we’ve arrived at a time when once a child enters a public school,their parents will never again know who knows what about their children and about their families. It is now up to individual states to find ways to grant students additional privacy protections.

No doubt about it: our children are well on their way to being the most stalked generation.

Privacy policy

One of the reasons I’m writing this post today is that I’m on a train to D.C. to sit in a Congressional hearing where Congressmen will ask “big data experts” questions about big data and analytics. The announcement is here, and I’m hoping to get into it.

The experts present are from IBM, the NSF, and North Carolina State University. I’m wondering how they got picked and what their incentives are. If I get in I will write a follow-up post on what happened.

Here’s what I hope happens. First, I hope it’s made clear that anonymization doesn’t really work with large databases. Second, I hope it’s clear that there’s no longer a very clear dividing line between sensitive data and nonsensitive data – you’d be surprised how much can be inferred about your sensitive data using only nonsensitive data.

Next, I hope it’s clear that the very people who should be worried the most about their data being exposed and freely available are the ones who don’t understand the threat. This means that merely saying that people should protect their data more is utterly insufficient.

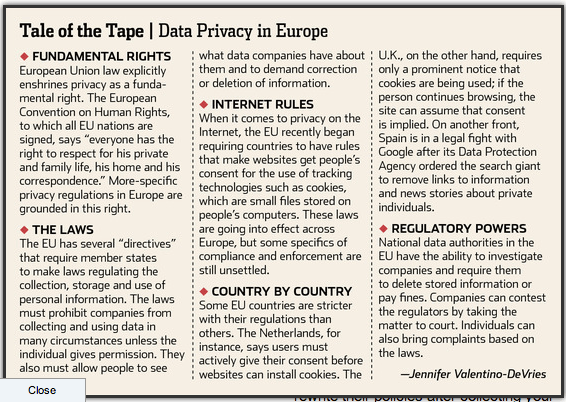

Next, we should understand what policies already in place look like in Europe:

Finally, we should focus not only the collection of data, but on the usage of data. Just because you have a good idea of my age, race, education level, income, and HIV status doesn’t mean you should be able to use that information against me whenever you want.

In particular, it should not be legal for companies that provide loans or insurance to use whatever information they can buy from Acxiom about you. It should be a highly regulated set of data that allows for such decisions.

Had lunch with a former student a few years ago. He now works at the National Security Agency, which has grown dramatically since 9/11. He told me there is a big revolving door, with many employees leaving NSA to join Facebook and Google, and vice versa. I asked, “Why is that?” and he replied without a trace of irony, “Well, we’re all doing more or less the same thing.”

LikeLike

This needs to be more widely distributed. A lot more widely distributed.

LikeLike