Two clarifications

First, I think I over-reacted to automated pricing models (thanks to my buddy Ernie Davis who made me think harder about this). I don’t think immediate reaction to price changes is necessarily odious. I do think it changes the dynamics of price optimization in weird ways, but upon reflection I don’t see how they’d necessarily be bad for the general consumer besides the fact that Amazon will sometimes have weird disruptions much like the flash crashes we’ve gotten used to on Wall Street.

Also, in terms of the question of “accuracy versus discrimination,” I’ve now read the research paper that I believe is under consideration, and it’s more nuanced than my recent blog posts would suggest (thanks to Solon Barocas for help on this one).

In particular, the 2011 paper I referred to defines discrimination crudely, whereas this new article allows for different “base rates” of recidivism. To see the difference, consider a model that assigns a high risk score 70% of the time to blacks and 50% to whites. Assume that, as a group, blacks recidivate at a 70% rate and whites at a 50% rate. The article I referred to would define this as discriminatory, but the newer paper refers to this as “well calibrated.”

Then the question the article tackles is, can you simultaneously ask for a model to be well-calibrated, to have equal false positive rates for blacks and whites, and to have equal false negative rates? The answer is no, at least not unless you are in the presence of equal “base rates” or a perfect predictor.
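Here is a rough sketch of why the three conditions collide. It is my own simplification, not the paper's argument: I stand in for “well-calibrated” with the positive predictive value (PPV), meaning the fraction of people flagged high-risk who actually recidivate, and I use the identity FPR = p/(1-p) * (1-PPV)/PPV * (1-FNR), which follows directly from the definitions of those rates. The PPV and false negative values are made up; the base rates are the 70% and 50% from the example above.

```python
def false_positive_rate(base_rate, ppv, fnr):
    """False positive rate forced by the confusion-matrix identity
    FPR = p/(1-p) * (1-PPV)/PPV * (1-FNR), where p is the base rate."""
    return base_rate / (1 - base_rate) * (1 - ppv) / ppv * (1 - fnr)

# Hypothetical values, held equal for both groups.
ppv = 0.8   # share of "high risk" labels that turn out to be right
fnr = 0.2   # share of actual recidivists who were labeled low risk

fpr_high_base = false_positive_rate(0.7, ppv, fnr)  # group with 70% base rate
fpr_low_base = false_positive_rate(0.5, ppv, fnr)   # group with 50% base rate

print(f"FPR at 70% base rate: {fpr_high_base:.3f}")
print(f"FPR at 50% base rate: {fpr_low_base:.3f}")
```

Once the PPV and the false negative rate are pinned to the same values in both groups, the false positive rate is determined by the base rate alone, so it can only match across groups when the base rates match (or when the predictor is perfect).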

Some comments:

- This is still unsurprising. The three above conditions are mathematical constraints, and there’s no reason to expect that you can simultaneously require a bunch of really different constraints. The authors do the math and show that intuition is correct.

- Many of my comments still hold. The most important one is the question of why the base rates for blacks and whites are so different. If it’s because of police practice, at least in part, or overall increased surveillance of black communities, then I’d argue “well-calibrated” is insufficient.

- We need to be putting the science into data science and examining questions like this. In other words, we cannot assume the data is somehow fixed in stone. All of this is a social construct.

This question has real urgency, by the way. New York Governor Cuomo announced yesterday the introduction of recidivism risk scoring systems to modernize bail hearings. This could be great if fewer people waste time in jail pending their hearings or trials, but if the people chosen to stay in prison are chosen on the basis that they’re poor or minority or both, that’s a problem.

Algorithmic collusion and price-fixing

There’s a fascinating article on the FT.com (hat tip Jordan Weissmann) today about how algorithms can achieve anti-competitive collusion. Entitled Policing the digital cartels and written by David J Lynch, it profiles a classic cinema poster seller that admitted to setting up algorithms for pricing with other poster sellers to keep prices high.

That sounds obviously illegal, and moreover it took work to accomplish. But not all such algorithmic collusion is necessarily so intentional. Here’s the critical paragraph which explains this issue:

As an example, he cites a German software application that tracks petrol-pump prices. Preliminary results suggest that the app discourages price-cutting by retailers, keeping prices higher than they otherwise would have been. As the algorithm instantly detects a petrol station price cut, allowing competitors to match the new price before consumers can shift to the discounter, there is no incentive for any vendor to cut in the first place.
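To make the incentive argument concrete, here is a toy calculation. Every number in it is invented for illustration (two stations, 1,000 customers, prices in cents) and none of it comes from the article or the German app.

```python
def profit_cents(price_cents, cost_cents, customers):
    """Profit for one station: margin per customer times customers served."""
    return (price_cents - cost_cents) * customers

COST = 100             # hypothetical cost per customer, in cents
TOTAL_CUSTOMERS = 1000

# Both stations charge 150 and split the market evenly.
no_cut = profit_cents(150, COST, TOTAL_CUSTOMERS // 2)

# Station A cuts to 140. If the rival reacts slowly, assume A wins 80% of customers.
cut_slow_rival = profit_cents(140, COST, 800)

# If an algorithm lets the rival match instantly, A keeps only its 50% share.
cut_instant_match = profit_cents(140, COST, TOTAL_CUSTOMERS // 2)

print(no_cut, cut_slow_rival, cut_instant_match)
```

Under these assumptions the price cut pays off only when the rival is slow to respond. With instant matching the cutter just earns a thinner margin on the same customers, which is the “no incentive to cut” point in the quote.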

We also don’t seem to have the legal tools to address this:

“Particularly in the case of artificial intelligence, there is no legal basis to attribute liability to a computer engineer for having programmed a machine that eventually ‘self-learned’ to co-ordinate prices with other machines.”

How to fix recidivism risk models

Yesterday I wrote a post about the unsurprising discriminatory nature of recidivism models. Today I want to add to that post with an important goal in mind: we should fix recidivism models, not trash them altogether.

The truth is, the current justice system is fundamentally unfair, so throwing out algorithms because they are also unfair is not a solution. Instead, let’s improve the algorithms and then see if judges are using them at all.

The great news is that the paper I mentioned yesterday has three methods to do just that, and in fact there are plenty of papers that address this question with various approaches that get increasingly encouraging results. Here are brief descriptions of the three approaches from the paper:

- Massaging the training data. In this approach the training data is adjusted so that it has less bias. In particular, the choice of classification is switched for some people in the preferred population from + to -, i.e. from the good outcome to the bad outcome, and there are similar switches for some people in the discriminated population from – to +. The paper explains how to choose these switches carefully (in the presence of continuous scorings with thresholds).

- Reweighing the training data. The idea here is that with certain kinds of models, you can give weights to training data, and with a carefully chosen weighting system you can adjust for bias.

- Sampling the training data. This is similar to reweighing, where the weights will be nonnegative integer values.

In all of these examples, the training data is “preprocessed” so that you can train a model on “unbiased” data, and importantly, at the time of usage, you will not need to know the status of the individual you’re scoring. This is, I understand, legally a critical assumption, since there are anti-discrimination laws which forbid you to “consider” the race of someone when deciding whether to hire them or so on.

In other words, we’re constrained by anti-discrimination law to not use all the information that might help us avoid discrimination. This constraint, generally speaking, prevents us from doing as good a job as possible.
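For the curious, here is a minimal sketch of the reweighing idea. As I understand the paper, each training row gets the weight (expected frequency of its group-and-label combination if group and label were independent) divided by (observed frequency of that combination). The toy data and the function names are mine, not the paper's.

```python
from collections import Counter

def reweigh(groups, labels):
    """One weight per row: expected cell count / observed cell count.
    Assumes every (group, label) combination occurs at least once."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    cell_counts = Counter(zip(groups, labels))
    return [
        group_counts[g] * label_counts[y] / (n * cell_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]

def positive_rate(groups, labels, weights, group):
    """Weighted share of `group` carrying the good outcome "+"."""
    total = sum(w for g, w in zip(groups, weights) if g == group)
    positive = sum(
        w for g, y, w in zip(groups, labels, weights) if g == group and y == "+"
    )
    return positive / total

# Made-up data: 10 favored people (5 labeled +), 10 discriminated people (3 labeled +).
groups = ["favored"] * 10 + ["discriminated"] * 10
labels = ["+"] * 5 + ["-"] * 5 + ["+"] * 3 + ["-"] * 7

unweighted = [1.0] * len(labels)
weights = reweigh(groups, labels)

before = (positive_rate(groups, labels, unweighted, "favored")
          - positive_rate(groups, labels, unweighted, "discriminated"))
after = (positive_rate(groups, labels, weights, "favored")
         - positive_rate(groups, labels, weights, "discriminated"))

print(f"discrimination before: {before:.2f}, after: {after:.2f}")
```

Note that the group label is only used to compute the training weights. A model trained on the weighted rows never needs to see it at scoring time, which is the legal point made above.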

Remarks:

- We might not think that we need to “remove all the discrimination.” Maybe we stratify the data by violent crime convictions first, and then within each resulting bin we work to remove discrimination.

- We might also use the racial and class discrepancies in recidivism risk rates as an opportunity to experiment with interventions that might lower those discrepancies. In other words, why are there discrepancies, and what can we do to diminish them?

- In other words, I do not claim that this is a trivial process. It will in fact require lots of conversations about the nature of justice and the goals of sentencing. Those are conversations we should have.

- Moreover, there’s the question of balancing the conflicting goals of various stakeholders which makes this an even more complicated ethical question.

Recidivism risk algorithms are inherently discriminatory

A few people have been sending me, via Twitter or email, this unsurprising article about how recidivism risk algorithms are inherently racist.

I say unsurprising because I’ve recently read a 2011 paper by Faisal Kamiran and Toon Calders entitled Data preprocessing techniques for classification without discrimination, which explicitly describes the trade-off between accuracy and discrimination in algorithms in the presence of biased historical data (Section 4, starting on page 8).

In other words, when you have a dataset that has a “favored” group of people and a “discriminated” group of people, and you’re deciding on an outcome that has historically been awarded to the favored group more often – in this case, it would be a low recidivism risk rating – then you cannot expect to maximize accuracy and keep the discrimination down to zero at the same time.

Discrimination is defined in the paper as the difference in percentages of people who get the positive treatment among all people in the same category. So if 50% of whites are considered low-risk and 30% of blacks are, that’s a discrimination score of 0.20.
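Written out, with notation that is mine rather than the paper's, that example is:

```latex
\[
\mathrm{disc}
  = P(\text{low-risk} \mid \text{white}) - P(\text{low-risk} \mid \text{black})
  = 0.50 - 0.30
  = 0.20
\]
```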

The paper goes on to show that the trade-off between accuracy and discrimination, which can be achieved through various means, is linear or sub-linear depending on how it’s done. Which is to say, for every 1% loss of discrimination you can expect to lose a fraction of 1% of accuracy.

It’s an interesting paper, well written, and you should take a look. But in any case, what it means in the case of recidivism risk algorithms is that any algorithm that is optimized for “catching the bad guys,” i.e. accuracy, which these algorithms are, and completely ignores the discrepancy between high risk scores for blacks and for whites, can be expected to be discriminatory in the above sense, because we know the data to be biased*.

* The bias is due to the history of heightened scrutiny of black neighborhoods by police which we know as broken windows policing, which makes blacks more likely to be arrested for a given crime, as well as the inherent racism and classism in our justice system itself that was so brilliantly explained by Michelle Alexander in her book The New Jim Crow, which makes them more likely to be severely punished for a given crime.

2017 Resolutions: switch this shit up

Don’t know about you, but I’m sick of New Year’s resolutions, as a concept. They’re flabby goals that we’re meant not only to fail to achieve but to feel bad about personally. No, I didn’t exercise every single day of 2012. No, I didn’t lose 20 pounds and keep it off in 1988.

What’s worst to me is how individual and self-centered they are. They make us focus on how imperfect we are at a time when we should really think big. We don’t have time to obsess over details, people! Just get your coping mechanisms in place and do some heavy lifting, will you?

With that in mind, here are my new-fangled resolutions, which I fully intend to keep:

- Let my kitchen get and stay messy so I can get some goddamned work done.

- Read through these papers and categorize them by how they can be used by social justice activists. Luckily the Ford Foundation has offered me a grant to do just this.

- Love the shit out of my kids.

- Keep up with the news and take note of how bad things are getting, who is letting it happen, who is resisting, and what kind of resistance is functional.

- Play Euclidea, the best fucking plane geometry app ever invented.

- Form a cohesive plan for reviving the Left.

- Gain 10 pounds and start smoking.

Now we’re talking, amIright?

Kindly add your 2017 resolutions as well so I’ll know I’m not alone.

Creepy Tech That Will Turbocharge Fake News

My buddy Josh Vekhter is visiting from his Ph.D. program in computer science and told me about a couple of incredibly creepy technological advances that will soon make our previous experience of fake news seem quaint.

First, there’s a way to edit someone’s speech:

Next, there’s a way to edit a video to insert whatever facial expression you want (I blame Pixar for this one):

Put those two technologies together and you’ve got Trump and Putin having an entirely fictitious but believable conversation on video.

Section 230 isn’t up to the task

Today in my weekly Slate Money podcast I’m discussing the recent lawsuit, brought by the families of the Orlando Pulse shooting victims, against Facebook, Google, and Twitter. They claim the social media platforms aided and abetted the radicalization of the Orlando shooter.

They probably won’t win, because Section 230 of the Communications Decency Act of 1996 protects internet sites from content that’s posted by third parties – in this case, ISIS or its supporters.

The ACLU and the EFF are both big supporters of Section 230, on the grounds that it contributes to a sense of free speech online. I say sense because it really doesn’t guarantee free speech at all, and people are kicked off social media all the time, for random reasons as well as for well-thought-out policies.

Here’s my problem with Section 230, and in particular this line:

No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider

Section 230 treats “platforms” as innocent bystanders in the actions and words of its users. As if Facebook’s money-making machine, and the design of that machine, have nothing to do with the proliferation of fake news. Or as if Google does not benefit directly from the false and misleading information of advertisers on its site, which Section 230 immunizes it from.

The thing is, in this world of fake news, online abuse, and propaganda, I think we need to hold these platforms at least partly responsible. To ignore their contributions would be foolish from the perspective of the public.

I’m not saying I have a magic legal tool to do this, because I don’t, and I’m no legal scholar. It’s also difficult to precisely quantify the externalities of the kinds of problems stemming from a complete indifference and immunization from consequences that the platforms currently enjoy. But I think we need to do something, and I think Section 230 isn’t that thing.

How do you quantify morality?

Lately I’ve been thinking about technical approaches to measuring, monitoring, and addressing discrimination in algorithms.

To do this, I consider the different stakeholders and the relative harm they will suffer depending on mistakes made by the algorithm. It turns out that’s a really robust approach, and one that’s basically unavoidable. Here are three examples to explain what I mean.

- AURA is an algorithm that is being implemented in Los Angeles with the goal of finding child abuse victims. Here the stakeholders are the children and the parents, and the relative harm we need to quantify is the possibility of taking a child away from parents who would not have abused that kid (bad) versus not removing a child from a family that does abuse them (also bad). I claim that, unless we decide on the relative size of those two harms – so, if you assign “unit harm” to the first, then you have to decide what the second harm counts as – and then optimize to it using that ratio in the penalty function, then you cannot really claim you’ve created a moral algorithm. Or, to be more precise, you cannot say you’ve implemented an algorithm in line with a moral decision. Note, for example, that arguments like this are making the assumption that the ratio is either 0 or infinity, i.e. that one harm matters but the other does not.

- COMPAS is a well-known algorithm that measures recidivism risk, i.e. the risk that a given person will end up back in prison within two years of leaving it. Here the stakeholders are the police and civil rights groups, and the harms we need to measure against each other are the possibility of a criminal going free and committing another crime versus a person being jailed in spite of the fact that they would not have gone on to commit another crime. ProPublica has been going head to head with COMPAS’s maker, Northpointe, but unfortunately, the relative weight of these two harms is being sidestepped by both sides.

- Michigan recently acknowledged its automated unemployment insurance fraud detection system, called Midas, was going nuts, accusing upwards of 20,000 innocent people of fraud while filling its coffers with (mostly unwarranted) fines, which it’s now using to balance the state budget. In other words, the program deeply undercounted the harm of charging an innocent person with fraud while it was likely overly concerned with missing out on a fraud fine payment that it deserved. Also it was probably just a bad program.

If we want to build ethical algorithms, we will need to weigh harms against each other and quantify their relative weights. That’s a moral decision, and it’s hard to agree on. Only after we have that difficult conversation can we optimize our algorithms to those choices.
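Here is one simple way the harm ratio could enter an algorithm, as a sketch and not a description of how AURA, COMPAS, or Midas actually work. I assume the model outputs a trustworthy probability that the bad event will happen, and that we intervene whenever the expected harm of doing nothing exceeds the expected harm of intervening. The function names are hypothetical.

```python
def decision_threshold(harm_false_positive, harm_false_negative):
    """Risk level above which intervening has lower expected harm.

    Intervene when  risk * harm_false_negative > (1 - risk) * harm_false_positive,
    which rearranges to  risk > harm_fp / (harm_fp + harm_fn).
    At least one of the two harms must be nonzero.
    """
    return harm_false_positive / (harm_false_positive + harm_false_negative)

def should_intervene(risk, harm_false_positive, harm_false_negative):
    return risk > decision_threshold(harm_false_positive, harm_false_negative)

# Equal harms: act only when the event is more likely than not.
print(decision_threshold(1, 1))

# Missing a true case counted as three times worse than a wrongful intervention.
print(decision_threshold(1, 3))

# The two extremes: a ratio of infinity or of zero.
print(decision_threshold(1, float("inf")))  # always intervene
print(decision_threshold(1, 0))             # never intervene
```

The threshold is nothing more than the harm ratio in disguise, so picking a cutoff without discussing the ratio is still picking a ratio. The last two lines are the “0 or infinity” positions, where one harm is treated as not mattering at all.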

Facebook, the FBI, D&S, and Quartz

If you’re wondering why I don’t write more blog posts, it’s because I’m writing for other stuff all the time now! But the good news is, once those things are published, I can talk about them on the blog.

- I wrote a piece about the Facebook algorithm versus democracy for Nova. TL;DR: Facebook is winning.

- Susan Landau and I wrote a letter to respond to a bad idea about how the FBI should use machine learning. Both were published on the LawFare blog.

- The kind folks at Data & Society met up, read my book, and wrote a bunch of fascinating responses to it.

- Nikhil Sonnad from Quartz published a nice interview with me yesterday and brought along a photographer.

Wednesday Morning Soundtrack

Now that I’m not on Facebook, I have more time to listen to music. Here’s what I’ve got this morning. First, Fare Thee Well from the soundtrack to Llewyn Davis:

Second, my son’s favorite song to sing wherever he happens to be, a Bruno Mars song called Count on Me:

Finally, one of my favorite songs of my favorite band, First Day of My Life by Bright Eyes:

I quit Facebook and my life is better now

I don’t need to hear from all you people who never got on Facebook in the first place. I know you’re already smiling your smug smile. This story is not for you.

But hey, you people who are on Facebook way too much, let me tell you my story.

It’s pretty simple. I was like you, spending more time than I was comfortable with on Facebook. The truth is, I didn’t even go there on purpose. It was more like I’d find myself there, scrolling down in what can only be described as a fetid swamp of echo-chamber-y hyper partisan news, the same old disagreements about the same old topics. So many petitions.

I wasn’t happy but I didn’t really know how to control myself.

Then, something amazing happened. Facebook told me I’d need to change my password for some reason. Maybe someone had tried to log into my account? I’m not sure, I didn’t actually read their message. In any case, it meant that when I went to the Facebook landing page, again without trying to, I’d find myself presented with a “choose a new password” menu.

And you know what I did? I simply refused to choose a new password.

Over the next week, I found myself on that page like maybe 10 times, or maybe 10 times a day, I’m not sure, it all happened very subconsciously. But I never chose a new password, and over time I stopped going there, and now I simply don’t go to Facebook, and I don’t miss it, and my life is better.

That’s not to say I don’t miss anything or anyone on Facebook. Sometimes I wonder how those friends are doing. Then I remember that they’re probably all still there, wondering how they got there.

In the New York Review of Books!

I’m happy to report that Sue Halpern reviewed my book in the New York Review of Books, along with Ariel Ezrachi and Maurice E. Stucke’s Virtual Competition: The Promise and Perils of the Algorithm-Driven Economy.

The review is entitled They Have, Right Now, Another You and in it she calls my book “insightful and disturbing.” So I’m happy.

Using Data Science to do Good: A Conversation

This is a guest post by Roger Stanev and Chris French. Roger Stanev is a data scientist and lecturer at the University of Washington. His work focuses on ethical and epistemic issues concerning the nature and application of statistical modeling and inference, and the relationship between science and democracy. Chris French is a data science enthusiast, and an advocate for social justice. He’s worked on the history of statistics and probability, and writes science fiction in his spare time.

Calling Data Scientists, Data Science Enthusiasts, and Advocates for Civic Liberties and Social Justice. Please join us for an informational and preliminary discussion about how Data Science can be used to do Good!

Throughout Seattle/Tacoma, the state of Washington and the other forty-nine states in America, many non-profit organizations promote causes that are vital to the health, safety and humanity of our friends, families and communities. For the next several years, these social and civic groups will need all the help they can get to resist the increase of fear and hatred – of racism, sexism, xenophobia and bigotry – in our country.

Data Scientists have a unique skill set. They are trained to transform vague and difficult questions – typically questions about human behavior – into empirical, solvable problems.

So here is the question we want to have a conversation about: How can Data Scientists & IT Professionals use their expertise to help answer the current human questions which social and policy-based organizations are currently struggling to address?

What problems will minority and other vulnerable communities face in the coming years? What resources, tools and activities are currently being employed to address these questions? What can data science do, if anything, to help address these questions? Do data scientists or computer professionals have an obligation to assist in promoting social justice? What can we, as data scientists, do to help add and expand the digital tool-belt for these non-profit organizations?

If you’d like to join the conversation, RSVP to ds4goodwa@gmail.com

Saturday, January 14

11am to 1pm @ King County Library (Lake Forest)

17171 Bothell Way NE, Lake Forest Park, WA 98155

Saturday, January 21

11am to 1pm @ Tacoma Public Library

1102 Tacoma Ave S, Tacoma, WA 98402

Saturday, January 28

1 to 3pm @ Seattle Public Library (Capitol Hill)

425 Harvard Ave E, Seattle, WA 98102

Box Cutter Stats

Yesterday I heard a segment on WNYC on the effort to decriminalize box cutters in New York State. I guess it’s up to Governor Cuomo to sign it into law.

During the segment we hear a Latino man who tells his story: he was found by cops to be in possession of a box cutter and spent 10 days in Rikers. He works in construction and having a box cutter is literally a requirement for his job. His point was that the law made it too easy for people with box cutters to end up unfairly in jail.

It made me wonder, who actually gets arrested for possession of box cutters? I’d really like to know. I’m guessing it’s not a random selection of “people with box cutters.” Indeed I’m pretty sure this is almost never a primary reason to arrest a white person at all, man or woman. It likely only happens to people after being stopped and frisked for no particular good reason, and that’s much more likely to happen to minority men. I could be wrong but I’d like to see those stats.

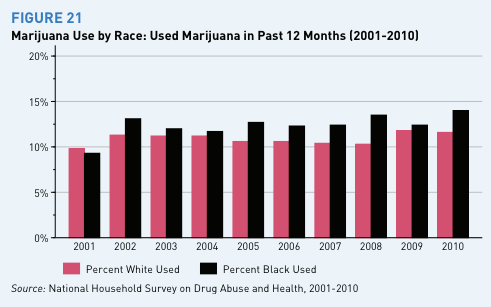

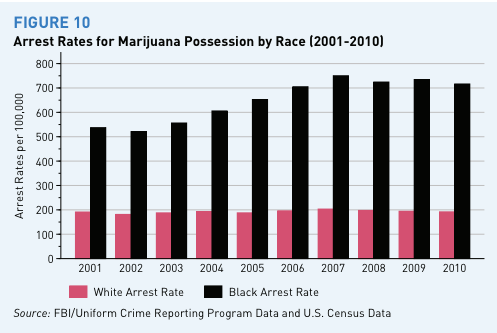

It’s part of a larger statistical question that I think we should tackle: what is the racial discrepancy in arrest rates for other crimes, versus the population that actually commits those other crimes? I know for pot possession it’s extremely biased against blacks:

On the other end of the spectrum, I’d guess murder arrests are pretty equally distributed by race relative to the murdering population. But there’s all kinds of crimes in between, and I’d like some idea of how racially biased the arrests all are. In the case of box cutters, I’m guessing the bias is even stronger than for pot possession.

If we had this data, a statistician could mock up a way to “account for” racial biases in police practices for a given crime record, like we do in polling or any other kind of statistical analysis.

Not that it’s easy to collect; this is honestly some of the most difficult “ground truth” data you can imagine, almost as hard as money in politics. Still, it’s interesting to think about.

Five Stages of Trump-related Grief

Denial. This happened to all of us at first, even people who voted for him. We couldn’t believe it, we were living through cognitive dissonance. We’d wake up in the morning wondering why they were referring to inane tweets on NPR, suddenly realize at lunch time that the Consumer Financial Protection Bureau will probably never do anything significant again. Binge-watching West Wing helped me sustain this stage. They were so damn patriotic and good. Their integrity and well-meaning-ness were leaking onto everything, and although they didn’t have gay marriage, they were progressing towards it, not backing down from it.

Anger. This stage hit me a few days after the election, in spite of my West Wing strategy. It was a rainy, cold day, and everyone I saw on the street looked absolutely pissed. People were bumping into each other more than usual, partly because of the umbrella traffic, partly on purpose. It was dumb rage, as anger always is. Nobody understood what the point was of being there, they just wanted to get home, to eat muffins, to smoke a damn cigarette. I came very close to picking up smoking that day.

Bargaining. For many people, this stage is still happening. I want to snap them out of it, out of the idea that the recounts will work or that the electoral college system will be changed or that electoral college delegates will refuse to do their job. It’s not gonna happen people, and Jill Stein can please stop. And it’s not that I don’t want to recount stuff – why not? – it’s just that the dying hope that it will change the outcome is sad to witness.

Depression. The problem with calling it depression is that people who are realistic, rather than overly optimistic, seem depressed. I’ve got to admit, I was much more prepared for this than most New Yorkers I know. I think it’s because I’ve been in war mode since joining Occupy in 2011. I never thought Hillary would win, that she was a good candidate, or that people’s resentment and anger had been properly addressed. I’ve basically been here, poised for this moment, since Obama introduced HAMP as a shitty and insufficient way to address the financial crisis back in 2009. So you can call it depression, I just call it reality.

Acceptance. And by acceptance I do not mean “normalization.” By acceptance I mean it’s time to move forward, to build things and communities and organizations that will protect the most vulnerable in post-fact America. That could mean giving money, but it should also mean being an activist and coming up with good ideas, like these churches offering sanctuary to undocumented migrants. It also might mean occupying the democratic party – or for that matter, some other party – and reimagining it for the future. Acceptance is not passive, not in this case. Acceptance means accepting our roles as activists and getting shit done.

In NYTimes’ Room for Debate

This morning I’m in the New York Times, having written a short opinion piece on the following Facebook-centered theme:

How to Stop the Spread of Fake News

My actual opinion is entitled Social Media Companies Like Facebook Need to Hire Human Editors.

Tell me what you think!

Miami Book Festival

I had a great time this weekend in Miami, attending the delightful Miami Book Festival with other longlisters (and winners!) of the National Book Award. We each read for about 5 minutes. Here’s a picture of me perched on the edge of the stage Saturday afternoon, getting ready to read, with many cool non-fiction writers:

Before my reading the wonderful Karan Mahajan brought me to a graffiti art area called Wynwood Wall and we were amazed by spray painted walls:

I was supposed to go to a party after that but I made a detour to South Beach, hanging out with the amazing Jonathan Rabb at the Clevelander:

After about 3 mojitos and many many performance artists I fell asleep at about 8pm.

Conclusion: I’m not cool enough for cool things like Miami, but I had a great time.

Facebook should hire me to audit their algorithm

There’s lots of post-election talk that Facebook played a large part in the election, despite Zuckerberg’s denials. Here are some of the various theories going around:

- People shared fake news on their walls, and sometimes Facebook’s “trending algorithm” also messed up and shared fake news. This fake news was created by Russia or by Eastern European teenagers and it distracts and confuses people and goes viral.

- Political advertisements had deep influence through Facebook, and it worked for Trump even better than it worked for Clinton.

- The echo chamber effect, called the “filter bubble,” made people hyper-partisan and the election became all about personality and conspiracy theories instead of actual policy stances. This has been confirmed by a recent experiment on swapping feeds.

If you ask me, I think “all of the above” is probably most accurate. The filter bubble effect is the underlying problem, and at its most extreme you see fake news and conspiracy theories, and a lot of middle ground of just plain misleading, decontextualized headlines that have a cumulative effect on your brain.

Here’s a theory I have about what’s happening and how we can stop it. I will call it “engagement proxy madness.”

It starts with human weakness. People might claim they want “real news” but they are actually very likely to click on garbage gossip rags with pictures of Kardashians or “like” memes that appeal to their already held beliefs.

From the perspective of Facebook, clicks and likes are proxies for interest. Since we click on crap so much, Facebook (and the rest of the online ecosystem) interprets that as a deep interest in crap, even if it’s actually simply exposing a weakness we wish we didn’t have.

Imagine you’re trying to cut down on sugar, because you’re pre-diabetic, but there are M&M’s literally everywhere you look, and every time you stress-eat an M&M, invisible nerds exclaim, “Aha! She actually wants M&M’s!” That’s what I’m talking about, but where you replace M&M’s with listicles.

This human weakness now combines with technological laziness. Since Facebook doesn’t have the interest, commercially or otherwise, to dig in deeper to what people really want in a longer-term sense, our Facebook environments eventually get filled with the media equivalent of junk food.

Also, since Facebook dominates the media advertising world, it creates feedback loops in which newspapers are stuck in the loop of creating junky clickbait stories so they can beg for crumbs of advertising revenue.

This is really a very old story, about how imperfect proxies, combined with influential models, lead to distortions that undermine the original goal. And here the goal was, originally, pretty good: to give people a Facebook feed filled with stuff they’d actually like to see. Instead they’re subjected to immature rants and conspiracy theories.

Of course, maybe I’m wrong. I have very little evidence that the above story is true beyond my experience of Facebook, which is increasingly echo chamber-y, and my observation of hyper-partisanship overall. It’s possible this was entirely caused by something else. I have an open mind if there were evidence that Facebook’s influence on this system is minor.

Unfortunately, Facebook’s data is private and so I cannot audit their algorithm for the effect as an interested observer. That’s why I’d like to be brought in as an outside auditor. The first step in addressing this problem is measuring it.

I already have a company, called ORCAA, which is set up for exactly this: auditing algorithms and quantitatively measuring effects. I’d love Facebook to be my first client.

As for how to address this problem if we conclude there is one: we improve the proxies.

Guest post: we should not get out-imagined again

This is an anonymous guest post.

I am a member of Cathy’s Occupy group, and like a lot of people, had a really bad week. By Sunday I thought I was feeling better. It seemed some of the sadness and shock had passed, and I was developing a resolve about how to move forward.

Then I had a really weird experience Sunday night. I came into the City to attend a black-tie event at the Waldorf in support of an organization I really like, even if it raises a lot of its money from the .001%.

As a labor lawyer and Occupier, it is not my crowd. But I usually find the event amusing. They serve sushi for cocktails and pour Maker’s Mark into wine glasses, like it is wine.

I walked in and immediately felt strange, actually felt really sick. It was like being in an historical re-enactment, precisely because everything was the same. I went for the Maker’s Mark early. It only made the disembodied feeling worse. Nothing, nothing, had changed from years past. The beautiful young women in the exquisite dresses were the same. The conversation among the supremely confident-looking men seemed the same.

I was not the same.

I got another drink and went to my table. Then, like everyone else, I rose for the National Anthem.

I started feeling super weird though, because everyone else was carrying on so completely normally. I thought of kneeling like Colin Kaepernick, but figured my wife would kill me. Then came “My Country, ’Tis of Thee” and the room just started swirling.

I sat down and took a breath. They started introducing the first honoree: Hank Greenberg. Yes, the guy in charge of AIG until shortly before it blew up the world economy. The guy who sued the government alleging that the $182 billion bailout his company got was on inadequately advantageous terms. In other words, one of the guys most responsible for elite behaviors that led to this most awful eruption of fear, resentment and hate, that led to last Tuesday.

And all I heard was the introduction of him as a “Great American”.

I walked briskly out of the room, then ran fast through the hotel halls and down Park Ave to my car. Where I sat, for I don’t know how long, and just cried. Cried like a fucking baby. Cried for having to look out my car window at what seemed now like an unfamiliar place, cried for the kid in the Bronx who doesn’t know yet about the threat people think he poses to “law and order,” cried for the family in some far-off country that doesn’t know about the charade war coming their way to assuage an angry people losing its collective mind over broken empty promises, cried for all the people who, after I’m gone, will live on a chaotic planet my purposefully ignorant country cooked. Damn, I cried hard.

Then I stopped. And I felt a lot better.

It feels so weird to share this publicly, because it is really embarrassing. I really did all that. But I figured out what it was and wanted to say it out loud. It is moral injury. It is real. It hurts. It can make you cry. Don’t try to pretend otherwise. But also take solace that the only way to treat it is to do good anyway.

There are going to be a lot of opportunities. But we should not get out-imagined again, as I surely was. We should shoot really high this time, be really creative about the good we can do.

For example, if you think our national government will remain awful for a long time, you are probably right. So think locally and globally. What stops us from creating real “sanctuary cities,” ones that are sanctuaries in a much wider sense, to all of the people he has declared hated or who otherwise just reject him? And why can’t we make contact, seek advice, and give aid to the 99.9% of the world that is far more affected by this than us, and got no vote? Again, they are the ones who will otherwise get bombed when he starts dumb wars to distract from his mindless policies; and get drowned and fried when he turns up the temperature on the already sizzling planet.

And remember, 2017 is an election year in New York City. Yes, there is another election coming up which it would feel really good to WIN. Let’s demand better. The Left has never said “think nationally” … no, it has always been Local and Global.

Feel the pain; it is real; cry; and then gather a stronger opposing force to treat it by occupying the spaces that remain up for the taking.

WMD press from Germany, Israel, and the Netherlands

The Netherlands’ Vrij Nederland, written by Gerard Janssen:

Germany’s Die Tageszeitung, written by Ingo Arzt:

Israel’s Calcalist, written by Uri Pasovsky: