P-values and power in statistical tests

Today I’m going to do my best to explain Andrew Gelman’s recent intriguing post on his blog for the sake of non-statisticians, including myself (hat tip Catalina Bertani). If you are a statistician, and especially if you are Andrew Gelman, please do correct me if I get anything wrong.

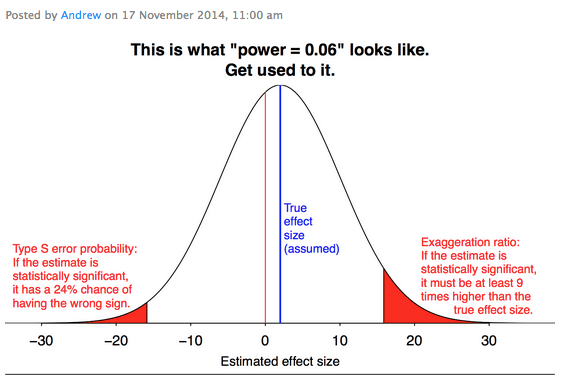

Here’s his post, which more or less consists of one picture:

I decided to explain this to my friend Catalina, because she asked me to, in terms she could understand as a student of midwifery. So I invented a totally fake set-up involving breast-fed versus bottle-fed babies.

Full disclosure: I have three kids who were each breast fed and bottle fed for various lengths of time, and although I was once pretty opinionated about the whole thing, I couldn’t care less at this point, and I don’t think the data is in either (check this out as an example). So I’m not actually trying to make any political point.

Anyhoo, just to make things super concrete, I want to imagine there’s a small difference in weight, say at 5 years of age, between bottle-fed and breast-fed children. Say the true effect is 1.7 pounds at 5 years. Let’s assume that; it’s why the graph above has a blue line at 1.7 with the word “assumed” next to it. You can decide who weighs more, and whether that’s a good thing or not, depending on your politics.

OK, so that’s the underlying “truth” in the situation, but the point is, we don’t actually know it. We can only devise tests to estimate it, and this is where the graph comes in. The graph is showing us the distribution of our estimates of this effect if we have a crappy test.

So, imagine we have a crappy test: something like asking all our neighbors who have had kids recently how they fed their kids and how much those kids weighed at 5 years, then averaging each group and comparing the two averages. That test would be crappy because we probably don’t have very many kids overall, the 5-year check-ups aren’t always exactly at 5 years, the scales might have been wrong, clothes might have thrown off the scale, people might not have reported correctly, or whatever. A crappy test.

Even so, we’d get some answer, and the graph above tells us that, if our test is at a certain level of crappiness (which we’ll get to in a second), our estimate of the difference will very likely come in somewhere between about -22 pounds and +24 pounds. The “most likely” answer would be the correct one, sure, but that doesn’t mean the estimate is all that likely to even come close to the “true effect”, say within 2 pounds of it. If you draw a band of width 4 centered on the “true effect”, you capture only a small percentage of the total area under the curve. Worse, it looks like a good 45% of the area under the curve is in negative territory, so the chances are really very good that the test estimate, at this level of crappiness, would give you the wrong sign. That’s a terrible test!
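
To put rough numbers on this picture, here is a minimal Python sketch. It assumes the test’s estimates follow a normal bell curve centered on the assumed true effect of 1.7 pounds, with a standard error of 8.1 pounds. That standard error is a hypothetical value, not something stated in this post; it was picked because plus or minus 3 standard errors then spans roughly -22 to +26 pounds, close to the range in the graph.

```python
from statistics import NormalDist

TRUE_EFFECT = 1.7     # assumed true difference in weight at 5 years (pounds)
STANDARD_ERROR = 8.1  # HYPOTHETICAL standard error of our crappy test (pounds)

# Sampling distribution of the estimate: the bell curve in the graph.
estimates = NormalDist(mu=TRUE_EFFECT, sigma=STANDARD_ERROR)

# Chance the estimate lands within 2 pounds of the truth (a band of width 4).
p_near_truth = estimates.cdf(TRUE_EFFECT + 2) - estimates.cdf(TRUE_EFFECT - 2)

# Chance the estimate comes out negative, i.e. has the wrong sign.
p_wrong_sign = estimates.cdf(0)

# Range holding about 99.7% of estimates (plus or minus 3 standard errors).
low = TRUE_EFFECT - 3 * STANDARD_ERROR
high = TRUE_EFFECT + 3 * STANDARD_ERROR

print(f"P(within 2 lb of truth) = {p_near_truth:.2f}")
print(f"P(wrong sign)           = {p_wrong_sign:.2f}")
print(f"99.7% range: {low:.1f} to {high:.1f} lb")
```

Under these assumptions only about a fifth of the estimates land within 2 pounds of the truth, and a bit over 40% come out negative, roughly in line with the eyeballed figure from the graph.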

Let’s be a bit more precise now about what we mean by “crappy.” The crappiness of our test is measured by its power, which is defined as the probability that the test correctly rejects the null hypothesis (here, the hypothesis that the “true effect” is zero) when the null hypothesis is false. In other words, power quantifies how well the test can distinguish between the blue line above and the line at zero. So if the bell curve were really, really concentrated around the blue line, much more of the area under the curve would fall in the region where the test declares a result significant, and we’d have a much better test. Alternatively, if the true effect were much stronger, say 25 instead of 1.7, then even with a test this imprecise the power would be much, much higher, because the bulk of the bell curve would lie far to the right of zero, out in the significant region.
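
Once you assume a normal bell curve, power can be computed directly. Here is a minimal Python sketch for a two-sided test at the conventional 5% level. The standard error of 8.1 pounds is a hypothetical value, not from the post. With these inputs the power for a 1.7-pound effect comes out around 0.055, in the same low ballpark as the 0.06 in the graph, and it jumps when the true effect is 25 pounds or when the bell curve is much narrower.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # mean 0, standard deviation 1


def power(true_effect: float, standard_error: float, alpha: float = 0.05) -> float:
    """Power of a two-sided z-test of 'true effect = 0'.

    Assumes the estimate is normally distributed around true_effect with the
    given standard error. Power is the chance the estimate lands in either
    rejection region (more than z_crit standard errors away from zero).
    """
    z_crit = STD_NORMAL.inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    shift = true_effect / standard_error         # true effect in standard-error units
    upper = 1 - STD_NORMAL.cdf(z_crit - shift)   # significant and positive
    lower = STD_NORMAL.cdf(-z_crit - shift)      # significant and negative
    return upper + lower


SE = 8.1  # HYPOTHETICAL standard error of the crappy neighborhood test (pounds)
print(f"true effect 1.7, SE 8.1: power = {power(1.7, SE):.3f}")
print(f"true effect 25,  SE 8.1: power = {power(25, SE):.3f}")
print(f"true effect 1.7, SE 0.5: power = {power(1.7, 0.5):.3f}")  # concentrated curve
```

The third line corresponds to the “really concentrated” bell curve: same small effect, much less noise.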

On the one hand, researchers do estimate power, and they aim for a power of at least 0.80, or 80%, so the power of 0.06 above is indeed extremely low and our test is indeed very crappy by research standards. On the other hand, since researchers are expected to report a power of at least 0.80, there’s probably fudging going on, and we might be trusting tests to be less crappy than they actually are. Also, I am no expert on how to accurately estimate the power of a test, but there’s an example here; in general, power depends on your sample size (how many kids) and the actual effect size, as we have already discussed. It takes way more data to produce evidence of a small effect.
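
To see how much more data a small effect needs, here is a minimal Python sketch using the standard normal-approximation formula for comparing two group means with equal group sizes. The 5-pound standard deviation of 5-year-old weights is a hypothetical value chosen for illustration, not a real figure.

```python
import math
from statistics import NormalDist


def n_per_group(delta: float, sd: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate number of kids needed in EACH group (breast-fed, bottle-fed).

    Normal approximation for a two-sided comparison of two means:
        n = 2 * sd**2 * (z_{1 - alpha/2} + z_{power})**2 / delta**2
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # two-sided critical value, about 1.96
    z_power = z.inv_cdf(power)          # about 0.84 for 80% power
    n = 2 * sd**2 * (z_alpha + z_power) ** 2 / delta**2
    return math.ceil(n)                 # round up to whole children


SD = 5.0  # HYPOTHETICAL standard deviation of weights at 5 years (pounds)
for delta in (1.7, 5.0):
    print(f"difference of {delta} lb: about {n_per_group(delta, SD)} kids per group")
```

With these made-up inputs, detecting a 1.7-pound difference with 80% power takes about 136 kids per group, far more than a neighborhood survey would reach, while a 5-pound difference needs only about 16. Because n scales with 1/delta², halving the effect roughly quadruples the required sample.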

OK so now we have some general sense of what “crappiness” means. But what about the red parts?

Those are the “statistically significant” parts of the distribution. If we did our neighborhood kids test and found an effect of 20 or -20, we’d be totally convinced, even though our test was crap. There are two takeaways from this. First, with a small actual effect and a crappy test, any “statistically significant” estimate wildly overestimates the effect. Second, the red part on the left is about a third of the size of the red part on the right, which is to say that when we get a result that seems “statistically significant” from a crappy test, it still has about a one in four chance of having the wrong sign entirely.
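
Both takeaways can be checked with a small simulation. This minimal Python sketch repeats the crappy test many times, keeps only the “statistically significant” results (the red tails), and asks how often they have the wrong sign and how much they exaggerate the effect. It assumes normally distributed estimates with a hypothetical standard error of 8.1 pounds; the seed and number of simulated studies are arbitrary choices.

```python
import random
from statistics import NormalDist

TRUE_EFFECT = 1.7                      # assumed true effect (pounds)
SE = 8.1                               # HYPOTHETICAL standard error (pounds)
Z_CRIT = NormalDist().inv_cdf(0.975)   # two-sided 5% cutoff, about 1.96
N_STUDIES = 200_000                    # number of simulated crappy studies

rng = random.Random(2014)  # fixed seed so the run is reproducible

# Each simulated study produces one noisy estimate of the effect.
estimates = [rng.gauss(TRUE_EFFECT, SE) for _ in range(N_STUDIES)]

# Keep only the "statistically significant" estimates: the red tails.
significant = [e for e in estimates if abs(e) > Z_CRIT * SE]

share_significant = len(significant) / N_STUDIES               # simulated power
type_s = sum(e < 0 for e in significant) / len(significant)    # wrong-sign share
exaggeration = sum(abs(e) for e in significant) / len(significant) / TRUE_EFFECT

print(f"share significant (power):    {share_significant:.3f}")
print(f"wrong sign among significant: {type_s:.2f}")
print(f"average exaggeration factor:  {exaggeration:.1f}x")
```

Under these assumptions roughly a quarter of the significant results have the wrong sign, and the significant estimates average around ten times the true effect. No estimate can be significant unless it is at least 1.96 × 8.1 ≈ 15.9 pounds away from zero, which is already more than nine times the true 1.7.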

In other words, when we have crappy tests, we just shouldn’t be talking about statistical significance at all. But of course, nobody can publish their results without statistical significance, so there’s that.

Gelman mentions one thing that makes your crappy test slightly less crappy: at least there is only one degree of freedom in the outcome, namely weight. Suppose instead you were looking for any difference at all between breast-fed and bottle-fed children. It could be the number of times they get sick, or performance in math, or performance in reading. There would be a lot more degrees of freedom, and it is likely, especially with a small sample size, that some “statistically significant” difference would appear between the two populations. But that does not mean the result is objectively valid.
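
A minimal Python sketch of why extra degrees of freedom are dangerous: if there is truly no difference on any outcome and each outcome is tested at the 5% level, the chance that at least one comes out “significant” grows quickly with the number of outcomes. This assumes the tests are independent, which real outcomes like sickness, math and reading scores usually are not, so treat the numbers as a rough illustration.

```python
def chance_of_spurious_hit(num_outcomes: int, alpha: float = 0.05) -> float:
    """Chance that at least one of num_outcomes tests is significant when
    there is truly no difference on any of them.

    Assumes the tests are independent (an idealization).
    """
    return 1 - (1 - alpha) ** num_outcomes


for k in (1, 5, 10, 20):
    print(f"{k:2d} outcomes: {chance_of_spurious_hit(k):.0%} chance of a false 'finding'")
```

With 20 independent outcomes, a false “finding” shows up about 64% of the time.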

It seems to me that the real lesson here is that “math is not meaning”. Just because you can find relationships that satisfy certain mathematical formulas for statistical significance doesn’t mean those relationships have any meaning in the real world. As always, we have to be careful in choosing the questions and evaluating the answers.

But one question on the idea of significance. I noticed that rejecting the null hypothesis is treated as equivalent to accepting the alternative hypothesis. But I’m not sure why these two have to be the same thing. Here, rejecting the “zero effect” doesn’t mean that you really know the actual effect. (Wasn’t that the whole point of the exercise?)

Andrew Abbott said:

“The idea of variables was a great idea. But its day as an exciting source of knowledge is gone.” (Of Time and Space, 1997: 1165)

“But one question on the idea of significance. I noticed that rejecting the null hypothesis is treated as equivalent to accepting the alternative hypothesis. But I’m not sure why these two have to be the same thing. Here, rejecting the “zero effect” doesn’t mean that you really know the actual effect. (Wasn’t that the whole point of the exercise?)”

I am learning statistics, so let me try to explain this (and sorry for my grammar, I’m not a native English speaker). You’re right: rejecting the “zero effect” doesn’t mean that you really know the actual effect.

Rejecting the null hypothesis is only that, a rejection of the null hypothesis. You can’t accept THE ONE alternative hypothesis, because there are many different alternative hypotheses: they can differ in the size (or the direction) of the difference between breast-fed and bottle-fed babies, and each one would have to be judged on its own. You could accept a particular alternative hypothesis only if you knew the size and direction of the difference before running the test, and you usually don’t. If you knew it, why run the test?!

So the p-value cutoff for a null hypothesis can be thought of as marking an effect size, in a given direction, that we’d consider too extreme to be consistent with the null hypothesis. Fixing that cutoff for a test is an arbitrary decision, so you need to set it in a way that is meaningful for your test. For example, for breast-fed vs. bottle-fed you must decide what difference in the babies’ weight you would consider big; let’s call that delta. We can use that delta to set an alternative hypothesis.

That delta can be translated into a p-value cutoff (alpha) and two curves. A quick and dirty explanation: you can adjust a bell-shaped curve like the one at the top by changing the sample size, where a bigger sample size means a narrower bell curve and vice versa. But you need two curves: one centered at zero and the other centered at delta, both with the same sample size.

Here is an illustration (http://rpsychologist.com/creating-a-typical-textbook-illustration-of-statistical-power-using-either-ggplot-or-base-graphics).

So the trick is this: with a sample size of N, you need to choose the p-value cutoff (alpha) on the null-hypothesis curve so that a good share of the alternative-hypothesis curve lies beyond that cutoff. That share is the statistical power.

The problem with choosing only a p-value cutoff is that usually the two hypothesis curves are too close together, as in Gelman’s example. In that case, you’ll find an effect only if you’re extremely lucky (only 6% of your alternative-hypothesis curve lies beyond your cutoff) or if your data are extremely unusual (they fall in the extreme 5% of your null-hypothesis curve).

It’s safer (and good practice) to use a bigger sample size, because that makes both curves narrower, so they separate more easily for the same delta. But that costs money, usually a lot of money.
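
The two-curve picture above can be sketched in a few lines of Python. This is a minimal illustration, assuming a two-sided test at alpha = 0.05, a normal approximation for the difference between two group means, and hypothetical values of delta = 1.7 pounds and a 5-pound standard deviation of weights. It builds the null curve at zero and the alternative curve at delta with the same width, finds the cutoff on the null curve, and measures how much of the alternative curve lies beyond it.

```python
import math
from statistics import NormalDist


def power_from_two_curves(delta: float, sd: float, n_per_group: int,
                          alpha: float = 0.05) -> float:
    """Share of the alternative curve beyond the null curve's cutoffs.

    Both curves have the same width: the standard error of a difference in
    two group means, sd * sqrt(2 / n_per_group). A bigger sample gives
    narrower curves.
    """
    se = sd * math.sqrt(2 / n_per_group)
    null_curve = NormalDist(0, se)      # centered at zero
    alt_curve = NormalDist(delta, se)   # centered at delta
    cutoff = null_curve.inv_cdf(1 - alpha / 2)  # edge of the extreme 5%, two-sided
    # Area of the alternative curve past either cutoff = power.
    return (1 - alt_curve.cdf(cutoff)) + alt_curve.cdf(-cutoff)


DELTA = 1.7  # HYPOTHETICAL difference we care about (pounds)
SD = 5.0     # HYPOTHETICAL standard deviation of weights (pounds)
for n in (10, 50, 136, 400):
    print(f"n = {n:3d} per group: power = {power_from_two_curves(DELTA, SD, n):.2f}")
```

Under these assumptions power climbs from roughly 0.12 with 10 kids per group to about 0.80 at 136 and nearly 1 at 400, which is the “narrower curves separate more easily” point in numbers.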

Thanks, edivimo! Your explanation was very helpful. And your grammar was quite excellent too! 🙂

I am not completely thrilled with what’s being said in Andrew’s piece. It seems like the point is that using p-values as an initial filter for a hypothesis test, and then using that same sample result as an estimate of the population parameter, leads to biased estimates (especially in the case of low-power tests). But I don’t see that being the argument in the Durante paper; in fact, I don’t see anything in the Durante paper, because I can’t find it and I’ve been looking for an hour. Even the Griskevicius professional page has a broken link where it claims to have that paper. Does anyone have a link to that paper so I can see where the heck this supposed 0.06 power study comes from? If Durante is simply claiming statistical significance for the test, then yes, a small sample size and a huge effect really would be arguments in Durante’s favor on that point, but using that result as an estimate of the true effect would be a separate issue. I certainly wouldn’t be surprised to see other methodological errors, however, and replicability is of course the real acid test.

This reminds me of the great Ioannidis paper that flags the accumulating collection of issues that should reduce our confidence in published research:

http://www.plosmedicine.org/article/info%3Adoi%2F10.1371%2Fjournal.pmed.0020124
