Archive

How to build a model that will be gamed

I can’t help but think that the new Medicare readmissions penalty, as described by the New York Times, is going to lead to widespread gaming. It has all the elements of a perfect gaming storm. First of all, a clear economic incentive:

Medicare last month began levying financial penalties against 2,217 hospitals it says have had too many readmissions. Of those hospitals, 307 will receive the maximum punishment, a 1 percent reduction in Medicare’s regular payments for every patient over the next year, federal records show.

It also has the element of unfairness:

“Many of us have been working on this for other reasons than a penalty for many years, and we’ve found it’s very hard to move,” Dr. Lynch said. He said the penalties were unfair to hospitals with the double burden of caring for very sick and very poor patients.

“For us, it’s not a readmissions penalty,” he said. “It’s a mission penalty.”

And the smell of politics:

In some ways, the debate parallels the one on education — specifically, whether educators should be held accountable for lower rates of progress among children from poor families.

“Just blaming the patients or saying ‘it’s destiny’ or ‘we can’t do any better’ is a premature conclusion and is likely to be wrong,” said Dr. Harlan Krumholz, director of the Center for Outcomes Research and Evaluation at Yale-New Haven Hospital, which prepared the study for Medicare. “I’ve got to believe we can do much, much better.”

Oh wait, we already have weird side effects of the new rule:

With pressure to avert readmissions rising, some hospitals have been suspected of sending patients home within 24 hours, so they can bill for the services but not have the stay counted as an admission. But most hospitals are scrambling to reduce the number of repeat patients, with mixed success.

Note that the new policy is itself a reaction to gaming that’s already there, caused by the stupid way Medicare decides how much to pay for treatment (emphasis mine):

Hospitals’ traditional reluctance to tackle readmissions is rooted in Medicare’s payment system. Medicare generally pays hospitals a set fee for a patient’s stay, so the shorter the visit, the more revenue a hospital can keep. Hospitals also get paid when patients return. Until the new penalties kicked in, hospitals had no incentive to make sure patients didn’t wind up coming back.

How about, instead of adding a weird rule that compromises people’s health and especially punishes poor sick people and the hospitals that treat them, we instead improve the original billing system? Otherwise we are certain to see all sorts of weird effects in the coming years with people being stealth readmitted under different names or something, or having to travel to different hospitals to be seen for their congestive heart failure.

Columbia Data Science course, week 13: MapReduce

This week in Rachel Schutt’s Data Science course at Columbia we had two speakers.

The first was David Crawshaw, a Software Engineer at Google who was trained as a mathematician, worked on Google+ in California with Rachel, and now works in NY on search.

David came to talk to us about MapReduce and how to deal with too much data.

Thought Experiment

Let’s think about information permissions and flow when it comes to medical records. David related a story wherein doctors estimated that 1 or 2 patients died per week in a certain smallish town because of the lack of information flow between the ER and the nearby mental health clinic. In other words, if the records had been easier to match, they’d have been able to save more lives. On the other hand, if it had been easy to match records, other breaches of confidence might also have occurred.

What is the appropriate amount of privacy in health? Who should have access to your medical records?

Comments from David and the students:

- We can assume we think privacy is a generally good thing.

- Example: to be an atheist is punishable by death in some places. It’s better to be private about stuff in those conditions.

- But it takes lives too, as we see from this story.

- Many egregious violations happen in law enforcement, where you have large databases of license plates etc., and people who have access abuse it. In this case it’s a human problem, not a technical problem.

- It’s also a philosophical problem: to what extent are we allowed to make decisions on behalf of other people?

- It’s also a question of incentives. I might cure cancer faster with more medical data, but I can’t withhold the cure from people who didn’t share their data with me.

- To a given person it’s a security issue. People generally don’t mind if someone has their data, they mind if the data can be used against them and/or linked to them personally.

- It’s super hard to make data truly anonymous.

MapReduce

What is big data? It’s a buzzword mostly, but it can be useful. Let’s start with this:

You’re dealing with big data when you’re working with data that doesn’t fit into your compute unit. Note that’s an evolving definition: big data has been around for a long time. The IRS had taxes before computers.

Today, big data means working with data that doesn’t fit in one computer. Even so, the size of big data changes rapidly. Computers have experienced exponential growth for the past 40 years. We have at least 10 years of exponential growth left (and I said the same thing 10 years ago).

Given this, is big data going to go away? Can we ignore it?

No, because although the capacity of a given computer is growing exponentially, those same computers also make the data. The rate of new data is also growing exponentially. So there are actually two exponential curves, and they won’t intersect any time soon.

Let’s work through an example to show how hard this gets.

Word frequency problem

Say you’re told to find the most frequent words in the following list: red, green, bird, blue, green, red, red.

The easiest approach for this problem is inspection, of course. But now consider the problem for lists containing 10,000, or 100,000, or many more words.

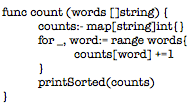

The simplest approach is to list the words and then count their prevalence. Here’s an example code snippet from the language Go:

Since counting and sorting are fast, this scales to ~100 million words. The limit is now computer memory – if you think about it, you need to get all the words into memory twice.
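As a minimal sketch of the count-then-sort approach (written in Python here rather than the Go mentioned above; the function name `most_frequent` is made up for illustration):

```python
from collections import Counter

def most_frequent(words, n=1):
    """Return the n most frequent words as (word, count) pairs."""
    counts = Counter(words)  # one pass over the list to count
    # Sort by descending count, breaking ties alphabetically so the
    # result is deterministic.
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))[:n]

words = ["red", "green", "bird", "blue", "green", "red", "red"]
print(most_frequent(words, 2))  # [('red', 3), ('green', 2)]
```

Both the input list and the table of counts live in memory here, which is the limit described above.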

We can modify it slightly so it doesn’t have to have all words loaded in memory: keep them on the disk and stream them in by using a channel instead of a list. A channel is something like a stream: you read in the first 100 items, then process them, then you read in the next 100 items.
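A rough Python analogue of the streaming idea uses a generator in place of a Go channel. This is only a sketch: `stream_words` and `count_stream` are hypothetical names, and an in-memory `StringIO` stands in for a file on disk.

```python
import io
from collections import Counter

def stream_words(handle):
    """Yield words one at a time from a file-like object, line by line."""
    for line in handle:
        for word in line.split():
            yield word

def count_stream(handle):
    """Count words without ever holding the full word list in memory."""
    counts = Counter()
    for word in stream_words(handle):
        counts[word] += 1
    return counts

# Stand-in for a large file on disk.
fake_file = io.StringIO("red green bird\nblue green red\nred\n")
stream_counts = count_stream(fake_file)
print(stream_counts.most_common(1))  # [('red', 3)]
```

Only the table of counts is kept in memory, so the memory cost depends on the number of distinct words rather than the length of the input.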

Wait, there’s still a potential problem, because if every word is unique your program will still crash; it will still be too big for memory. On the other hand, this will probably work nearly all the time, since nearly all the time there will be repetition. Real programming is a messy game.

But computers nowadays are many-core machines, let’s use them all! Then the bandwidth will be the problem, so let’s compress the inputs… There are better alternatives that get complex. A heap of hashed values has a bounded size and can be well-behaved (a heap seems to be something like a poset, and I guess you can throw away super small elements to avoid holding everything in memory). This won’t always work but it will in most cases.
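One well-known way to keep a bounded amount of state while looking for frequent words is the Misra-Gries summary. It is swapped in here purely for illustration and is not necessarily the hashed heap David described. It keeps at most `k - 1` counters and guarantees that any word occurring more than `n / k` times in a stream of `n` words is still present at the end, although its stored count is an underestimate.

```python
def misra_gries(stream, k):
    """Keep at most k-1 counters over the stream (Misra-Gries summary)."""
    counters = {}
    for word in stream:
        if word in counters:
            counters[word] += 1
        elif len(counters) < k - 1:
            counters[word] = 1
        else:
            # No room left: decrement every counter and drop the ones
            # that reach zero. This is the "throw away small elements" step.
            for key in list(counters):
                counters[key] -= 1
                if counters[key] == 0:
                    del counters[key]
    return counters

words = ["red", "green", "bird", "blue", "green", "red", "red"]
summary = misra_gries(words, k=3)
print(summary)  # {'red': 1}
```

With `k=3` only two counters are ever held, and "red" (3 of 7 words, more than 7/3) survives. A second pass over the data would be needed to get exact counts for the survivors.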

Now we can deal with on the order of 10 trillion words, using one computer.

Now say we have 10 computers. This will get us 100 trillion words. Each computer has 1/10th of the input. Let’s get each computer to count up its share of the words. Then have each send its counts to one “controller” machine. The controller adds them up and finds the highest to solve the problem.
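The worker-and-controller arrangement can be simulated on one machine. In this sketch the "machines" are just list slices, and `split_into_shards`, `worker_count`, and `controller_merge` are hypothetical names:

```python
from collections import Counter

def split_into_shards(words, n_machines):
    """Deal words round-robin into shards (a stand-in for real data placement)."""
    return [words[i::n_machines] for i in range(n_machines)]

def worker_count(shard):
    """What each machine does: count its own share of the words."""
    return Counter(shard)

def controller_merge(partial_counts):
    """What the controller does: add up the partial counts."""
    total = Counter()
    for partial in partial_counts:
        total.update(partial)  # Counter.update adds counts together
    return total

words = ["red", "green", "bird", "blue", "green", "red", "red"]
shards = split_into_shards(words, 3)
merged = controller_merge(worker_count(s) for s in shards)
print(merged.most_common(1))  # [('red', 3)]
```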

We can do the above with hashed heaps too, if we first learn network programming.

Now take a hundred computers. We can process a thousand trillion words. But then the “fan-in”, where the results are sent to the controller, will break everything because of bandwidth problems. We need a tree, where every group of 10 machines sends data to one local controller, and then the local controllers all send to a super controller. This will probably work.
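The tree-shaped fan-in can be sketched as a level-by-level merge in which no controller ever receives more than `fan_in` inputs. The name `tree_merge` and the simulated partial counts are assumptions for illustration only.

```python
from collections import Counter

def tree_merge(partials, fan_in=10):
    """Merge partial counts in levels; each merge handles at most fan_in inputs.

    fan_in must be at least 2, otherwise the levels never shrink.
    """
    level = list(partials)
    if not level:
        return Counter()
    while len(level) > 1:
        # Each slice of fan_in partial counts goes to one local controller.
        level = [
            sum(level[i:i + fan_in], Counter())
            for i in range(0, len(level), fan_in)
        ]
    return level[0]

# 100 simulated machines, each of which saw "red" once plus one unique word.
partials = [Counter({"red": 1, f"w{i}": 1}) for i in range(100)]
total = tree_merge(partials)
print(total["red"])  # 100
```

With 100 machines and a fan-in of 10 there are two levels: ten local controllers, then one super controller.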

But… can we do this with 1000 machines? No. It won’t work. Because at that scale one or more computers will fail. If we denote by $X$ the variable which exhibits whether a given computer is working, so $X=0$ means it works and $X=1$ means it’s broken, then we can assume $P(X=0) = 1 - \epsilon$.

But this means, when you have 1000 computers, that the chance that no computer is broken is $(1-\epsilon)^{1000}$, which is generally pretty small even if $\epsilon$ is small. So if $\epsilon = 0.001$ for each individual computer, then the probability that all 1000 computers work is 0.37, less than even odds. This isn’t sufficiently robust.
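The arithmetic is easy to check. Here `epsilon` is the chance that a single machine is broken, and failures are assumed to be independent:

```python
def prob_all_working(n_machines, epsilon):
    """Probability that none of n independent machines is broken."""
    return (1 - epsilon) ** n_machines

p = prob_all_working(1000, 0.001)
print(round(p, 2))  # 0.37
```

The same function shows how quickly things degrade as the cluster grows: with the same per-machine failure rate, the probability for 10 machines stays close to 0.99.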

We address this problem by talking about fault tolerance for distributed work. This usually involves replicating the input (the default is to have three copies of everything), and making the different copies available to different machines, so if one blows another one will still have the good data. We might also embed checksums in the data, so the data itself can be audited for errors, and we will automate monitoring by a controller machine (or maybe more than one?).

In general we need to develop a system that detects errors, and restarts work automatically when it detects them. To add efficiency, when some machines finish, we should use the excess capacity to rerun work, checking for errors.
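A toy version of "replicate, checksum, and fall back" might look like the following. It is a sketch under simple assumptions: three in-memory copies stand in for replicas on different machines, SHA-256 is an arbitrary choice of checksum, and `read_with_fallback` is a hypothetical helper.

```python
import hashlib

def checksum(data: bytes) -> str:
    """Checksum used to audit a block of data for corruption."""
    return hashlib.sha256(data).hexdigest()

def read_with_fallback(replicas, expected):
    """Return the first replica whose checksum matches the expected one."""
    for copy in replicas:
        if checksum(copy) == expected:
            return copy
    raise IOError("all replicas failed checksum verification")

good = b"red green bird blue green red red"
expected = checksum(good)
replicas = [b"red green b\x00rd", good, good]  # the first copy is corrupted
recovered = read_with_fallback(replicas, expected)
print(recovered == good)  # True
```

A real system would also re-replicate the damaged copy and restart any work that had used it.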

Q: Wait, I thought we were counting things?! This seems like some other awful rat’s nest we’ve gotten ourselves into.

A: It’s always like this. You cannot reason about the efficiency of fault tolerance easily, everything is complicated. And note, efficiency is just as important as correctness, since a thousand computers are worth more than your salary. It’s like this:

- The first 10 computers are easy,

- The first 100 computers are hard, and

- The first 1,000 computers are impossible.

There’s really no hope. Or at least there wasn’t until about 8 years ago. At Google I use 10,000 computers regularly.

In 2004 Jeff Dean and Sanjay Ghemawat published their paper on MapReduce (and here’s one on the underlying file system).

MapReduce allows us to stop thinking about fault tolerance; it is a platform that does the fault tolerance work for us. Programming 1,000 computers is now easier than programming 100. It’s a library to do fancy things.

To use MapReduce, you write two functions: a mapper function, and then a reducer function. It takes these functions and runs them on many machines which are local to your stored data. All of the fault tolerance is automatically done for you once you’ve placed the algorithm into the map/reduce framework.

The mapper takes each data point and produces an ordered pair of the form (key, value). The framework then sorts the outputs via the “shuffle”, and in particular finds all the keys that match and puts them together in a pile. Then it sends these piles to machines which process them using the reducer function. The reducer function’s outputs are of the form (key, new value), where the new value is some aggregate function of the old values.

So how do we do it for our word counting algorithm? For each word, just send it to the ordered pair with the key being that word and the value being the integer 1. So

red —> (“red”, 1)

blue —> (“blue”, 1)

red —> (“red”, 1)

Then they go into the “shuffle” (via the “fan-in”) and we get a pile of (“red”, 1)’s, which we can rewrite as (“red”, 1, 1). This gets sent to the reducer function which just adds up all the 1’s. We end up with (“red”, 2), (“blue”, 1).

Key point: one reducer handles all the values for a fixed key.
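The whole flow fits in a few lines when simulated on one machine. This is a sketch of the programming model only, with none of the distribution or fault tolerance that the real framework provides; the function names are chosen for illustration.

```python
from collections import defaultdict

def mapper(word):
    """Map step: each word becomes the pair (word, 1)."""
    return (word, 1)

def shuffle(pairs):
    """Shuffle step: collect all values that share a key into one pile."""
    piles = defaultdict(list)
    for key, value in pairs:
        piles[key].append(value)
    return piles

def reducer(key, values):
    """Reduce step: one call sees every value for a fixed key."""
    return (key, sum(values))

def map_reduce(words):
    pairs = [mapper(w) for w in words]
    piles = shuffle(pairs)
    return dict(reducer(k, v) for k, v in piles.items())

result = map_reduce(["red", "blue", "red"])
print(result)  # {'red': 2, 'blue': 1}
```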

Got more data? Increase the number of map workers and reduce workers. In other words do it on more computers. MapReduce flattens the complexity of working with many computers. It’s elegant and people use it even when they “shouldn’t” (although, at Google it’s not so crazy to assume your data could grow by a factor of 100 overnight). Like all tools, it gets overused.

Counting was one easy function, but now it’s been split up into two functions. In general, converting an algorithm into a series of MapReduce steps is often unintuitive.

For the above word count, distribution needs to be uniform. If all your words are the same, they all go to one machine during the shuffle, which causes huge problems. Google has solved this using hash buckets heaps in the mappers in one MapReduce iteration. It’s called CountSketch, and it is built to handle odd datasets.
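A simpler, standard way to soften this kind of skew is a combiner: each mapper adds up its own counts before the shuffle, so a heavily repeated word produces one pair per mapper instead of one pair per occurrence. This is not the CountSketch approach mentioned above, just a sketch of the general idea with hypothetical function names.

```python
from collections import Counter

def mapper_with_combiner(shard):
    """Emit one (word, local_count) pair per distinct word in the shard."""
    return list(Counter(shard).items())

def reducer(key, values):
    """Sum the pre-aggregated counts for one key."""
    return (key, sum(values))

# Worst case for the plain word count: every word is the same.
shards = [["red"] * 1000, ["red"] * 1000, ["red"] * 1000]
emitted = [pair for shard in shards for pair in mapper_with_combiner(shard)]
print(len(emitted))  # 3 pairs cross the network instead of 3000
print(reducer("red", [count for _, count in emitted]))  # ('red', 3000)
```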

At Google there’s a realtime monitor for MapReduce jobs, a box with “shards” which correspond to pieces of work on a machine. It indicates through a bar chart how the various machines are doing. If all the mappers are running well, you’d see a straight line across. Usually, however, everything goes wrong in the reduce step due to non-uniformity of the data – lots of values on one key.

The data preparation and writing the output, which take place behind the scenes, take a long time, so it’s good to try to do everything in one iteration. Note we’re assuming a distributed file system is already there – indeed we have to use MapReduce to get data to the distributed file system – once we start using MapReduce we can’t stop.

Once you get into the optimization process, you find yourself tuning MapReduce jobs to shave off nanoseconds ($10^{-9}$ seconds) whilst processing petabytes of data. These are order shifts worthy of physicists. This optimization is almost all done in C++. It’s highly optimized code, and we try to scrape out every ounce of power we can.

Josh Wills

Our second speaker of the night was Josh Wills. Josh used to work at Google with Rachel, and now works at Cloudera as a Senior Director of Data Science. He’s known for the following quote:

Data Scientist (n.): Person who is better at statistics than any software engineer and better at software engineering than any statistician.

Thought experiment

How would you build a human-powered airplane? What would you do? How would you form a team?

Student: I’d run an X prize. Josh: this is exactly what they did, for $50,000 in 1950. It took 10 years for someone to win it. The story of the winner is useful because it illustrates that sometimes you are solving the wrong problem.

The first few teams spent years planning and then their planes crashed within seconds. The winning team changed the question to: how do you build an airplane you can put back together in 4 hours after a crash? After quickly iterating through multiple prototypes, they solved this problem in 6 months.

Josh had some observations about the job of a data scientist:

- I spend all my time doing data cleaning and preparation. 90% of the work is data engineering.

- On solving problems vs. finding insights: I don’t find insights, I solve problems.

- Start with problems, and make sure you have something to optimize against.

- Parallelize everything you do.

- It’s good to be smart, but being able to learn fast is even better.

- We run experiments quickly to learn quickly.

Data abundance vs. data scarcity

Most people think in terms of scarcity. They are trying to be conservative, so they throw stuff away.

I keep everything. I’m a fan of reproducible research, so I want to be able to rerun any phase of my analysis. I keep everything.

This is great for two reasons. First, when I make a mistake, I don’t have to restart everything. Second, when I get new sources of data, it’s easy to integrate in the point of the flow where it makes sense.

Designing models

Models always turn into crazy Rube Goldberg machines, a hodge-podge of different models. That’s not necessarily a bad thing, because if they work, they work. Even if you start with a simple model, you eventually add a hack to compensate for something. This happens over and over again, it’s the nature of designing the model.

Mind the gap

The thing you’re optimizing with your model isn’t the same as the thing you’re optimizing for your business.

Example: friend recommendations on Facebook don’t optimize for you accepting friends, but rather for the time you spend on Facebook. Look closely: the suggestions are surprisingly highly populated by attractive people of the opposite sex.

How does this apply in other contexts? In medicine, they study the effectiveness of a drug instead of the health of the patients. They typically focus on success of surgery rather than well-being of the patient.

When I graduated in 2001, we had two options for file storage.

1) Databases:

- structured schemas

- intensive processing done where data is stored

- somewhat reliable

- expensive at scale

2) Filers:

- no schemas

- no data processing capability

- reliable

- expensive at scale

Since then we’ve started generating lots more data, mostly from the web. It brings up the natural idea of a data economic indicator, return on byte. How much value can I extract from a byte of data? How much does it cost to store? If we take the ratio, we want it to be bigger than one or else we discard.

Of course this isn’t the whole story. There’s also a big data economic law, which states that no individual record is particularly valuable, but having every record is incredibly valuable. So for example in any of the following categories,

- web index

- recommendation systems

- sensor data

- market basket analysis

- online advertising

one has an enormous advantage if they have all the existing data.

A brief introduction to Hadoop

Back before Google had money, they had crappy hardware. They came up with the idea of copying data to multiple servers. They did this physically at the time, but then they automated it. The formal automation of this process was the genesis of GFS.

There are two core components to Hadoop. First is the distributed file system (HDFS), which is based on the Google File System. The data is stored in large files, with block sizes of 64MB to 256MB. As above, the blocks are replicated to multiple nodes in the cluster. The master node notices if a node dies.

Data engineering on Hadoop

Hadoop is written in Java, whereas Google stuff is in C++.

Writing MapReduce in the Java API is not pleasant. Sometimes you have to write lots and lots of MapReduces. However, if you use Hadoop streaming, you can write in Python, R, or other high-level languages. It’s easy and convenient for parallelized jobs.
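To give a feel for what a streaming job looks like in Python: the mapper and reducer are ordinary programs that read lines and write tab-separated key/value lines, and the framework sorts the mapper output by key before the reducer sees it. The sketch below simulates that pipeline locally. The names `run_mapper` and `run_reducer` are made up, and in a real job each would read `sys.stdin` and write `sys.stdout` from its own script.

```python
import io
from itertools import groupby

def run_mapper(lines, out):
    """Mapper: write one 'word<TAB>1' line per word."""
    for line in lines:
        for word in line.split():
            out.write(f"{word}\t1\n")

def run_reducer(sorted_lines, out):
    """Reducer: input arrives sorted by key, so equal keys are adjacent."""
    pairs = (line.rstrip("\n").split("\t") for line in sorted_lines)
    for word, group in groupby(pairs, key=lambda kv: kv[0]):
        total = sum(int(count) for _, count in group)
        out.write(f"{word}\t{total}\n")

# Local simulation of: cat input | mapper | sort | reducer
mapped = io.StringIO()
run_mapper(["red green bird", "blue green red", "red"], mapped)
shuffled = sorted(mapped.getvalue().splitlines(keepends=True))
reduced = io.StringIO()
run_reducer(shuffled, reduced)
print(reduced.getvalue())
```

The printed result is one line per word in sorted order (`bird 1`, `blue 1`, `green 2`, `red 3`, tab-separated).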

Cloudera

Cloudera is like Red Hat for Hadoop. It’s done under the aegis of the Apache Software Foundation. The code is available for free, but Cloudera packages it together, gives away various distributions for free, and waits for people to pay for support and to keep it up and running.

Apache Hive is a data warehousing system on top of Hadoop. It uses an SQL-based query language (which includes some MapReduce-specific extensions), and it implements common join and aggregation patterns. This is nice for people who know databases well and are familiar with stuff like this.

Workflow

- Using Hive, build records that contain everything I know about an entity (say a person) (intensive MapReduce stuff)

- Write Python scripts to process the records over and over again (faster and iterative, also MapReduce)

- Update the records when new data arrives

Note that phase 2 jobs are typically map-only jobs, which makes parallelization easy.

I prefer standard data formats: text is big and takes up space. Thrift, Avro, and protobuf are more compact, binary formats. I also encourage you to use the code and metadata repository GitHub. I don’t keep large data files in git.

Rolling Jubilee is a better idea than the lottery

Yesterday there was a reporter from CBS Morning News looking around for a quirky fun statistician or mathematician to talk about the Powerball lottery, which is worth more than $500 million right now. I thought about doing it and accumulated some cute facts I might want to say on air:

- It costs $2 to play.

- If you took away the grand prize, a ticket is worth 36 cents in expectation (there are 9 ways to win with prizes ranging from $4 to $1 million).

- The chance of winning grand prize is one in about 175,000,000.

- So when the prize goes over $175 million, that’s worth $1 in expectation.

- So if the prize is twice that, at $350 million, that’s worth $2 in expectation.

- Right now the prize is $500 million, so the tickets are worth more than $2 in expectation.

- Even so, the chance of being hit by lightning in a given year is something like one in 1,000,000, so 175 times more likely than winning the lottery.
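The expectation arithmetic in the list above can be written out directly. This is a rough sketch using only the numbers quoted there; it ignores taxes and the possibility of splitting the jackpot with other winners.

```python
ODDS = 175_000_000        # roughly one in 175 million for the grand prize
SMALL_PRIZES_EV = 0.36    # expected value of the nine smaller prizes
TICKET_PRICE = 2.00

def expected_value(jackpot):
    """Expected dollar value of one ticket for a given jackpot."""
    return SMALL_PRIZES_EV + jackpot / ODDS

for jackpot in (175e6, 350e6, 500e6):
    ev = expected_value(jackpot)
    print(f"${jackpot / 1e6:.0f}M jackpot: ${ev:.2f} per ${TICKET_PRICE:.2f} ticket")
```

At $500 million this comes to about $3.22 per ticket before taxes and shared jackpots are taken into account.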

In general, the expected payoff for playing the lottery is well below the price. And keep in mind that if you win, almost half goes to taxes. I am super busy trying to write, so I ended up helping find someone else for the interview: Jared Lander. I hope he has fun.

If you look a bit further into the lottery system, you’ll find some questionable information. For example, lotteries are super regressive: poor people spend more money than rich people on lotteries, and way more if you think of it as a percentage of their income.

One thing that didn’t occur to me yesterday but would have been nice to try, and came to me via my friend Aaron, is to suggest that instead of “investing” their $2 in a lottery, people might consider investing it in the Rolling Jubilee. Here are some reasons:

- The payoff is larger than the investment by construction. You never pay more than $1 for $1 of debt.

- It’s similar to the lottery in that people are anonymously chosen and their debts are removed.

- The taxes on the benefits are nonexistent, at least as we understand the taxcode, because it’s a gift.

It would be interesting to see how the mindset would change if people were spending money to anonymously remove debt from each other rather than to win a jackpot. Not as flashy, perhaps, but maybe more stimulative to the economy. Note: an estimated $50 billion was spent on lotteries in 2010. That’s a lot of debt.

How to evaluate a black box financial system

I’ve been blogging about evaluation methods for modeling, for example here and here, as part of the book I’m writing with Rachel Schutt based on her Columbia Data Science class this semester.

Evaluation methods are important abstractions that allow us to measure models based only on their output.

Using various metrics of success, we can contrast and compare two or more entirely different models. And it means we don’t care about their underlying structure – they could be based on neural nets, logistic regression, or decision trees, but for the sake of measuring the accuracy, or the ranking, or the calibration, the evaluation method just treats them like black boxes.
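As a small illustration of black-box evaluation, the sketch below scores two models using nothing but their predicted probabilities and the true labels. The data is made up, and the Brier score is used here as one rough stand-in for how well-calibrated the probabilities are.

```python
def accuracy(probs, labels, threshold=0.5):
    """Fraction of cases where the thresholded prediction matches the label."""
    hits = sum((p >= threshold) == bool(y) for p, y in zip(probs, labels))
    return hits / len(labels)

def brier_score(probs, labels):
    """Mean squared gap between predicted probability and outcome (lower is better)."""
    return sum((p - y) ** 2 for p, y in zip(probs, labels)) / len(labels)

# Made-up outputs from two unrelated models on the same five cases.
labels = [1, 0, 1, 1, 0]
model_a = [0.9, 0.2, 0.8, 0.6, 0.1]
model_b = [0.6, 0.4, 0.55, 0.45, 0.4]

for name, probs in (("A", model_a), ("B", model_b)):
    print(name, accuracy(probs, labels), round(brier_score(probs, labels), 3))
```

Nothing in either function knows whether the probabilities came from a neural net, a logistic regression, or a decision tree.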

It recently occurred to me that we could generalize this a bit, to systems rather than models. So if we wanted to evaluate the school system, or the political system, or the financial system, we could ignore the underlying details of how they are structured and just look at the output. To be reasonable we have to compare two systems that are both viable; it doesn’t make sense to talk about a current, flawed system relative to perfection, since of course every version of reality looks crappy compared to an ideal.

The devil is in the articulated evaluation metric, of course. So for the school system, we can ask various questions: Do our students know how to read? Do they finish high school? Do they know how to formulate an argument? Have they lost interest in learning? Are they civic-minded citizens? Do they compare well to other students on standardized tests? How expensive is the system?

For the financial system, we might ask things like: Does the average person feel like their money is safe? Does the system add to stability in the larger economy? Does the financial system mitigate risk to the larger economy? Does it put capital resources in the right places? Do fraudulent players inside the system get punished? Are the laws transparent and easy to follow?

The answers to those questions aren’t looking good at all: for example, take note of the recent Congressional report that blames Jon Corzine for MF Global’s collapse, pins him down on illegal and fraudulent activity, and then does absolutely nothing about it. To conserve space I will only use this example but there are hundreds more like this from the last few years.

Suffice it to say, what we currently have is a system where the agents committing fraud are actually glad to be caught because the resulting fines are on the one hand smaller than their profits (and paid by shareholders, not individual actors), and on the other hand are cemented as being so, and set as precedent.

But again, we need to compare it to another system, we can’t just say “hey there are flaws in this system,” because every system has flaws.

I’d like to compare it to a system like ours except where the laws are enforced.

That may sound totally naive, and in a way it is, but then again we once did have laws that were enforced, and the financial system was relatively tame and stable.

And although we can’t go back in a time machine to before Glass-Steagall was revoked and keep “financial innovation” from happening, we can ask our politicians to give regulators the power to simplify the system enough so that something like Glass-Steagall can once again work.

On Reuters talking about Occupy

I was interviewed a couple of weeks ago and it just got posted here:

Systematized racism in online advertising, part 1

There is no regulation of how internet ad models are built. That means that quants can use any information they want, usually historical, to decide what to expect in the future. That includes associating arrests with African-American-sounding names.

In a recent Reuters article, this practice was highlighted:

Instantcheckmate.com, which labels itself the “Internet’s leading authority on background checks,” placed both ads. A statistical analysis of the company’s advertising has found it has disproportionately used ad copy including the word “arrested” for black-identifying names, even when a person has no arrest record.

Luckily, Professor Sweeney, a Harvard University professor of government with a doctorate in computer science, is on the case:

According to preliminary findings of Professor Sweeney’s research, searches of names assigned primarily to black babies, such as Tyrone, Darnell, Ebony and Latisha, generated “arrest” in the instantcheckmate.com ad copy between 75 percent and 96 percent of the time. Names assigned at birth primarily to whites, such as Geoffrey, Brett, Kristen and Anne, led to more neutral copy, with the word “arrest” appearing between zero and 9 percent of the time.

Of course when I say there’s no regulation, that’s an exaggeration. There is some, and if you claim to be giving a credit report, then regulations really do exist. But as for the above, here’s what regulators have to say:

“It’s disturbing,” Julie Brill, an FTC commissioner, said of Instant Checkmate’s advertising. “I don’t know if it’s illegal … It’s something that we’d need to study to see if any enforcement action is needed.”

Let’s be clear: this is just the beginning.

Aunt Pythia’s advice and a request for cool math books

First, my answer to last week’s question which you guys also answered:

Aunt Pythia,

My loving, wonderful, caring boyfriend slurps his food. Not just soup — everything (even cereal!). Should I just deal with it, or say something? I think if I comment on it he’ll be offended, but I find it distracting during our meals together.

Food (Consumption) Critic

——

You guys did well with answering the question, and I’d like to nominate the following for “most likely to actually make the problem go away”, from Richard:

I’d go with blunt but not particularly bothered – halfway through his next bowl of cereal, exclaim “Wow, you really slurp your food, don’t you?! I never noticed that before.”

But then again, who says we want this problem to go away? My firm belief is that every relationship needs to have an unimportant thing that bugs the participants. Sometimes it’s how high the toaster is set, sometimes it’s how the other person stacks the dishes in the dishwasher, but there’s always that thing. And it’s okay: if we didn’t have the thing we’d invent it. In fact having the thing prevents all sorts of other things from becoming incredibly upsetting. My theory anyway.

So my advice to Food Consumption Critic is: don’t do anything! Cherish the slurping! Enjoy something this banal and inconsequential being your worst criticism of this lovely man.

Unless you’re like Liz, also a commenter from last week, who left her husband because of the way he breathed. If it’s driving you that nuts, you might want to go with Richard’s advice.

——

Aunt Pythia,

Dear Aunt Pythia, I want to write to an advice column, but I don’t know whether or not to trust the advice I will receive. What do you recommend?

Perplexed in SoPo

Dear PiSP,

I hear you, and you’re right to worry. Most people only ask things they kind of know the answer to, or to get validation that they’re not a total jerk, or to get permission to do something that’s kind of naughty. If the advice columnist tells them something they disagree with, they ignore it entirely anyway. It’s a total waste of time if you think about it.

However, if your question is super entertaining and kind of sexy, then I suggest you write in ASAP. That’s the very kind of question that columnists know how to answer in deep, meaningful and surprising ways.

Yours,

AP

——

Aunt Pythia,

With global warming and hot summers do you think it’s too early to bring the toga back in style?

John Doe

Dear John,

It’s never too early to wear sheets. Think about it: you get to wear the very same thing you sleep in. It’s like you’re a walking bed.

Auntie

——

Aunt Pythia,

Is it unethical not to tell my dad I’m starting a business? I doubt he’d approve and I’m inclined to wait until it’s successful to tell him about it.

Angsty New Yorker

Dear ANY,

Wait, what kind of business is this? Are we talking hedge fund or sex toy shop?

In either case, I don’t think you need to tell your parents anything about your life if you are older than 18 and don’t want to; it’s a rule of American families. Judging by my kids, this rule actually starts when they’re 11.

Of course it depends on your relationship with your father how easy that will be and what you’d miss out on by being honest, but the fear of his disapproval is, to me, a bad sign: you’re gonna have to be tough as nails to be a business owner, so get started by telling, not asking, your dad. Be prepared for him to object, and if he does, tell him he’ll get used to it with time.

Aunt Pythia

——

Aunt Pythia,

I’m a philosophy grad school dropout turned programmer who hasn’t done math since high school. But I want to learn, partly for professional reasons but mainly out of curiosity. I recently bought *Proofs From the Book* but found that I lacked the requisite mathematical maturity to work through much of it. Where should I start? What should I read? (p.s. Thanks for the entertaining blog!)

Confused in Brooklyn

Readers, this question is for you! I don’t know of too many good basic math books, so Confused in Brooklyn is counting on you. There have actually been lots of people asking similar questions, so you’d be helping them too. If I get enough good suggestions I’ll create a separate reading list page for cool math books on mathbabe. Thanks in advance for your suggestions!

——


It’s Pro-American to be Anti-Christmas

This is a guest post by Becky Jaffe.

I know what you’re thinking: Don’t Christmas and America go together like Santa and smoking?

Why, of course they do! Just ask Saint Nickotine, patron saint of profit. This Lucky Strike advertisement is an early introduction to Santa the corporate shill, the seasonal cash cow whose avuncular mug endorses everything from Coca-Cola to Siri to yes, even competing brands of cigarettes like Pall Mall. Sorry Lucky Strike, Santa’s a bit of a sellout.

Nearly a century after these advertisements were published, the secular trinity of Santa, consumerism and America has all but supplanted the holy trinity the holiday was purportedly created to commemorate. I’ll let Santa the Spokesmodel be the cheerful bearer of bad news:

Christmas and consumerism have been boxed up, gift-wrapped and tied with a red-white-and-blue ribbon. In this guest post I’ll unwrap this package and explain why I, for one, am not buying it.

____________________

Yesterday was Thanksgiving, followed inexorably by Black Friday; one day we’re collectively meditating on gratitude, the next we’re jockeying for position in line to buy a wall-mounted 51” plasma HDTV. Some would argue that’s quintessentially American. As social critic and artist Andy Warhol wryly observed, “Shopping is more American than thinking.”

Such a dour view may accurately describe post WW II America, but not the larger trends nor longer traditions of our nation’s history. Although we may have become profligate of late, we were at the outset a frugal people; consumerism and America need not be inextricably linked in our collective imagination if we take a longer view. Long before there was George Bush telling us the road to recovery was to have faith in the American economy, there was Henry David Thoreau, who spoke to a faith in a simpler economy:

The Simple Living experiment he undertook and chronicled in his classic Walden was guided by values shared in common by many of the communities who sought refuge in the American colonies at the outset of our nation: the Mennonites, the Quakers, and the Shakers. These groups comprise not only a great name for a punk band, but also our country’s temperamental and ethical ancestry. The contemporary relationship between consumerism and Christmas is decidedly un-American, according to our nation’s founders. And what could be more American than the Amish? Or the secular version thereof: The Simplicity Collective.

Being anti-Christmas™ is as uniquely American as Thoreau, who summed up his anti-consumer credo succinctly: “Men have become the tools of their tools.” If he were alive today, I have no doubt that curmudgeonly minimalist would be marching with Occupy Wall Street instead of queuing with the tools on Occupy Mall Street.

Being anti-Christmas™ is as American as Mark Twain, who wrote, “The approach of Christmas brings harassment and dread to many excellent people. They have to buy a cart-load of presents, and they never know what to buy to hit the various tastes; they put in three weeks of hard and anxious work, and when Christmas morning comes they are so dissatisfied with the result, and so disappointed that they want to sit down and cry. Then they give thanks that Christmas comes but once a year.” (From Following the Equator)

Being anti-Christmas™ is as American as “Oklahoma’s favorite son,” Will Rogers, the 1920s social commentator who made the acerbic observation, “Too many people spend money they haven’t earned, to buy things they don’t want, to impress people they don’t like.”

He may have been referring to presents like this, which are just, well, goyish:

Being anti-Christmas is as American as Robert Frost, recipient of four Pulitzer prizes in poetry, who had this to say in a Christmas Circular Letter:

He asked if I would sell my Christmas trees;

My woods—the young fir balsams like a place

Where houses all are churches and have spires.

I hadn’t thought of them as Christmas Trees.

I doubt if I was tempted for a moment

To sell them off their feet to go in cars

And leave the slope behind the house all bare,

Where the sun shines now no warmer than the moon.

I’d hate to have them know it if I was.

Yet more I’d hate to hold my trees except

As others hold theirs or refuse for them,

Beyond the time of profitable growth,

The trial by market everything must come to.

We inherit from these American thinkers a unique intellectual legacy that might make us pause at the commercialism that has come to consume us. To put it in other words:

- John Porcellino’s Thoreau at Walden: $18

- Walt Whitman’s Leaves of Grass: $5.95

- Mary Oliver’s American Primitive: $9.99

- The Life and Letters of John Muir: $12.99

- Transcendentalist Intellectual Legacy: Priceless.

The intellectual and spiritual founders of our country caution us to value our long-term natural resources over short-term consumptive titillation. Unheeding their wisdom, last year on Black Friday American consumers spent $11.4 billion, more than the annual Gross Domestic Product of 73 nations.

And American intellectual legacy aside, isn’t that a good thing? Doesn’t Christmas spending stimulate our stagnant economy and speed our recovery from the recession? If you believe organizations like Made in America, it’s our patriotic duty to spend money over the holidays. The exhortation from their website reads, “If each of us spent just $64 on American made goods during our holiday shopping, the result would be 200,000 new jobs. Now we want to know, are you in?”

That depends once again on whether or not we take the long view. Christmas spending might create a few temporary, low-wage, part-time jobs without benefits of the kind described in Barbara Ehrenreich’s Nickel and Dimed: On (Not) Getting By In America, but it’s not likely to create lasting economic health, especially if we fail to consider the long-term environmental and social costs of our short-term consumer spending sprees. The answer to Made in America’s question depends on the validity of the economic model we use to assess their spurious claim, as Mathbabe has argued time and again in this blog. The logic of infinite growth as an unequivocal net good is the same logic that underlies such flawed economic models as the Gross National Product (GNP) and the Gross Domestic Product (GDP).

These myopic measures fail to take into account the value of the natural resources from which our consumer products are manufactured. In this accounting system, when an old-growth forest is clearcut to make way for a Best Buy parking lot, that’s counted as an unequivocal economic boon since the economic value of the lost trees/habitat is not considered as a debit. Feminist economist and former New Zealand parliamentarian Marilyn Waring explains the idea accessibly in this documentary: Who’s Counting? Marilyn Waring on Sex, Lies, and Global Economics.

If we were to adopt a model that factors in the lost value of nonrenewable natural resources, such as the proposed Green National Product, we might skip the stampede at Walmart and go for a walk in the woods instead to stimulate the economy.

Other critics of these standard models for measuring economic health point out that they overvalue quantity of production and, by failing to take into account such basic measures of economic health as wealth distribution, undervalue quality of life. And the growing gap in income inequality is a trend that we cannot afford to overlook as we consider the best options for economic recovery. According to this New York Times article, “Income inequality has soared to the highest levels since the Great Depression, and the recession has done little to reverse the trend, with the top 1 percent of earners taking 93 percent of the income gains in the first full year of the recovery.”

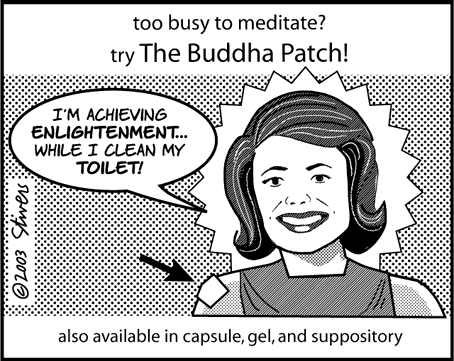

For the majority of Americans who are still struggling to make ends meet, the Black Friday imperative to BUY! means racking up more credit card debt. (As American poet ee cummings quipped, “I’m living so far beyond my income that we may almost be said to be living apart.”) The specter of Christmas spending is particularly ominous this season, during a recession, after a national wave of foreclosures has left Americans with insecure housing, exorbitant rents, and our beleaguered Santa with fewer chimneys to squeeze into.

Some proposed alternatives to the GDP and GNP that factor in income distribution are the Human Progress Index, the Genuine Progress Indicator, and yes, even a proposed Gross National Happiness.

A dose of happiness could be just the antidote to the dread many Americans feel at the prospect of another hectic holiday season. As economist Paul Heyne put it, “The gap in our economy is between what we have and what we think we ought to have – and that is a moral problem, not an economic one.”

____________________

Mind you, I’m not anti-Christmas, just anti-Christmas™. I’ve been referring thus far to the secular rites of the latter, but practicing Christians for whom the former is a meaningful spiritual meditation might equally take offense at its runaway commercialization, which the historical Jesus would decidedly not have endorsed.

I hate to pull the Bible card, but, seriously, what part of Ecclesiastes don’t you understand?

Photo by Becky Jaffe. http://www.beckyjaffe.com

Better is an handful with quietness, than both the hands full with travail and vexation of spirit. – Ecclesiastes 4:6

Just imagine if Buddhism were hijacked by greed in the same fashion.

After all,

Right?

Or is it axial tilt?

Whether you’re a practicing Christian, a Born-Again Pagan celebrating the Winter Solstice (“Mithras is the Reason for the Season”), or a fundamentalist atheist (“You know it’s a myth! This season, celebrate reason.”), we all have reason to be concerned about the corporatization of our cultural rituals.

The meaning of Christmas has gotten lost in translation:

So this year, let’s give Santa a much-needed smoke break, pour him a glass of Kwanzaa Juice, and consider these alternatives for a change:

1. Presence, not presents. Skip the spending binge (and maybe even another questionable Christmas tradition, the drinking binge), and give the gift of time to the people you love. I’m talking luxurious swaths of time: unstructured time, unproductive time, time wasted exquisitely together.

I’m talking about turning off the television in preparation for Screen-Free Week 2013, and going for a slow walk in nature, which can create more positive family connection than the harried shopping trip. And if Richard Louv’s thesis about Nature Deficit Disorder is merited, it may be healthier for your child to take a walk in the woods than to camp out in front of the X-Box you can’t afford anyway.

Whether you’re choosing to spend the holidays with your family of origin or your family of choice, playing a game together is a great way to reconnect. How about kibitzing over this new card game 52 Shades of Greed? It’s quite the conversation starter. And what better soundtrack for a card game than Sweet Honey in the Rock’s musical musings on Greed? Or Tracy Chapman’s Mountains of Things.

Or how about seeing a show together? You can take the whole family to see Reverend Billy and the Church of Stop Shopping at the Highline Ballroom in New York this Sunday, November 25th. He’s on a “mission to save Christmas from the Shopocalypse.” From the makers of the film What Would Jesus Buy?

2. Give a group gift. There is a lot of talk about family values, but how well do we know each other’s values? One way to find out is to pool our giving together and decide as a group who the beneficiaries should be. You might elect to skip Black Friday and Cyber Monday and make a donation instead to Giving Tuesday’s Hurricane Sandy relief efforts. Other organizations worth considering:

3. Think outside the box. If you’re looking for alternatives to the gift-giving economy, how about bartering? Freecycle is a grassroots organization that puts a tourniquet on the flow of luxury goods destined for the landfill by creating local voluntary swap networks.

Or how about giving the gift of debt relief? Check out the Rolling Jubilee, a “bailout for the people by the people.”

4. Give the Buddhist gift: Nothing!

Buy Nothing Do Something is the slogan of an organization proposing an alternative to Black Friday: On Brave Friday, we “choose family over frenzy.” One contributor to the project shared this family tradition: “We have a “Five Hands” gift giving policy. We can exchange items that are HANDmade (by us), HAND-me-down and secondHAND. We can choose to gift a helping HAND (donations to charities). Lastly and my favorite, we can gift a HAND-in-hand, which is a dedication of time spent with one another. (Think date night or a day at the museum as a family.)”

Adbusters magazine sponsors an annual boycott of consumerism in England called Buy Nothing Day.

5. Invent your own holiday. Americans pride ourselves on our penchant for innovation. We amalgamate and synthesize novelty out of eclectic sources. Although we often talk about “traditional values,” we’re on the whole much less tradition-bound than, say, the Serbs, who collectively recall several thousand years of history as relevant to the modern instant. We tend to abandon tradition when it is inconvenient (e.g. marriage), which is perhaps why we harken back to its fantasy status in such a treacly manner. Making stuff up is what we do well as a nation. Isn’t “DIY Christmas” a no-brainer? Happy Christmahannukwanzaka, y’all!

6. Keep it weird. Several of you wrote with these suggestions for Black Friday street theater:

“Someone said go for a hike in a park, not the Wal*Mart parking lot. But why not the Wal*Mart parking lot? With all your hiking gear and everything.”

“I’d love for 5-6 of us to sit at Union Square in front of Macy’s and sit and meditate. Perhaps passer-bys will take a notice and ponder. Anyone in? Friday 2-3 hours.”

Few pranksters have been so elaborate in their anti-corporate antics as the Yes Men. You may get inspired to come up with some political theater of your own after watching the documentary The Yes Men: The True Story of the End of the World Trade Organization.

____________________

However we choose to celebrate the holidays in this relativist pluralistic era, whatever our preferred religious and/or cultural December-based holiday celebration may be, we can disentangle our rituals from obligatory consumerism.

I’m not suggesting this is an easy task. Our consumer habits are wrapped up with our identities as social critic Alain de Botton observes: “We need objects to remind us of the commitments we’ve made. That carpet from Morocco reminds us of the impulsive, freedom-loving side of ourselves we’re in danger of losing touch with. Beautiful furniture gives us something to live up to. All designed objects are propaganda for a way of life.”

This year, let’s skip the propaganda.

We can choose to

Yes, we can.

Happy Solstice, everyone!

(If only I had a Celestron CPC 1100 Telescope with Nikon D700 DSLR adapter to admire the Solstice skies….)

A primer on time wasting

Hello, Aaron here.

Cathy asked me to write a post about wasting time. But I never got around to it.

Just kidding. I’m actually doing it.

Things move fast here in New York City, but where the hell is everyone going anyway?

When I was writing my dissertation, I lived on the west coast and Cathy lived on the east coast. I used to get up around 7 every morning. She was very pregnant (for the first time), and I was very stressed out. We talked on the phone every morning, and we got in the habit of doing the New York Times crossword puzzle. Mind you, this was before it was online – she actually had a newspaper, with ink and everything, and she read me the clues over the phone and we did the puzzle together. It helped get me going in the morning, and it warmed me up for dissertation writing.

After a long time of not doing crossword puzzles, I’ve taken it up again in recent years. Sometimes Cathy and I do it together, online, using the app where two people can solve it at the same time. [NYT, if you’re listening, the new version is much worse than the old! Gotta fix up the interfacing.] Sometimes I do it myself. Sometimes, like today, it’s Thanksgiving, and it’s a real treat to do the puzzle with Cathy in person. But one way or another, I do it just about every day.

At one point, early in the current phase of my habit, I got stuck and I wanted to cheat. I looked for the answers online, since I couldn’t just wait until the next day. I came across this blog, which I call rexword for short.

I got addicted. As happens so frequently with the internets, I discovered an entire community of people who are both really into something mildly obscure (read: nerdy) and also actually insightful, funny, and interesting (read: nerdy).

I’ve learned a lot about puzzling from rexword. I like to tell my students they have to learn how to get “behind” what I write on the chalkboard, to see it before it’s being written or as it’s being written as if they were doing it themselves, the way a musician hears music or the way anyone does anything at an expert level. Rex’s blog took me behind the crossword puzzle for the first time. I’m nowhere near as good at it as he is, or as many of his readers seem to be, but seeing it from the other side is a lot of fun. I appreciate puzzles in a completely different way now: I no longer just try to complete it (which is still a challenge a lot of the time, e.g. most Saturdays), but I look it over and imagine how it might have been different or better or whatever. Then, especially if I think there’s something especially notable about it, I go to the blog expecting some amusing discussion.

Usually I find it. In fact, usually I find amusing discussion and insights about way more things than I would ever notice myself about the puzzle. I also find hilarious things like this:

We just did last Sunday’s puzzle, and at the end we noticed that the completed puzzle contained all of the following: g-spot, tits, ass, cock. Once upon a time I might not have thought much of this (or noticed) but now my reaction was, “I bet there’s something funny about this on the blog.” I was sure this would be amply noted and wittily de- and re-constructed. In fact, it barely got a mention, although predictably, several commenters picked up the slack.

Anyway, I’ve got this particular addiction under control – I no longer read the blog every day, but as I said, when there’s something notable or funny I usually check it out and sometimes comment myself, if no one else seems to have noticed whatever I found.

What is the point of all this? In case you forgot, Cathy asked me to write about wasting time. I think she made this request because of the relish with which I tread the fine line between being super nerdy about something and just wasting time (don’t get me started about the BCS….).

Today, I am especially thankful to be alive and in such a luxurious condition that I can waste time doing crossword puzzles, and then reading blogs about doing crossword puzzles, and then writing blogs about reading blogs about doing crossword puzzles.

Happy Thanksgiving everyone.

Black Friday resistance plan

The hype around Black Friday is building. It’s reaching its annual fever pitch. Let’s compare it to something much less important to Americans, like “global warming”, shall we? Here we go:

Note how, as time passes, we become more interested in Black Friday and less interested in global warming.

How do you resist, if not the day itself, the next few weeks of crazy consumerism that is relentlessly plied? Lots of great ideas were posted here, when I first wrote about this. There will be more coming soon.

In the meantime, here’s one suggestion I have, which I use all the time to avoid over-buying stuff and which this Jane Brody article on hoarding reminded me of.

Mathbabe’s Black Friday Resistance plan, step 1:

Go through your closets and just look at all the stuff you already have. Go through your kids’ closets and shelves and books and toychests to catalog their possessions. Count how many appliances you own, in your kitchen alone.

Be amazed that anyone could ever own that much stuff, and think about what we really need to survive, and indeed, what we really need to be content.

In case you need more, here’s an optional step 2. Think about the Little House on the Prairie series, and how Laura made a doll out of scraps of cloth left over from the dresses, and how once a year when Pa sold his crop they’d have penny candy and it would be a huge treat. For Christmas one year, Laura got an orange. Compared to that, we binge on consumerism on a daily basis and we’ve become inured to its effects.

Now, I’m not a huge fan of going back to those roots entirely. After all, during The Long Winter, as I’m sure you recall and which was very closely based on her real experience, Mary went blind from hunger and Carrie was permanently affected. If it hadn’t been for Almonzo coming to their rescue with food for the whole town, many might have died. Now that was a man.

I think we need a bit more insurance than that. Even so, we might have all the material possessions we need for now.

Columbia Data Science course, week 12: Predictive modeling, data leakage, model evaluation

This week’s guest lecturer in Rachel Schutt’s Columbia Data Science class was Claudia Perlich. Claudia has been the Chief Scientist at m6d for 3 years. Before that she was in a data analytics group at the IBM center that developed Watson, the computer that won Jeopardy!, although she didn’t work on that project. Claudia got her Ph.D. in information systems at NYU and now teaches a class to business students in data science, although mostly she addresses how to assess data science work and how to manage data scientists. Claudia also holds a masters in Computer Science.

Claudia is a famously successful data mining competition winner. She won the KDD Cup in 2003, 2007, 2008, and 2009, the ILP Challenge in 2005, the INFORMS Challenge in 2008, and the Kaggle HIV competition in 2010.

She’s also been a data mining competition organizer, first for the INFORMS Challenge in 2009 and then for the Heritage Health Prize in 2011. Claudia claims to be retired from competition.

Claudia’s advice to young people: pick your advisor first, then choose the topic. It’s important to have great chemistry with your advisor, and don’t underestimate the importance.

Background

Here’s what Claudia historically does with her time:

- predictive modeling

- data mining competitions

- publications in conferences like KDD and journals

- talks

- patents

- teaching

- digging around data (her favorite part)

Claudia likes to understand something about the world by looking directly at the data.

Here’s Claudia’s skill set:

- plenty of experience doing data stuff (15 years)

- data intuition (for which one needs to get to the bottom of the data generating process)

- dedication to the evaluation (one needs to cultivate a good sense of smell)

- model intuition (we use models to diagnose data)

Claudia also addressed being a woman. She says it works well in the data science field, where her intuition is useful and is used. She claims her nose is so well developed by now that she can smell it when something is wrong. This is not the same thing as being able to prove something algorithmically. Also, people typically remember her because she’s a woman, even when she doesn’t remember them. It has worked in her favor, she says, and she’s happy to admit this. But then again, she is where she is because she’s good.

Someone in the class asked if papers submitted for journals and/or conferences are blind to gender. Claudia responded that it was, for some time, typically double-blind but now it’s more likely to be one-sided. And anyway there was a cool analysis that showed you can guess who wrote a paper with 80% accuracy just by knowing the citations. So making things blind doesn’t really help. More recently the names are included, and hopefully this doesn’t make things too biased. Claudia admits to being slightly biased towards institutions – certain institutions prepare better work.

Skills and daily life of a Chief Data Scientist

Claudia’s primary skills are as follows:

- Data manipulation: unix (sed, awk, etc), Perl, SQL

- Modeling: various methods (logistic regression, nearest neighbors, k-nearest neighbors, etc)

- Setting things up

She mentions that the methods don’t matter as much as how you’ve set it up, and how you’ve translated it into something where you can solve a question.

More recently, she’s been told that at work she spends:

- 40% of time as “contributor”: doing stuff directly with data

- 40% of time as “ambassador”: writing stuff, giving talks, mostly external communication to represent m6d, and

- 20% of time in “leadership” of her data group

At IBM it was much more focused in the first category. Even so, she has a flexible schedule at m6d and is treated well.

The goals of the audience

She asked the class, why are you here? Do you want to:

- become a data scientist? (good career choice!)

- work with a data scientist?

- work for a data scientist?

- manage a data scientist?

Most people were trying their hands at the first, but we had a few in each category.

She mentioned that it matters because the way she’d talk to people wanting to become a data scientist would be different from the way she’d talk to someone who wants to manage them. Her NYU class is more like how to manage one.

So, for example, you need to be able to evaluate their work. It’s one thing to check a bubble sort algorithm or check whether a SQL server is working, but checking a model which purports to give the probability of people converting is a different kettle of fish.

For example, try to answer this: how much better can that model get if you spend another week on it? Let’s face it, quality control is hard for yourself as a data miner, so it’s definitely hard for other people. There’s no easy answer.

There’s an old joke that comes to mind: What’s the difference between a scientist and a consultant? The scientist asks, how long does it take to get this right? whereas the consultant asks, how right can I get this in a week?

Insights into data

A student asks, how do you turn a data analysis into insights?

Claudia: this is a constant point of contention. My attitude is: I like to understand something, but what I like to understand isn’t what you’d consider an insight. My message may be, hey you’ve replaced every “a” by a “0”, or, you need to change the way you collect your data. In terms of useful insight, Ori’s lecture from last week, when he talked about causality, is as close as you get.

For example, decision trees you interpret, and people like them because they’re easy to interpret, but I’d ask, why does it look like it does? A slightly different data set would give you a different tree and you’d get a different conclusion. This is the illusion of understanding. I tend to be careful with delivering strong insights in that sense.

For more in this vein, Claudia suggests we look at Monica Rogati‘s talk “Lies, damn lies, and the data scientist.”

Data mining competitions

Claudia drew a distinction between different types of data mining competitions.

On the one hand you have the “sterile” kind, where you’re given a clean, prepared data matrix, a standard error measure, and where the features are often anonymized. This is a pure machine learning problem.

Examples of this first kind are: KDD Cup 2009 and 2011 (Netflix). In such competitions, your approach would emphasize algorithms and computation. The winner would probably have heavy machines and huge modeling ensembles.

On the other hand, you have the “real world” kind of data mining competition, where you’re handed raw data, which is often in lots of different tables and not easily joined, where you set up the model yourself and come up with task-specific evaluations. This kind of competition simulates real life more.

Examples of this second kind are: KDD Cup 2007, 2008, and 2010. If you’re competing in this kind of competition your approach would involve understanding the domain, analyzing the data, and building the model. The winner might be the person who best understands how to tailor the model to the actual question.

Claudia prefers the second kind, because it’s closer to what you do in real life. In particular, the same things go right or go wrong.

How to be a good modeler

Claudia claims that data and domain understanding is the single most important skill you need as a data scientist. At the same time, this can’t really be taught – it can only be cultivated.

A few lessons learned about data mining competitions that Claudia thinks are overlooked in academics:

- Leakage: the contestant’s best friend and the organizer’s/practitioner’s worst nightmare. There’s always something wrong with the data, and Claudia has made an artform of figuring out how the people preparing the competition got lazy or sloppy with the data.

- Adapting learning to real-life performance measures beyond standard measures like MSE, error rate, or AUC (profit?)

- Feature construction/transformation: real data is rarely flat (i.e. given to you in a beautiful matrix) and finding good, practical solutions for this problem remains a challenge.

Leakage

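Leakage refers to information that helps you predict the target in a way that isn’t fair, typically because it wouldn’t be available at the moment you actually need to make the prediction. It’s a huge problem in modeling, and not just for competitions. Oftentimes it’s an artifact of reversing cause and effect.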

Example 1: There was a competition where you needed to predict whether the S&P would go up or go down. The winning entry had an AUC (area under the ROC curve) of 0.999 out of 1. Since stock markets are pretty close to random, either someone’s very rich or there’s something wrong. There’s something wrong.

In the good old days you could win competitions this way, by finding the leakage.
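For anyone who hasn’t met AUC before: one way to read it is as the probability that a randomly chosen positive case gets a higher score than a randomly chosen negative one, so 0.5 is coin-flipping and 1.0 is perfect. Here’s a minimal sketch of that reading in plain Python. The function name `auc` and the toy labels and scores are made up for illustration; they are not from the competition.

```python
def auc(labels, scores):
    """Area under the ROC curve, computed by pairwise comparison.

    Returns the fraction of (positive, negative) pairs in which the
    positive example has the higher score; ties count as half a win.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy data (hypothetical): 1 = market went up, 0 = market went down.
labels = [1, 0, 1, 0]
perfect = auc(labels, [0.9, 0.1, 0.8, 0.2])    # every "up" outranks every "down"
clueless = auc(labels, [0.5, 0.5, 0.5, 0.5])   # all ties, no information
```

The pairwise loop is quadratic, which is fine for a sanity check but not for a big dataset.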

Example 2: Amazon case study: big spenders. The target of this competition was to predict, from past purchases, which customers would spend a lot of money. The data consisted of transaction data in different categories. But a winning model identified that “Free Shipping = True” was an excellent predictor.

What happened here? The point is that free shipping is an effect of big spending. But it’s not a good way to model big spending, because in particular it doesn’t work for new customers or for the future. Note: timestamps are weak here. The data that included “Free Shipping = True” was simultaneous with the sale, which is a no-no. We need to only use data from beforehand to predict the future.
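The “only use data from beforehand” rule can be sketched as a timestamp cutoff when you build features. This is a toy illustration, not how the competition data was actually laid out: the event log, the field names, and the helper `features_as_of` are all hypothetical.

```python
from datetime import datetime

# Hypothetical event log for one customer: (timestamp, field, value).
events = [
    (datetime(2012, 11, 1, 10, 0), "category_books", 25.0),
    (datetime(2012, 11, 20, 9, 30), "category_toys", 40.0),
    # Recorded at the same moment as the sale we want to predict:
    (datetime(2012, 11, 23, 14, 0), "free_shipping", 1.0),
]


def features_as_of(events, cutoff):
    """Sum each field using only events strictly before `cutoff`."""
    feats = {}
    for ts, field, value in events:
        if ts < cutoff:
            feats[field] = feats.get(field, 0.0) + value
    return feats


# The sale being predicted happens at this time.
sale_time = datetime(2012, 11, 23, 14, 0)
safe = features_as_of(events, sale_time)
```

With the strict `<` comparison, anything stamped at the moment of the sale, like the free shipping flag here, never makes it into the feature set. Of course this only works if the timestamps are trustworthy, which is exactly what was weak in this example.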

Example 3: Again an online retailer, this time the target is predicting customers who buy jewelry. The data consists of transactions for different categories. A very successful model simply noted that sum(revenue) = 0 predicts jewelry customers very well.

What happened here? The people preparing this data removed jewelry purchases, but only included people who bought something in the first place. So people who had sum(revenue) = 0 were people who only bought jewelry. The fact that you only got into the dataset if you bought something is weird: in particular, you wouldn’t be able to use this on customers before they finished their purchase. So the model wasn’t being trained on the right data to make the model useful. This is a sampling problem, and it’s common.
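To see how that preparation step manufactures the leak, here’s a small sketch that mimics it on made-up data. The customers, the amounts, and the function `prepare` are hypothetical stand-ins for whatever the organizers really did.

```python
# Hypothetical raw transactions: customer -> list of (category, revenue).
# Everyone here bought something, which mirrors the sampling rule.
raw = {
    "alice": [("jewelry", 300.0)],
    "bob": [("books", 20.0), ("jewelry", 150.0)],
    "carol": [("books", 35.0)],
    "dave": [("toys", 15.0), ("books", 10.0)],
}


def prepare(raw):
    """Mimic the preparer: hide jewelry purchases but keep every buyer."""
    rows = []
    for customer, purchases in raw.items():
        bought_jewelry = any(cat == "jewelry" for cat, _ in purchases)
        visible = sum(rev for cat, rev in purchases if cat != "jewelry")
        rows.append({
            "customer": customer,
            "sum_revenue": visible,
            "target": bought_jewelry,
        })
    return rows


rows = prepare(raw)
zero_revenue = [r for r in rows if r["sum_revenue"] == 0]
```

In this toy version the only way to end up with zero visible revenue is to have bought jewelry and nothing else, so every row in `zero_revenue` has `target` set to `True`. Jewelry buyers who also bought other things, like bob, are not caught by the rule.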

Example 4: This happened at IBM. The target was to predict companies who would be willing to buy “websphere” solutions. The data was transaction data + crawled potential company websites. The winning model showed that if the term “websphere” appeared on the company’s website, then they were great candidates for the product.

What happened? You can’t crawl the historical web, just today’s web.

Thought experiment

You’re trying to study who has breast cancer. The patient ID, which seemed innocent, actually has predictive power. What happened?

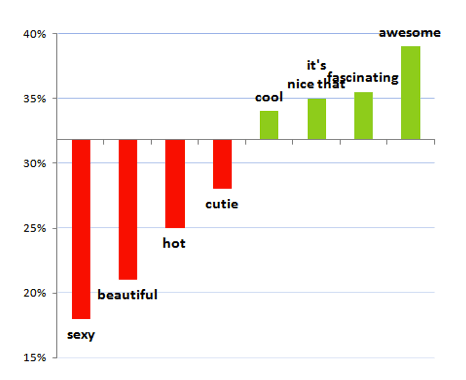

In the above image, red means cancerous, green means not. It’s plotted by patient ID. We see three or four distinct buckets of patient identifiers. The ID is very predictive, depending on the bucket. This is probably a consequence of using multiple databases, some of which correspond to sicker patients.
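A quick way to check for this kind of thing yourself is to bucket the IDs and compare the cancer rate in each bucket. The sketch below uses invented IDs and labels, and the bucket size is an assumption chosen to match the invented data, not anything from the actual study.

```python
# Hypothetical (patient_id, has_cancer) pairs; the two ID ranges stand in
# for the separate source databases suggested by the plot.
patients = [
    (12, 0), (40, 0), (77, 0), (95, 1),
    (100_010, 1), (100_250, 1), (100_400, 0), (100_999, 1),
]


def rate_by_bucket(patients, bucket_size):
    """Cancer rate within each block of `bucket_size` consecutive IDs."""
    counts = {}
    for pid, label in patients:
        bucket = pid // bucket_size
        n, k = counts.get(bucket, (0, 0))
        counts[bucket] = (n + 1, k + label)
    return {bucket: k / n for bucket, (n, k) in counts.items()}


rates = rate_by_bucket(patients, bucket_size=100_000)
```

If the rates differ a lot between buckets, as they do in this made-up data, the identifier is carrying information about where the patient came from rather than anything about the patient.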

A student suggests: for the purposes of the contest they should have renumbered the patients and randomized.

Claudia: would that solve the problem? There could be other things in common as well.

A student remarks: The important issue could be to see the extent to which we can figure out which dataset a given patient came from based on things besides their ID.

Claudia: Think about this: what do we want these models for in the first place? How well can you predict cancer?

Given a new patient, what would you do? If the new patient is in a fifth bin in terms of patient ID, then obviously don’t use the identifier model. But if it’s still in this scheme, then maybe that really is the best approach.

This discussion brings us back to the fundamental problem: we need to know what the purpose of the model is and how it is going to be used in order to decide how to build it and whether it’s working.

Pneumonia

During an INFORMS competition on pneumonia predictions in hospital records, where the goal was to predict whether a patient has pneumonia, a logistic regression which included the number of diagnosis codes as a numeric feature (AUC of 0.80) didn’t do as well as the one which included it as a categorical feature (0.90). What’s going on?

This had to do with how the person prepared the data for the competition:

The diagnosis code for pneumonia was 486. So the preparer removed that (and replaced it by a “-1”) if it showed up in the record (rows are different patients, columns are different diagnoses, there are max 4 diagnoses, “-1” means there’s nothing for that entry).

Moreover, to avoid telling holes in the data, the preparer moved the other diagnoses to the left if necessary, so that only “-1”s were on the right.

There are two problems with this:

- If the row has only “-1”s, then you know it started out with only pneumonia, and

- If the row has no “-1”s, you know there’s no pneumonia (unless there are actually 5 diagnoses, but that’s less common).

This was enough information to win the competition.
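To see the leak concretely, here is a small sketch that mimics the preparation step described above. The function names and the non-pneumonia codes in the sample records are placeholders chosen for illustration; only the code 486, the “-1” filler, and the maximum of 4 diagnoses come from the description.

```python
PNEUMONIA = 486
MISSING = -1

def prepare(record, width=4):
    """Mimic the preparer: drop code 486, shift the rest left, pad with -1."""
    kept = [code for code in record if code not in (PNEUMONIA, MISSING)]
    return kept + [MISSING] * (width - len(kept))

def count_missing(row):
    """Number of -1 entries in a prepared row."""
    return sum(1 for code in row if code == MISSING)

# Hypothetical raw records, one patient per row.
raw = [
    [486, -1, -1, -1],      # only pneumonia
    [401, 250, 428, 272],   # four diagnoses, no pneumonia
    [401, 486, -1, -1],     # pneumonia plus one other diagnosis
    [250, -1, -1, -1],      # one diagnosis, no pneumonia
]
has_pneumonia = [PNEUMONIA in r for r in raw]
prepared = [prepare(r) for r in raw]

for row, label in zip(prepared, has_pneumonia):
    print(row, "missing:", count_missing(row), "pneumonia:", label)
```

A row of all “-1”s can only have come from a pneumonia-only patient, and a row with no “-1”s had no room for a removed code. The count of “-1”s is exactly what a categorical feature lets the model pick up value by value, which is presumably why it beat the numeric version.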

Note: winning a competition on leakage is easier than building good models. But even if you don’t explicitly understand and game the leakage, your model will do it for you. Either way, leakage is a huge problem.

How to avoid leakage

Claudia’s advice to avoid this kind of problem:

- You need a strict temporal cutoff: remove all information that was not already available just prior to the event of interest (patient admission).

- There has to be a timestamp on every entry and you need to keep it

- Removing columns asks for trouble

- Removing rows can introduce inconsistencies with other tables, also causing trouble

- The best practice is to start from scratch with clean, raw data after careful consideration

- You need to know how the data was created! I only work with data I pulled and prepared myself (or maybe Ori).
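The temporal cutoff in the first two points can be sketched as a simple filter. This is only an illustration under assumed names: the entry layout, field names, and dates are hypothetical, and a real pipeline would apply the same rule when pulling the raw data.

```python
from datetime import datetime

def features_before(entries, event_time):
    """Keep only entries recorded strictly before the event of interest."""
    return [e for e in entries if e["timestamp"] < event_time]

# Hypothetical timestamped entries for one patient.
entries = [
    {"timestamp": datetime(2012, 10, 1, 9, 0), "field": "blood_pressure", "value": 140},
    {"timestamp": datetime(2012, 10, 3, 14, 0), "field": "admission_diagnosis", "value": "486"},
    {"timestamp": datetime(2012, 10, 2, 8, 30), "field": "temperature", "value": 38.5},
]

admission = datetime(2012, 10, 3, 12, 0)  # the event we want to predict
usable = features_before(entries, admission)

print([e["field"] for e in usable])
```

The filter only works if every entry carries a timestamp, which is why dropping timestamps during preparation is so costly.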

Evaluations

How do I know that my model is any good?

With powerful algorithms searching for patterns, there is a serious danger of overfitting. It’s a difficult concept, but the general idea is that “if you look hard enough you’ll find something” even if it does not generalize beyond the particular training data.

To avoid overfitting, we cross-validate and we cut down on the complexity of the model to begin with. Here’s a standard picture (although keep in mind we generally work in high dimensional space and don’t have a pretty picture to look at):

The picture on the left is underfit, in the middle is good, and on the right is overfit.

The model you use matters when it concerns overfitting:

So for the above example, unpruned decision trees overfit the most. This is a well-known problem with unpruned decision trees, which is why people use pruned decision trees.

Accuracy: meh

Claudia dismisses accuracy as a bad evaluation method. What’s wrong with accuracy? It’s obviously inappropriate for regression, but even for classification, if the vast majority of binary outcomes are 1, then a stupid model can be accurate but not good (it always guesses “1”), and a better model might have lower accuracy.
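A tiny sketch of that point, with made-up labels: 95 of 100 outcomes are 1, and the “model” always guesses 1.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

y_true = [1] * 95 + [0] * 5   # hypothetical, heavily imbalanced outcomes
always_one = [1] * 100        # the stupid model

zeros_caught = sum(1 for t, p in zip(y_true, always_one) if t == 0 and p == 0)

print(accuracy(y_true, always_one), zeros_caught)
```

The accuracy looks great, yet the model never identifies a single 0, which is usually the case you care about.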

Probabilities matter, not 0’s and 1’s.

Nobody makes decisions on binary outcomes. I want to know the probability I have breast cancer, I don’t want to be told yes or no. It’s much more information. I care about probabilities.

How to evaluate a probability model

We separately evaluate the ranking and the calibration. To evaluate the ranking, we use the ROC curve and calculate the area under it, which typically ranges from 0.5 to 1.0. This is independent of scaling and calibration. Here’s an example of how to draw an ROC curve:
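In code, the area under the ROC curve can be sketched without drawing anything. It equals the fraction of (positive, negative) pairs that the model ranks correctly, with ties counting as half. The labels and scores below are invented for illustration.

```python
def auc(y_true, scores):
    """Area under the ROC curve via pairwise comparison of positives and negatives."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))

y = [1, 1, 0, 1, 0, 0]
s = [0.9, 0.8, 0.7, 0.6, 0.3, 0.2]

print(auc(y, s))                    # 8 of the 9 pairs are ordered correctly
print(auc(y, [x ** 2 for x in s]))  # same ranking, so the same value
```

Squaring the scores changes every predicted value but not their order, so the area is unchanged. That is what independence from scaling and calibration means here. The pairwise version is quadratic in the data size, so it is only meant for small examples.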

Sometimes to measure rankings, people draw the so-called lift curve:

The key here is that the lift is calculated with respect to a baseline. You draw it at a given point, say 10%, by imagining that 10% of people are shown ads, and seeing how many people click versus if you randomly showed 10% of people ads. A lift of 3 means it’s 3 times better.
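As a rough sketch, lift at a given fraction can be computed by comparing the click rate among the top-scored people to the overall click rate, which is what random targeting would give you on average. The data below is made up so that the answer comes out to 3.

```python
def lift_at(y_true, scores, fraction):
    """Click rate in the top `fraction` by score, divided by the overall click rate."""
    n = len(y_true)
    n_top = max(1, int(round(fraction * n)))
    ranked = sorted(zip(scores, y_true), key=lambda pair: pair[0], reverse=True)
    top_hits = sum(y for _, y in ranked[:n_top])
    total_hits = sum(y_true)
    # (top_hits / n_top) / (total_hits / n), written to avoid rounding noise
    return (top_hits * n) / (n_top * total_hits)

# Hypothetical: 50 people, 10 clickers, 3 of them among the 5 highest scores.
y = [1, 1, 0, 1, 0] + [1] * 7 + [0] * 38
scores = [(50 - i) / 50 for i in range(50)]

print(lift_at(y, scores, 0.10))
```

At 100% the lift is always 1, since showing ads to everyone is the same as the baseline.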

How do you measure calibration? Are the probabilities accurate? If the model says probability of 0.57 that I have cancer, how do I know if it’s really 0.57? We can’t measure this directly. We can only bucket the predictions and then compare, in aggregate, the predictions in a given bucket (say 0.50-0.55) to the actual results for that bucket.
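The bucketing can be sketched as follows. The choice of ten equal-width buckets and the toy predictions are assumptions for illustration, not a prescribed recipe.

```python
def calibration_table(y_true, probs, n_bins=10):
    """For each non-empty bucket: (mean predicted probability, observed rate, count)."""
    bins = [[] for _ in range(n_bins)]
    for y, p in zip(y_true, probs):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes in the last bucket
        bins[idx].append((p, y))
    table = []
    for members in bins:
        if members:
            mean_pred = sum(p for p, _ in members) / len(members)
            observed = sum(y for _, y in members) / len(members)
            table.append((mean_pred, observed, len(members)))
    return table

# Hypothetical predictions: ten people at 0.1 and ten at 0.9.
probs = [0.1] * 10 + [0.9] * 10
y = [1] + [0] * 9 + [1] * 9 + [0]

for mean_pred, observed, count in calibration_table(y, probs):
    print(round(mean_pred, 2), round(observed, 2), count)
```

Plotting mean prediction against observed rate for each bucket gives the calibration picture. In this toy case the two agree, so the points would sit on the diagonal.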

For example, here’s what you get when your model is an unpruned decision tree, where the blue diamonds are buckets:

A good model would show buckets right along the x=y curve, but here we’re seeing that the predictions were much more extreme than the actual probabilities. Why does this pattern happen for decision trees?

Claudia says that this is because trees optimize purity: they seek out pockets that have only positives or only negatives. Therefore their predictions are more extreme than reality. This is generally true of decision trees: they do not generally perform well with respect to calibration.

Logistic regression looks better when you test calibration, which is typical:

Takeaways:

- Accuracy is almost never the right evaluation metric.

- Probabilities, not binary outcomes.

- Separate ranking from calibration.

- Ranking you can measure with nice pictures: ROC, lift

- Calibration is measured indirectly through binning.

- Different models are better than others when it comes to calibration.

- Calibration is sensitive to outliers.

- Measure what you want to be good at.

- Have a good baseline.

Choosing an algorithm

This is not a trivial question and in particular small tests may steer you wrong, because as you increase the sample size the best algorithm might vary: often decision trees perform very well but only if there’s enough data.

In general you need to choose your algorithm depending on the size and nature of your dataset, and you need to choose your evaluation method based partly on your data and partly on what you wish to be good at. The sum of squared errors is the maximum likelihood loss function if your data can be assumed to be normal, but if you want to estimate the median, then use absolute errors. If you want to estimate a quantile, then minimize the weighted absolute error.
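A quick numerical sketch of why the loss matters: fit a single constant to some skewed data under each loss and see which summary you get back. The data, the grid of candidate constants, and the 0.9 quantile are arbitrary choices for illustration. The weighted absolute error is written in its usual form, often called the pinball loss.

```python
def squared_loss(c, data):
    return sum((x - c) ** 2 for x in data)

def absolute_loss(c, data):
    return sum(abs(x - c) for x in data)

def weighted_absolute_loss(c, data, q):
    """Pinball loss: under-predictions cost q, over-predictions cost (1 - q)."""
    return sum(q * (x - c) if x >= c else (1 - q) * (c - x) for x in data)

data = [1, 2, 3, 4, 100]                     # skewed: mean 22, median 3
candidates = [i / 10 for i in range(1001)]   # 0.0, 0.1, ..., 100.0

best_squared = min(candidates, key=lambda c: squared_loss(c, data))
best_absolute = min(candidates, key=lambda c: absolute_loss(c, data))
best_quantile = min(candidates, key=lambda c: weighted_absolute_loss(c, data, 0.9))

print(best_squared, best_absolute, best_quantile)
```

Squared error recovers the mean, absolute error the median, and the weighted version a high quantile, all from the same five numbers. Choosing the loss is choosing what you want to be good at.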

We worked on predicting the number of ratings a movie will get in the next year, and we assumed a Poisson distribution. In this case our evaluation method doesn’t involve minimizing the sum of squared errors, but rather something else which we found in the literature specific to the Poisson distribution, which depends on the single parameter λ:

Charity direct mail campaign

Let’s put some of this together.

Say we want to raise money for a charity. If we send a letter to every person in the mailing list we raise about $9000. We’d like to save money and only send letters to people who are likely to give; only about 5% of people generally give. How can we do that?

If we use a (somewhat pruned, as is standard) decision tree, we get $0 profit: it never finds a leaf with majority positives.

If we use a neural network we still make only $7500, even if we only send a letter in the case where we expect the return to be higher than the cost.

This looks unworkable. But if your model is better, it’s not. A person makes two decisions here. First, they decide whether or not to give, then they decide how much to give. Let’s model those two decisions separately, using:

Note we need the first model to be well-calibrated because we really care about the number, not just the ranking. So we will try logistic regression for the first half. For the second part, we train only on the special examples where there are donations.

Altogether this decomposed model makes a profit of $15,000. The decomposition made it easier for the model to pick up the signals. Note that with infinite data, all would have been good, and we wouldn’t have needed to decompose. But you work with what you got.

Moreover, you are multiplying errors above, which could be a problem if you have a reason to believe that those errors are correlated.
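The decision rule implied by the decomposition can be sketched like this: multiply the probability of giving by the expected amount given that they give, and mail only when that product beats the cost of the letter. All numbers, including the cost per letter, are hypothetical; in practice the two inputs would come from the two fitted models.

```python
def expected_donation(p_give, amount_if_give):
    """P(give) times the expected amount, conditional on giving."""
    return p_give * amount_if_give

def should_mail(p_give, amount_if_give, cost):
    return expected_donation(p_give, amount_if_give) > cost

COST_PER_LETTER = 0.75  # hypothetical

# Hypothetical outputs of the two models for three people.
people = [
    {"name": "A", "p_give": 0.05, "amount_if_give": 10.0},
    {"name": "B", "p_give": 0.02, "amount_if_give": 100.0},
    {"name": "C", "p_give": 0.20, "amount_if_give": 2.0},
]

mailed = [
    p["name"]
    for p in people
    if should_mail(p["p_give"], p["amount_if_give"], COST_PER_LETTER)
]
print(mailed)
```

Person C is the most likely to give but isn’t worth a letter, while person B is the least likely and is. A ranking of who gives would get this wrong, which is why the probability itself has to be well-calibrated.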

Parting thoughts

We are not meant to understand data. Data are outside of our sensory systems and there are very few people who have a near-sensory connection to numbers. We are instead meant to understand language.

We are not meant to understand uncertainty: we have all kinds of well-documented biases that prevent this from happening.

Modeling people in the future is intrinsically harder than figuring out how to label things that have already happened.

Even so we do our best, and this is through careful data generation, careful consideration of what our problem is, making sure we model it with data close to how it will be used, making sure we are optimizing to what we actually desire, and doing our homework in learning which algorithms fit which tasks.

Whither the fake clicks?

My friend Ori explained to me last week about where all those fake clicks are coming from and going to and why. He blogged about it on m6d’s website.

As usual, it’s all about incentives. They’re gaming the online advertising model for profit.

To understand the scam, the first thing to know is that advertisers bid for placement on websites, and they bid higher if they think high quality people will see their ad and if they think a given website is well-connected in the larger web.

Say you want that advertising money. You set up a website for the express purpose of selling ads to the people who come to your website.

First, if your website gets little or no traffic, nobody is willing to bid up that advertising. No problem, just invent robots that act like people clicking.

Next, this still wouldn’t work if your website seems unconnected to the larger web. So what these guys have done is to create hundreds if not thousands of websites, which just constantly shovel fake people around their own network from place to place. That creates the impression, using certain calculations of centrality, that these are very well connected websites.

Finally, you might ask how the bad guys convince advertisers that these robots are “high quality” clicks. Here they rely on the fact that advertisers use different definitions of quality.

Whereas you might only want to count people as high quality if they actually buy your product, it’s often hard to know if that has happened (especially if it’s a store-bought item) so proxies are used instead. Often it’s as simple as whether the online user visits the website of the product, which of course can be done by robots instead.

So there it is, an entire phantom web set up just to game the advertisers’ bid system and collect ad money that should by all rights not exist.

Two comments:

- First, I’m not sure this is illegal. Which is not to say it’s unavoidable, because it’s not so hard to track if you’re fighting against it. The beginning of a very large war.

- Second, even if there weren’t this free-for-the-gaming advertiser money out there, there’d still be another group of people incentivized to create fake clicks. Namely, the advertisers give out bonuses to their campaign-level people based on click-through rates. So these campaign managers have a natural incentive to artificially inflate the click-through rates (which would work against their companies’ best interest to be sure). I’m not saying that those people are the architects of the fake clicks, just that they have incentive to be. In particular, they have no incentive to fix this problem.

Support Naked Capitalism

Crossposted on Naked Capitalism.

Being a quantitative, experiment-minded person, I’ve decided to go about helping Yves Smith raise funds for Naked Capitalism using the scientific approach.

After a bit of research on what makes people haul their asses off the couch to write checks, I stumbled upon this blogpost, from nonprofitquarterly.org, which explains that I should appeal to readers’ emotions rather than reason. From the post:

The essential difference between emotion and reason is that emotion leads to action, while reason leads to conclusions.

Let’s face it, regular readers of Naked Capitalism have come to enough conclusions to last a lifetime, and that’s why they come back for more! But now it’s time for action!

According to them, the leading emotions I should appeal to are as follows: